Purdue Online Writing Lab Purdue OWL® College of Liberal Arts

In-Text Citations: The Basics

This page is brought to you by the OWL at Purdue University. When printing this page, you must include the entire legal notice.

Copyright ©1995-2018 by The Writing Lab & The OWL at Purdue and Purdue University. All rights reserved. This material may not be published, reproduced, broadcast, rewritten, or redistributed without permission. Use of this site constitutes acceptance of our terms and conditions of fair use.

Note: This page reflects the latest version of the APA Publication Manual (i.e., APA 7), which was released in October 2019. An equivalent resource is available for the older APA 6 style.

Reference citations in text are covered on pages 261-268 of the Publication Manual. What follows are some general guidelines for referring to the works of others in your essay.

Note: On pages 117-118, the Publication Manual suggests that authors of research papers should use the past tense or present perfect tense for signal phrases that occur in the literature review and procedure descriptions (for example, Jones (1998) found or Jones (1998) has found ...). Contexts other than traditionally-structured research writing may permit the simple present tense (for example, Jones (1998) finds).

APA Citation Basics

When using APA format, follow the author-date method of in-text citation. This means that the author's last name and the year of publication for the source should appear in the text, for example, (Jones, 1998). One complete reference for each source should appear in the reference list at the end of the paper.

If you are referring to an idea from another work but NOT directly quoting the material, or if you are referring to an entire book, article, or other work, your in-text reference only needs the author and year of publication, not the page number.

On the other hand, if you are directly quoting or borrowing from another work, you should include the page number at the end of the parenthetical citation. Use the abbreviation “p.” (for one page) or “pp.” (for multiple pages) before listing the page number(s). Use an en dash for page ranges. For example, you might write (Jones, 1998, p. 199) or (Jones, 1998, pp. 199–201). This information is reiterated below.
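The page-number rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `parenthetical` and its parameters are hypothetical and are not part of any APA tool or library.

```python
from typing import Optional


def parenthetical(author: str, year: int,
                  first_page: Optional[int] = None,
                  last_page: Optional[int] = None) -> str:
    """Build an author-date parenthetical citation (hypothetical helper).

    With no page, only author and year appear. A single page uses "p.";
    a page range uses "pp." with an en dash between the page numbers.
    """
    parts = [author, str(year)]
    if first_page is not None:
        if last_page is None or last_page == first_page:
            parts.append(f"p. {first_page}")
        else:
            # \u2013 is the en dash used for page ranges
            parts.append(f"pp. {first_page}\u2013{last_page}")
    return "(" + ", ".join(parts) + ")"


print(parenthetical("Jones", 1998))            # paraphrase or whole work
print(parenthetical("Jones", 1998, 199))       # direct quote, one page
print(parenthetical("Jones", 1998, 199, 201))  # direct quote, page range
```

The three calls correspond to the three cases in the text: no page number, a single page, and a page range.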

Regardless of how they are referenced, all sources that are cited in the text must appear in the reference list at the end of the paper.

In-text citation capitalization, quotes, and italics/underlining

- Always capitalize proper nouns, including author names and initials: D. Jones.

- If you refer to the title of a source within your paper, capitalize all words in the title that are four letters long or longer: Permanence and Change. Shorter words that are verbs, nouns, pronouns, adjectives, or adverbs are also capitalized: Writing New Media, There Is Nothing Left to Lose.

(Note: in your References list, only the first word of a title will be capitalized: Writing new media.)

- When capitalizing titles, capitalize both words in a hyphenated compound word: Natural-Born Cyborgs.

- Capitalize the first word after a dash or colon: "Defining Film Rhetoric: The Case of Hitchcock's Vertigo."

- If the title of the work is italicized in your reference list, italicize it and use title case capitalization in the text: The Closing of the American Mind; The Wizard of Oz; Friends.

- If the title of the work is not italicized in your reference list, use double quotation marks and title case capitalization (even though the reference list uses sentence case): "Multimedia Narration: Constructing Possible Worlds;" "The One Where Chandler Can't Cry."
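The capitalization rules in this list can be approximated in code. The sketch below is a rough Python illustration, not an official APA algorithm. It assumes a small, hand-picked set of short articles, conjunctions, and prepositions (`MINOR_WORDS`) that stay lowercase, because code cannot easily tell whether a short word is a verb or a noun. The function name `title_case` is likewise hypothetical.

```python
# Assumed (non-exhaustive) set of short words left in lowercase.
MINOR_WORDS = {
    "a", "an", "the", "and", "but", "or", "nor", "for", "so", "yet",
    "as", "at", "by", "in", "of", "off", "on", "per", "to", "up", "via",
}


def title_case(title: str) -> str:
    """Approximate in-text title case for a title (illustrative sketch)."""
    result = []
    force_capital = True  # the first word is always capitalized
    for word in title.split():
        if force_capital or word.lower() not in MINOR_WORDS:
            # Capitalize each part of a hyphenated compound.
            word = "-".join(part[:1].upper() + part[1:]
                            for part in word.split("-"))
        else:
            word = word.lower()
        # The word after a colon or dash is capitalized.
        force_capital = word.endswith((":", "\u2013", "\u2014"))
        result.append(word)
    return " ".join(result)


print(title_case("there is nothing left to lose"))
print(title_case("natural-born cyborgs"))
```

Short verbs such as "Is" are capitalized here only because they are absent from the assumed word set. A real title may still need a manual check.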

Short quotations

If you are directly quoting from a work, you will need to include the author, year of publication, and page number for the reference (preceded by "p." for a single page and “pp.” for a span of multiple pages, with the page numbers separated by an en dash).

You can introduce the quotation with a signal phrase that includes the author's last name followed by the date of publication in parentheses.

If you do not include the author’s name in the text of the sentence, place the author's last name, the year of publication, and the page number in parentheses after the quotation.

Long quotations

Place direct quotations that are 40 words or longer in a free-standing block of typewritten lines and omit quotation marks. Start the quotation on a new line, indented 1/2 inch from the left margin, i.e., in the same place you would begin a new paragraph. Type the entire quotation on the new margin, and indent the first line of any subsequent paragraph within the quotation 1/2 inch from the new margin. Maintain double-spacing throughout, but do not add an extra blank line before or after it. The parenthetical citation should come after the closing punctuation mark.
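As a rough illustration of the 40-word threshold, the Python sketch below decides whether a quotation should be set as a block. Counting words by splitting on whitespace is an assumption made for this example, and the names `BLOCK_QUOTE_MIN_WORDS` and `needs_block_format` are hypothetical.

```python
BLOCK_QUOTE_MIN_WORDS = 40  # threshold stated in the guideline above


def needs_block_format(quotation: str) -> bool:
    """Return True when a quotation is 40 words or longer.

    Words are counted by splitting on whitespace, which is a
    simplification for this sketch.
    """
    return len(quotation.split()) >= BLOCK_QUOTE_MIN_WORDS


short_quote = "It was the best of times, it was the worst of times"
print(needs_block_format(short_quote))  # short quotation stays inline
```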

Because block quotation formatting is difficult for us to replicate in the OWL's content management system, we have simply provided a screenshot of a generic example below.

Formatting example for block quotations in APA 7 style.

Quotations from sources without pages

Direct quotations from sources that do not contain pages should not reference a page number. Instead, you may reference another logical identifying element: a paragraph, a chapter number, a section number, a table number, or something else. Older works (like religious texts) can also incorporate special location identifiers like verse numbers. In short: pick a substitute for page numbers that makes sense for your source.

Summary or paraphrase

If you are paraphrasing an idea from another work, you only have to make reference to the author and year of publication in your in-text reference and may omit the page numbers. APA guidelines, however, do encourage including a page range for a summary or paraphrase when it will help the reader find the information in a longer work.

Appropriate Level of Citation

The number of sources you cite in your paper depends on the purpose of your work. For most papers, cite one or two of the most representative sources for each key point. Literature review papers, however, typically include a more exhaustive list of references.

Provide appropriate credit to the source (e.g., by using an in-text citation) whenever you do the following:

- paraphrase (i.e., state in your own words) the ideas of others

- directly quote the words of others

- refer to data or data sets

- reprint or adapt a table or figure, even images from the internet that are free or licensed in the Creative Commons

- reprint a long text passage or commercially copyrighted test item

Avoid both undercitation and overcitation. Undercitation can lead to plagiarism and/or self-plagiarism. Overcitation can be distracting and is unnecessary.

For example, it is considered overcitation to repeat the same citation in every sentence when the source and topic have not changed. Instead, when paraphrasing a key point in more than one sentence within a paragraph, cite the source in the first sentence in which it is relevant and do not repeat the citation in subsequent sentences as long as the source remains clear and unchanged.

Figure 8.1 in Chapter 8 of the Publication Manual provides an example of an appropriate level of citation.

Determining the appropriate level of citation is covered in the seventh edition APA Style manuals in the Publication Manual Section 8.1 and the Concise Guide Section 8.1.

From the APA Style blog

How to cite your own translations

If you translate a passage from one language into another on your own in your paper, your translation is considered a paraphrase, not a direct quotation.

Key takeaways from the Psi Chi webinar So You Need to Write a Literature Review

This blog post describes key tasks in writing an effective literature review and provides strategies for approaching those tasks.

How to cite a work with a nonrecoverable source

In most cases, nonrecoverable sources such as personal emails, nonarchived social media livestreams (or deleted and unarchived social media posts), classroom lectures, unrecorded webinars or presentations, and intranet sources should be cited only in the text as personal communications.

The “outdated sources” myth

The “outdated sources” myth is that sources must have been published recently, such as the last 5 to 10 years. There is no timeliness requirement in APA Style.

From COVID-19 to demands for social justice: Citing contemporary sources for current events

The guidance in the seventh edition of the Publication Manual makes the process of citing contemporary sources found online easier than ever before.

Citing classical and religious works

A classical or religious work is cited as either a book or a webpage, depending on what version of the source you are using. This post includes details and examples.

APA Citation Style, 7th Edition: In-Text Citations & Paraphrasing

When do I use in-text citations?

When should you add in-text citations in your paper?

There are several rules of thumb you can follow to make sure that you are citing your paper correctly in APA 7 format.

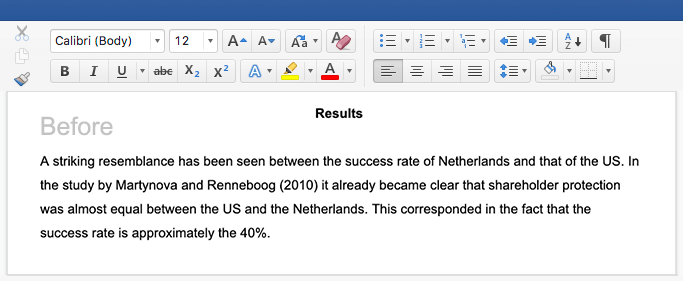

- Think of your paper as broken up into paragraphs. The first time a paragraph includes a sentence that has been paraphrased from a reference, add an in-text citation.

- As you continue writing the paragraph, you do NOT need to add another in-text citation until (1) you paraphrase from a NEW source, which means you need to cite NEW information, or (2) you need to cite a DIRECT quote, which includes a page number, paragraph number, or section title.

- Important to remember: You DO NOT need to add an in-text citation after EVERY sentence of your paragraph.

What do in-text citations look like?

In-text citation styles:

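| Author type | Parenthetical citation | Narrative citation |
| --- | --- | --- |
| One author | (Forbes, 2020) | Forbes (2020) stated... |
| Two authors | (Bennett & Miller, 2019) | Bennett and Miller (2019) concluded that... |
| Three or more authors | (Jones et al., 2020) | Jones et al. (2020) shared two different... |
| Group/corporate author | (East Carolina University, 2020) | East Carolina University (2020) found... |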
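The author patterns in this table can be expressed as a short Python sketch. It is illustrative only: the function names `format_authors` and `cite` are hypothetical, and the co-author names "Smith" and "Lee" in the usage lines are invented placeholders.

```python
def format_authors(authors: list[str], narrative: bool) -> str:
    """Join author names as in the table above (illustrative sketch).

    One author (or a group author) is used as-is, two authors are joined
    with "&" (parenthetical) or "and" (narrative), and three or more
    authors are shortened to the first author plus "et al."
    """
    if not authors:
        raise ValueError("at least one author is required")
    if len(authors) == 1:
        return authors[0]
    if len(authors) == 2:
        joiner = " and " if narrative else " & "
        return joiner.join(authors)
    return f"{authors[0]} et al."


def cite(authors: list[str], year: int, narrative: bool = False) -> str:
    """Return a parenthetical or narrative author-date citation."""
    names = format_authors(authors, narrative)
    if narrative:
        return f"{names} ({year})"
    return f"({names}, {year})"


print(cite(["Forbes"], 2020))
print(cite(["Bennett", "Miller"], 2019, narrative=True))
print(cite(["Jones", "Smith", "Lee"], 2020))  # co-authors are placeholders
print(cite(["East Carolina University"], 2020))
```

A group author is passed as a single list entry, so it is never shortened to "et al."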

Let's look at these examples if they were written in text:

An example with 1 author:

Parenthetical citation: Following American Psychological Association (APA) style guidelines will help you to cultivate your own unique academic voice as an expert in your field (Forbes, 2020).

Narrative citation: Forbes (2020) shared that by following American Psychological Association (APA) guidelines, students would learn to find their own voice as experts in the field of nursing.

An example with 2 authors:

Parenthetical citation: Research on the use of progressive muscle relaxation for stress reduction has demonstrated the efficacy of the method (Bennett & Miller, 2019).

Narrative citation: As shared by Bennett and Miller (2019), research on the use of progressive muscle relaxation for stress reduction has demonstrated the efficacy of the method.

An example with 3 or more authors:

Parenthetical citation: Guided imagery has also been shown to reduce stress, length of hospital stay, and symptoms related to medical and psychological conditions (Jones et al., 2020).

Narrative citation: Jones et al. (2020) shared that guided imagery has also been shown to reduce stress, length of hospital stay, and symptoms related to medical and psychological conditions.

An example with a group/corporate author:

Parenthetical citation: Dr. Philip G. Rogers, senior vice president at the American Council on Education, was recently elected as the newest chancellor of the university (East Carolina University, 2020).

Narrative citation: Recently shared on the East Carolina University (2020) website, Dr. Philip G. Rogers, senior vice president at the American Council on Education, was elected as the newest chancellor.

Tips on Paraphrasing

Paraphrasing is restating someone else's ideas in your own words and thoughts, without changing the original meaning (Gahan, 2020).

Here are some best practices when you are paraphrasing:

- How do I learn to paraphrase? If you are thoroughly reading and researching articles or book chapters for a paper, you will start to take notes in your own words. Those notes are the beginning of paraphrased information.

- Read the original information, PUT IT AWAY, then rewrite the ideas in your own words. This is hard to do at first and takes practice, but this is how you start to paraphrase.

- It's usually better to paraphrase than to use too many direct quotes.

- When you start to paraphrase, cite your source.

- Make sure not to use language that is TOO close to the original, so that you are not committing plagiarism.

- Use thesaurus.com to help you come up with similar phrases if you are struggling.

- Paraphrasing (vs. using direct quotes) is important because it shows that YOU ACTUALLY UNDERSTAND the information you are reading.

- Paraphrasing ALLOWS YOUR VOICE to be prevalent in your writing.

- The best time to use direct quotes is when you need to give an exact definition, provide specific evidence, or if you need to use the original writer's terminology.

- BEST PRACTICE PER PARAGRAPH: On your 1st paraphrase of a source, CITE IT. There is no need to add another in-text citation until you use a different source, OR, until you use a direct quote.

References:

Gahan, C. (2020, October 15). How to paraphrase sources. Scribbr.com. https://tinyurl.com/y7ssxc6g

Citing Direct Quotes

When should I use a direct quote in my paper?

Direct quotes should only be used occasionally:

- When you need to share an exact definition

- When you want to provide specific evidence or information that cannot be paraphrased

- When you want to use the original writer's terminology

From: https://americanlibrariesmagazine.org/whaddyamean/

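Definitions of direct quotes:

| Type of direct quote | Example citation |
| --- | --- |
| Short quotes (fewer than 40 words), with quotation marks around the quote, are incorporated into the text of the paper. | (Shayden, 2016, p. 202) |
| Long quotes (40 words or more) are formatted as a block (by indenting 0.5" or 1 tab) beneath the text of the paragraph. | (Miller et al., 2016, p. 136) |
| Some sources do not have page numbers, therefore you need a different way to cite the information for a direct quote. There are two ways to do this: cite a paragraph number or cite a section name. | (Jones, 2014, para. 4) (Scotts, 2019, Resources section) |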

- Western Oregon University's APA Guidelines on Direct Quotes This is an excellent quick tutorial on how to format direct quotes in APA 7th edition. Bookmark this page for future reference!

Carrie Forbes, MLS

- Last Updated: Jan 12, 2024 10:05 AM

- URL: https://libguides.ecu.edu/APA7

APA Style (7th ed.)

How to cite in APA Style (7th edition)

Most academic writing cites others' ideas and research, for several reasons:

- Sources that support your ideas give your paper authority and credibility

- Citing sources shows you have researched your topic thoroughly

- Crediting sources protects you from plagiarism

- A list of sources can be a useful record for further research

Different academic disciplines prefer different citation styles, most commonly APA and MLA styles.

Besides these styles, there are Chicago, Turabian, AAA, AP, and more. Only use the most current edition of the citation style.

Ask your instructors which citation style they want you to use for assignments.

More questions? Check out the authoritative source: APA style blog

When to cite.

To avoid plagiarism, provide a citation for ideas that are not your own:

- Direct quotation

- Paraphrasing of a quotation, passage, or idea

- Summary of another's idea or research

- Specific reference to a fact, figure, or phrase

You do not need to cite common knowledge (e.g., George Washington was the first President of the United States) or proverbs unless you are using a direct quotation. When in doubt, cite your source.

- Last Updated: Jun 17, 2024 12:51 PM

- URL: https://libguides.uww.edu/apa

Citing your sources:

- is the right thing to do to give credit to those who had the idea

- shows that you have read and understand what experts have had to say about your topic

- helps people find the sources that you used in case they want to read more about the topic

- provides evidence for your arguments

- is professional and standard practice for students and scholars

What is a Citation?

A citation identifies for the reader the original source for an idea, information, or image that is referred to in a work.

- In the body of a paper, the in-text citation acknowledges the source of information used.

- At the end of a paper, the citations are compiled on a References or Works Cited list. A basic citation includes the author, title, and publication information of the source.

From: Lemieux Library, Seattle University

Why Should You Cite?

- Quoting: Are you quoting two or more consecutive words from a source? Then the original source should be cited and the words or phrase placed in quotes.

- Paraphrasing: If an idea or information comes from another source, even if you put it in your own words, you still need to credit the source.

- General vs. Unfamiliar Knowledge: You do not need to cite material which is accepted common knowledge. If in doubt whether your information is common knowledge or not, cite it.

- Formats: We usually think of books and articles. However, if you use material from web sites, films, music, graphs, tables, etc., you'll need to cite these as well.

Plagiarism is presenting the words or ideas of someone else as your own without proper acknowledgment of the source. When you work on a research paper and use supporting material from works by others, it's okay to quote people and use their ideas, but you do need to correctly credit them. Even when you summarize or paraphrase information found in books, articles, or Web pages, you must acknowledge the original author.

Citation Style Help

Helpful links:

- MLA, Works Cited: A Quick Guide (a template of core elements)

- CSE (Council of Science Editors)

For additional writing resources specific to styles listed here, visit the Purdue OWL Writing Lab.

Citation and Bibliography Resources

- How to Write an Annotated Bibliography

Creative Commons Attribution 3.0 License except where otherwise noted.

Land Acknowledgement

The land on which we gather is the unceded territory of the Awaswas-speaking Uypi Tribe. The Amah Mutsun Tribal Band, comprised of the descendants of indigenous people taken to missions Santa Cruz and San Juan Bautista during Spanish colonization of the Central Coast, is today working hard to restore traditional stewardship practices on these lands and heal from historical trauma.

The land acknowledgement used at UC Santa Cruz was developed in partnership with the Amah Mutsun Tribal Band Chairman and the Amah Mutsun Relearning Program at the UCSC Arboretum.

How to Cite Sources

Here is a complete list for how to cite sources. Most of these guides present citation guidance and examples in MLA, APA, and Chicago.

If you’re looking for general information on MLA or APA citations, the EasyBib Writing Center was designed for you! It has articles on what’s needed in an MLA in-text citation, how to format an APA paper, what an MLA annotated bibliography is, making an MLA works cited page, and much more!

MLA Format Citation Examples

The Modern Language Association created the MLA Style, currently in its 9th edition, to provide researchers with guidelines for writing and documenting scholarly borrowings. Most often used in the humanities, MLA style (or MLA format) has been adopted and used by numerous other disciplines, in multiple parts of the world.

MLA provides standard rules to follow so that most research papers are formatted in a similar manner. This makes it easier for readers to comprehend the information. The MLA in-text citation guidelines, MLA works cited standards, and MLA annotated bibliography instructions provide scholars with the information they need to properly cite sources in their research papers, articles, and assignments.

- Book Chapter

- Conference Paper

- Documentary

- Encyclopedia

- Google Images

- Kindle Book

- Memorial Inscription

- Museum Exhibit

- Painting or Artwork

- PowerPoint Presentation

- Sheet Music

- Thesis or Dissertation

- YouTube Video

APA Format Citation Examples

The American Psychological Association created the APA citation style in 1929 as a way to help psychologists, anthropologists, and even business managers establish one common way to cite sources and present content.

APA is used when citing sources in academic writing such as journal articles, and is intended to help readers better comprehend content and to avoid language bias wherever possible. The APA style (or APA format) is now in its 7th edition, and provides citation style guides for virtually any type of resource.

Chicago Style Citation Examples

The Chicago/Turabian style of citing sources is generally used when citing sources for humanities papers, and is best known for its requirement that writers place bibliographic citations at the bottom of a page (in Chicago-format footnotes) or at the end of a paper (endnotes).

The Turabian and Chicago citation styles are almost identical, but the Turabian style is geared towards student papers such as theses and dissertations, while the Chicago style provides guidelines for all types of publications. This is why you’ll commonly see Chicago style and Turabian style presented together. The Chicago Manual of Style is currently in its 17th edition, and Turabian’s A Manual for Writers of Research Papers, Theses, and Dissertations is in its 9th edition.

Citing Specific Sources or Events

- Declaration of Independence

- Gettysburg Address

- Martin Luther King Jr. Speech

- President Obama’s Farewell Address

- President Trump’s Inauguration Speech

- White House Press Briefing

Additional FAQs

- Citing Archived Contributors

- Citing a Blog

- Citing a Book Chapter

- Citing a Source in a Foreign Language

- Citing an Image

- Citing a Song

- Citing Special Contributors

- Citing a Translated Article

- Citing a Tweet

6 Interesting Citation Facts

The world of citations may seem cut and dried, but there’s more to them than just specific capitalization rules, MLA in-text citations, and other formatting specifications. Citations have been helping researchers document their sources for hundreds of years, and are a great way to learn more about a particular subject area.

Ever wonder what sets all the different styles apart, or how they came to be in the first place? Read on for some interesting facts about citations!

1. There are Over 7,000 Different Citation Styles

You may be familiar with MLA and APA citation styles, but there are actually thousands of citation styles used for all different academic disciplines all across the world. Deciding which one to use can be difficult, so be sure to ask your instructor which one you should be using for your next paper.

2. Some Citation Styles are Named After People

While a majority of citation styles are named for the specific organizations that publish them (e.g., APA is published by the American Psychological Association, and MLA format is named for the Modern Language Association), some are actually named after individuals. The most well-known example of this is perhaps Turabian style, named for Kate L. Turabian, an American educator and writer. She developed this style as a condensed version of the Chicago Manual of Style in order to present a more concise set of rules to students.

3. There are Some Really Specific and Uniquely Named Citation Styles

How specific can citation styles get? The answer is very. For example, the “Flavour and Fragrance Journal” style is based on a bimonthly, peer-reviewed scientific journal published since 1985 by John Wiley & Sons. It publishes original research articles, reviews and special reports on all aspects of flavor and fragrance. Another example is “Nordic Pulp and Paper Research,” a style used by an international scientific magazine covering science and technology for the areas of wood or bio-mass constituents.

4. More citations were created on EasyBib.com in the first quarter of 2018 than there are people in California.

The US Census Bureau estimates that approximately 39.5 million people live in the state of California. Meanwhile, about 43 million citations were made on EasyBib from January to March of 2018. That’s a lot of citations.

5. “Citations” is a Word With a Long History

The word “citations” can be traced back literally thousands of years to the Latin word “citare” meaning “to summon, urge, call; put in sudden motion, call forward; rouse, excite.” The word then took on its more modern meaning and relevance to writing papers in the 1600s, where it became known as the “act of citing or quoting a passage from a book, etc.”

6. Citation Styles are Always Changing

The concept of citations always stays the same. It is a means of preventing plagiarism and demonstrating where you relied on outside sources. The specific style rules, however, can and do change regularly. For example, in 2018 alone, 46 new citation styles were introduced, and 106 updates were made to existing styles. At EasyBib, we are always on the lookout for ways to improve our styles and opportunities to add new ones to our list.

Why Citations Matter

Here are the ways accurate citations can help your students achieve academic success, and how you can answer the dreaded question, “why should I cite my sources?”

They Give Credit to the Right People

Citing their sources makes sure that the reader can differentiate the student’s original thoughts from those of other researchers. Not only does this make sure that the sources they use receive proper credit for their work, it ensures that the student receives deserved recognition for their unique contributions to the topic. Whether the student is citing in MLA format, APA format, or any other style, citations serve as a natural way to place a student’s work in the broader context of the subject area, and serve as an easy way to gauge their commitment to the project.

They Provide Hard Evidence of Ideas

Having many citations from a wide variety of sources related to their idea means that the student is working on a well-researched and respected subject. Citing sources that back up their claim creates room for fact-checking and further research. And, if they can cite a few sources that have the converse opinion or idea, and then demonstrate to the reader why they believe that that viewpoint is wrong by again citing credible sources, the student is well on their way to winning over the reader and cementing their point of view.

They Promote Originality and Prevent Plagiarism

The point of research projects is not to regurgitate information that can already be found elsewhere. We have Google for that! What the student’s project should aim to do is promote an original idea or a spin on an existing idea, and use reliable sources to promote that idea. Copying or directly referencing a source without proper citation can lead to not only a poor grade, but accusations of academic dishonesty. By citing their sources regularly and accurately, students can easily avoid the trap of plagiarism, and promote further research on their topic.

They Create Better Researchers

By researching sources to back up and promote their ideas, students are becoming better researchers without even knowing it! Each time a new source is read or researched, the student is becoming more engaged with the project and is developing a deeper understanding of the subject area. Proper citations demonstrate a breadth of the student’s reading and dedication to the project itself. By creating citations, students are compelled to make connections between their sources and discern research patterns. Each time they complete this process, they are helping themselves become better researchers and writers overall.

When is the Right Time to Start Making Citations?

1. Make In-Text/Parenthetical Citations as You Need Them

As you are writing your paper, be sure to include references within the text that correspond with references in a works cited or bibliography. These are usually called in-text citations or parenthetical citations in MLA and APA formats. The most effective time to complete these is directly after you have made your reference to another source. For instance, after writing the line from Charles Dickens’ A Tale of Two Cities: “It was the best of times, it was the worst of times…,” you would include a citation like this (depending on your chosen citation style):

(Dickens 11).

This signals to the reader that you have referenced an outside source. What’s great about this system is that the in-text citations serve as a natural list for all of the citations you have made in your paper, which will make completing the works cited page a whole lot easier. After you are done writing, all that will be left for you to do is scan your paper for these references, and then build a works cited page that includes a citation for each one.

Need help creating an MLA works cited page? Try the MLA format generator on EasyBib.com! We also have a guide on how to format an APA reference page.

2. Understand the General Formatting Rules of Your Citation Style Before You Start Writing

While reading up on paper formatting may not sound exciting, being aware of how your paper should look early on in the paper writing process is super important. Citation styles dictate more than just the appearance of the citations themselves; they can also affect the layout of your paper as a whole, with specific guidelines concerning margin width, title treatment, and even font size and spacing. Knowing how to organize your paper before you start writing will ensure that you do not receive a low grade for something as trivial as forgetting a hanging indent.

3. Double-check All of Your Outside Sources for Relevance and Trustworthiness First

Collecting outside sources that support your research and specific topic is a critical step in writing an effective paper. But before you run to the library and grab the first 20 books you can lay your hands on, keep in mind that selecting a source to include in your paper should not be taken lightly. Before you use a source to back up your ideas, run a quick Internet search for it and see if other scholars in your field have written about it as well. Check to see if there are book reviews about it or peer accolades. If you spot something that seems off to you, you may want to consider leaving it out of your work. Doing this before you start making citations can save you a ton of time in the long run.

Citing Sources: Which citation style should I use?

The citation style you choose will largely be dictated by the discipline in which you're writing. For many assignments your instructor will suggest or require a certain style. If you're not sure which one to use, it's always best to check with your instructor or, if you are submitting a manuscript, the publisher to see if they require a certain style. In many cases, you may not be required to use any particular style as long as you pick one and use it consistently. If you have some flexibility, use the guide below to help you decide.

Disciplinary Citation Styles

- Social Sciences

- Sciences & Medicine

- Engineering

When in doubt, try: Chicago Notes

- Architecture & Landscape Architecture → try Chicago Notes or Chicago Author-Date

- Art → try Chicago Notes

- Art History → use Chicago Notes

- Dance → try Chicago Notes or MLA

- Drama → try Chicago Notes or MLA

- Ethnomusicology → try Chicago Notes

- Music → try Chicago Notes

- Music History → use Chicago Notes

- Urban Design & Planning → try Chicago Notes or Chicago Author-Date

When in doubt, try: MLA

- Cinema Studies → try MLA

- Classics → try Chicago Notes

- English → use MLA

- History → use Chicago Notes

- Linguistics → try MLA

- Languages → try MLA

- Literatures → use MLA

- Philosophy → try MLA

- Religion → try Chicago Notes

When in doubt, try: APA or Chicago Notes

- Anthropology → try Chicago Author-Date

- Business → try APA (see also Citing Business Information from Foster Library)

- Communication → try APA

- Criminology & Criminal Justice → try Chicago Author-Date

- Economics → try APA

- Education → try APA

- Geography → try APA

- Government & Law (for non-law students) → try Chicago Notes

- History → try Chicago Notes

- Informatics → try APA

- Law (for law students) → use Bluebook

- Library & Information Science → try APA

- Museology → try Chicago Notes

- Political Science → try Chicago Notes

- Psychology → use APA

- Social Work → try APA

- Sociology → use ASA or Chicago Author-Date

When in doubt, try: CSE Name-Year or CSE Citation-Sequence

- Aquatic & Fisheries Sciences → try CSE Name-Year or APA

- Astronomy → try AIP or CSE Citation-Sequence

- Biology & Life Sciences → try CSE Name-Year or APA

- Chemistry → try ACS

- Earth & Space Sciences → try CSE Name-Year or APA

- Environmental Sciences → try CSE Name-Year or APA

- Forest Sciences → try CSE Name-Year or APA

- Health Sciences: Public Health, Medicine, & Nursing → use AMA or NLM

- Marine Sciences → try CSE Name-Year or APA

- Mathematics → try AMS or CSE Citation-Sequence

- Oceanography → try CSE Name-Year or APA

- Physics → try AIP or CSE Citation-Sequence

- Psychology → use APA

When in doubt, try: CSE Name-Year or IEEE

- Aeronautics and Astronautics → try CSE Citation-Sequence

- Bioengineering → try AMA or NLM

- Chemical Engineering → try ACS

- Civil and Environmental Engineering → try CSE Name-Year

- Computational Linguistics → try CSE Citation-Sequence

- Computer Science & Engineering → try IEEE

- Electrical and Computer Engineering → try IEEE

- Engineering (general) → try IEEE or CSE Name-Year

- Human Centered Design & Engineering → try IEEE

- Human-Computer Interaction + Design → try IEEE

- Industrial and Systems Engineering → try CSE Name-Year

- Mechanical Engineering → try Chicago Notes or Chicago Author-Date

See also: Additional Citation Styles, for styles used by specific engineering associations.

Pro Tip: Citation Tools Save Time & Stress!

If you’re enrolled in classes that each require a different citation style, it can get confusing really fast! The tools in the Quick Citation Generators section can help you format citations quickly in many different styles.

- Last Updated: May 1, 2024 12:48 PM

- URL: https://guides.lib.uw.edu/research/citations

A Quick Guide to Harvard Referencing | Citation Examples

Published on 14 February 2020 by Jack Caulfield. Revised on 15 September 2023.

Referencing is an important part of academic writing. It tells your readers what sources you’ve used and how to find them.

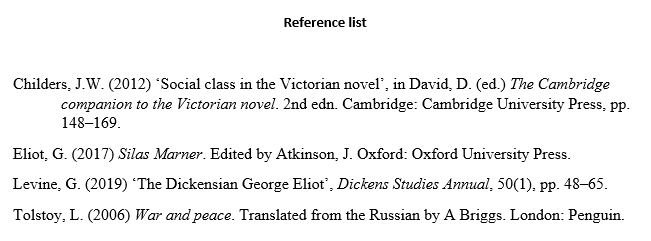

Harvard is the most common referencing style used in UK universities. In Harvard style, the author and year are cited in-text, and full details of the source are given in a reference list.

| In-text citation | Referencing is an essential academic skill (Pears and Shields, 2019). |
| Reference list entry | Pears, R. and Shields, G. (2019) Cite them right: The essential referencing guide. 11th edn. London: MacMillan. |

Table of contents

- Harvard in-text citation

- Creating a Harvard reference list

- Harvard referencing examples

- Referencing sources with no author or date

- Frequently asked questions about Harvard referencing

A Harvard in-text citation appears in brackets beside any quotation or paraphrase of a source. It gives the last name of the author(s) and the year of publication, as well as a page number or range locating the passage referenced, if applicable:

Note that ‘p.’ is used for a single page, ‘pp.’ for multiple pages (e.g. ‘pp. 1–5’).

An in-text citation usually appears immediately after the quotation or paraphrase in question. It may also appear at the end of the relevant sentence, as long as it’s clear what it refers to.

When your sentence already mentions the name of the author, it should not be repeated in the citation:

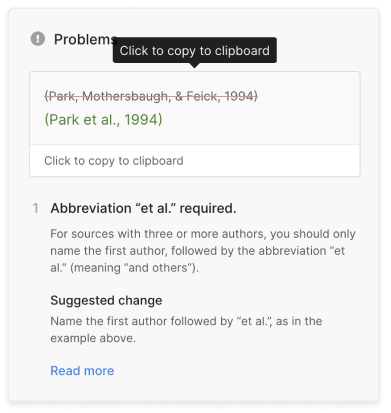

Sources with multiple authors

When you cite a source with up to three authors, cite all authors’ names. For four or more authors, list only the first name, followed by ‘et al.’:

| Number of authors | In-text citation example |
|---|---|
| 1 author | (Davis, 2019) |
| 2 authors | (Davis and Barrett, 2019) |
| 3 authors | (Davis, Barrett and McLachlan, 2019) |
| 4+ authors | (Davis et al., 2019) |
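
The author-count rule above can be sketched in code. This is a minimal Python illustration rather than any official Harvard tooling: the function name `harvard_in_text` and its parameters are hypothetical, and it assumes surnames are supplied in the order they appear on the source and that a page range is given as a `(first, last)` pair.

```python
def harvard_in_text(surnames, year, pages=None):
    """Build a Harvard in-text citation such as (Davis and Barrett, 2019).

    surnames: list of author surnames in source order (hypothetical input shape).
    pages: optional (first, last) tuple; equal values mean a single page.
    """
    if not surnames:
        raise ValueError("at least one author surname is required")
    if len(surnames) >= 4:
        # Four or more authors: first surname followed by 'et al.'
        names = f"{surnames[0]} et al."
    elif len(surnames) == 1:
        names = surnames[0]
    else:
        # Two or three authors: list all, joining the last with 'and'
        names = ", ".join(surnames[:-1]) + " and " + surnames[-1]
    citation = f"{names}, {year}"
    if pages is not None:
        first, last = pages
        # 'p.' for one page, 'pp.' with an en dash for a range
        citation += f", p. {first}" if first == last else f", pp. {first}–{last}"
    return f"({citation})"


print(harvard_in_text(["Davis", "Barrett", "McLachlan"], 2019))
print(harvard_in_text(["Davis", "Barrett", "McLachlan", "Smith"], 2019, pages=(1, 5)))
```

Keeping the page locator optional mirrors the guidance that it is included only where applicable.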

Sources with no page numbers

Some sources, such as websites, often don’t have page numbers. If the source is a short text, you can simply leave out the page number. With longer sources, you can use an alternate locator such as a subheading or paragraph number if you need to specify where to find the quote:

Multiple citations at the same point

When you need multiple citations to appear at the same point in your text – for example, when you refer to several sources with one phrase – you can present them in the same set of brackets, separated by semicolons. List them in order of publication date:
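
As a rough sketch of this rule, the snippet below joins several citations inside one set of brackets, oldest first. The function name `combine_citations` is hypothetical, and the author names and years are invented purely for illustration.

```python
def combine_citations(sources):
    """Join (author_label, year) pairs in one bracket, ordered by publication year.

    sources: list of (author_label, year) tuples (hypothetical input shape).
    """
    # sorted() is stable, so sources from the same year keep their given order
    ordered = sorted(sources, key=lambda source: source[1])
    return "(" + "; ".join(f"{author}, {year}" for author, year in ordered) + ")"


print(combine_citations([("Singh", 2018), ("Grayson", 2015), ("Harding", 2016)]))
```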

Multiple sources with the same author and date

If you cite multiple sources by the same author which were published in the same year, it’s important to distinguish between them in your citations. To do this, insert an ‘a’ after the year in the first one you reference, a ‘b’ in the second, and so on:
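
The lettering scheme can be illustrated with a short Python sketch. The helper name `add_year_suffixes` and the sample authors are hypothetical; the sketch assumes each list item is a distinct source, given in the order in which the sources are first referenced.

```python
from collections import Counter


def add_year_suffixes(sources):
    """Append 'a', 'b', ... to the year when an author has several sources in one year.

    sources: list of (author, year) pairs, one per distinct source,
    in order of first reference (hypothetical input shape).
    """
    totals = Counter(sources)  # how many sources share each (author, year)
    seen = Counter()           # how many of each pair have been labelled so far
    labelled = []
    for author, year in sources:
        key = (author, year)
        if totals[key] > 1:
            suffix = chr(ord("a") + seen[key])
            seen[key] += 1
            labelled.append((author, f"{year}{suffix}"))
        else:
            labelled.append((author, str(year)))
    return labelled


print(add_year_suffixes([("Woodhouse", 2018), ("Woodhouse", 2018), ("Davis", 2019)]))
```

Only author and year pairs that actually collide receive a letter, so unambiguous citations stay unchanged.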

A bibliography or reference list appears at the end of your text. It lists all your sources in alphabetical order by the author’s last name, giving complete information so that the reader can look them up if necessary.

The reference entry starts with the author’s last name followed by initial(s). Only the first word of the title is capitalised (as well as any proper nouns).

Sources with multiple authors in the reference list

As with in-text citations, up to three authors should be listed; when there are four or more, list only the first author followed by ‘et al.’:

| Number of authors | Reference example |
|---|---|
| 1 author | Davis, V. (2019) … |
| 2 authors | Davis, V. and Barrett, M. (2019) … |
| 3 authors | Davis, V., Barrett, M. and McLachlan, F. (2019) … |
| 4+ authors | Davis, V. et al. (2019) … |
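
The same rule for the reference list can be sketched as follows. This is only an illustration under stated assumptions: the function name `harvard_reference_authors` is hypothetical, and each author is assumed to be a `(surname, initials)` pair with the initials already punctuated.

```python
def harvard_reference_authors(authors, year):
    """Format the author and year part of a Harvard reference list entry.

    authors: list of (surname, initials) pairs, e.g. ("Davis", "V.").
    """
    if not authors:
        raise ValueError("at least one author is required")
    formatted = [f"{surname}, {initials}" for surname, initials in authors]
    if len(formatted) >= 4:
        # Four or more authors: first author followed by 'et al.'
        names = f"{formatted[0]} et al."
    elif len(formatted) == 1:
        names = formatted[0]
    else:
        names = ", ".join(formatted[:-1]) + " and " + formatted[-1]
    return f"{names} ({year})"


print(harvard_reference_authors([("Davis", "V."), ("Barrett", "M."), ("McLachlan", "F.")], 2019))
```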

Reference list entries vary according to source type, since different information is relevant for different sources. Formats and examples for the most commonly used source types are given below.

Entire book

| Format | Author surname, initial. (Year) Book title. City: Publisher. |
| Example | Smith, Z. (2017) . London: Penguin. |

Book chapter

| Format | Author surname, initial. (Year) ‘Chapter title’, in Editor name (ed(s).) Book title. City: Publisher, page range. |
| Example | Greenblatt, S. (2010) ‘The traces of Shakespeare’s life’, in De Grazia, M. and Wells, S. (eds.) . Cambridge: Cambridge University Press, pp. 1–14. |

Translated book

| Format | Author surname, initial. (Year) Book title. Translated from the [language] by Translator name. City: Publisher. |
| Example | Tokarczuk, O. (2019) . Translated from the Polish by A. Lloyd-Jones. London: Fitzcarraldo. |

Edition of a book

| Format | Author surname, initial. (Year) Book title. Edition. City: Publisher. |
| Example | Danielson, D. (ed.) (1999) . 2nd edn. Cambridge: Cambridge University Press. |

Journal articles

Print journal

| Format | Author surname, initial. (Year) ‘Article title’, Journal Name, Volume(Issue), pp. page range. |
| Example | Thagard, P. (1990) ‘Philosophy and machine learning’, , 20(2), pp. 261–276. |

Online-only journal with DOI

| Format | Author surname, initial. (Year) ‘Article title’, Journal Name, Volume(Issue), page range. DOI. |
| Example | Adamson, P. (2019) ‘American history at the foreign office: Exporting the silent epic Western’, , 31(2), pp. 32–59. doi: https://doi.org/10.2979/filmhistory.31.2.02. |
| Notes | if available. |

Online-only journal with no DOI

| Format | Author surname, initial. (Year) ‘Article title’, Journal Name, Volume(Issue), page range. Available at: URL (Accessed: Day Month Year). |
| Example | Theroux, A. (1990) ‘Henry James’s Boston’, , 20(2), pp. 158–165. Available at: https://www.jstor.org/stable/20153016 (Accessed: 13 February 2020). |

General web page

| Format | Author surname, initial. (Year) Page title. Available at: URL (Accessed: Day Month Year). |
| Example | Google (2019) . Available at: https://policies.google.com/terms?hl=en-US (Accessed: 27 January 2020). |

Online article or blog

| Format | Author surname, initial. (Year) ‘Article title’, Blog name, Date. Available at: URL (Accessed: Day Month Year). |
| Example | Leafstedt, E. (2020) ‘Russia’s constitutional reform and Putin’s plans for a legacy of stability’, , 29 January. Available at: https://blog.politics.ox.ac.uk/russias-constitutional-reform-and-putins-plans-for-a-legacy-of-stability/ (Accessed: 13 February 2020). |

Social media post

| Format | Author surname, initial. [username] (Year) Title or text [Website name] Date. Available at: URL (Accessed: Day Month Year). |
| Example | Dorsey, J. [@jack] (2018) We’re committing Twitter to help increase the collective health, openness, and civility of public conversation … [Twitter] 1 March. Available at: https://twitter.com/jack/status/969234275420655616 (Accessed: 13 February 2020). |

Sometimes you won’t have all the information you need for a reference. This section covers what to do when a source lacks a publication date or named author.

No publication date

When a source doesn’t have a clear publication date – for example, a constantly updated reference source like Wikipedia or an obscure historical document which can’t be accurately dated – you can replace it with the words ‘no date’:

| In-text citation | (Scribbr, no date) |

| Reference list entry | Scribbr (no date) . Available at: https://www.scribbr.co.uk/category/thesis-dissertation/ (Accessed: 14 February 2020). |

Note that when you do this with an online source, you should still include an access date, as in the example.

No author

When a source lacks a clearly identified author, there’s often an appropriate corporate source – the organisation responsible for the source – whom you can credit as author instead, as in the Google and Wikipedia examples above.

When that’s not the case, you can just replace it with the title of the source in both the in-text citation and the reference list:

| In-text citation | (‘Divest’, no date) |

| Reference list entry | ‘Divest’ (no date) Available at: https://www.merriam-webster.com/dictionary/divest (Accessed: 27 January 2020). |
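
The two fallbacks described in this section (‘no date’ for a missing year, the title in place of a missing author) can be sketched in Python. The function name `harvard_in_text_with_fallbacks` and its keyword parameters are hypothetical and only illustrate the substitution logic.

```python
def harvard_in_text_with_fallbacks(author=None, year=None, title=None):
    """Build an in-text citation, substituting the title or 'no date' where needed."""
    if author:
        label = author
    elif title:
        # No named or corporate author: use the source title in quotation marks
        label = f"‘{title}’"
    else:
        raise ValueError("either an author or a title is required")
    date = str(year) if year is not None else "no date"
    return f"({label}, {date})"


print(harvard_in_text_with_fallbacks(author="Scribbr"))
print(harvard_in_text_with_fallbacks(title="Divest"))
```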

Harvard referencing uses an author–date system. Sources are cited by the author’s last name and the publication year in brackets. Each Harvard in-text citation corresponds to an entry in the alphabetised reference list at the end of the paper.

Vancouver referencing uses a numerical system. Sources are cited by a number in parentheses or superscript. Each number corresponds to a full reference at the end of the paper.

| | Harvard style | Vancouver style |
|---|---|---|
| In-text citation | Each referencing style has different rules (Pears and Shields, 2019). | Each referencing style has different rules (1). |
| Reference list | Pears, R. and Shields, G. (2019) Cite them right: The essential referencing guide. 11th edn. London: MacMillan. | 1. Pears R, Shields G. Cite them right: The essential referencing guide. 11th ed. London: MacMillan; 2019. |
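
To make the numerical system concrete, here is a small Python sketch of how citation numbers might be assigned. It assumes the common Vancouver convention that sources are numbered in the order they are first cited and that a repeated source reuses its number; the function name `number_citations` and the source keys are hypothetical.

```python
def number_citations(cited_keys):
    """Map each source key to a Vancouver-style number, in order of first citation."""
    numbers = {}
    for key in cited_keys:
        if key not in numbers:
            # A new source takes the next number; repeats keep their original one
            numbers[key] = len(numbers) + 1
    return numbers


# Hypothetical keys standing in for sources cited through a paper
print(number_citations(["pears2019", "smith2014", "pears2019"]))
```

In an author–date style such as Harvard, no such numbering step is needed because the citation itself carries the author and year.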

A Harvard in-text citation should appear in brackets every time you quote, paraphrase, or refer to information from a source.

The citation can appear immediately after the quotation or paraphrase, or at the end of the sentence. If you’re quoting, place the citation outside of the quotation marks but before any other punctuation like a comma or full stop.

In Harvard referencing, up to three author names are included in an in-text citation or reference list entry. When there are four or more authors, include only the first, followed by ‘et al.’

| | In-text citation | Reference list |
|---|---|---|
| 1 author | (Smith, 2014) | Smith, T. (2014) … |
| 2 authors | (Smith and Jones, 2014) | Smith, T. and Jones, F. (2014) … |
| 3 authors | (Smith, Jones and Davies, 2014) | Smith, T., Jones, F. and Davies, S. (2014) … |
| 4+ authors | (Smith et al., 2014) | Smith, T. et al. (2014) … |

Though the terms are sometimes used interchangeably, there is a difference in meaning:

- A reference list only includes sources cited in the text – every entry corresponds to an in-text citation.

- A bibliography also includes other sources which were consulted during the research but not cited.

Cite this Scribbr article

Caulfield, J. (2023, September 15). A Quick Guide to Harvard Referencing | Citation Examples. Scribbr. Retrieved 24 June 2024, from https://www.scribbr.co.uk/referencing/harvard-style/

How to Cite Research Paper – All Formats and Examples

Research Paper Citation

Research paper citation refers to the act of acknowledging and referencing a previously published work in a scholarly or academic paper. When citing sources, researchers provide information that allows readers to locate the original source, validate the claims or arguments made in the paper, and give credit to the original author(s) for their work.

The citation may include the author’s name, title of the publication, year of publication, publisher, and other relevant details that allow readers to trace the source of the information. Proper citation is a crucial component of academic writing, as it helps to ensure accuracy, credibility, and transparency in research.

How to Cite Research Paper

Several formats are used to cite a research paper. Follow the guide below for each citation style:

MLA Format

Book

Last Name, First Name. Title of Book. Publisher, Year of Publication.

Example: Smith, John. The History of the World. Penguin Press, 2010.

Journal Article

Last Name, First Name. “Title of Article.” Title of Journal, vol. Volume Number, no. Issue Number, Year of Publication, pp. Page Numbers.

Example: Johnson, Emma. “The Effects of Climate Change on Agriculture.” Environmental Science Journal, vol. 10, no. 2, 2019, pp. 45-59.

Research Paper

Last Name, First Name. “Title of Paper.” Conference Name, Location, Date of Conference.

Example: Garcia, Maria. “The Importance of Early Childhood Education.” International Conference on Education, Paris, 5-7 June 2018.

Website

Author’s Last Name, First Name. “Title of Webpage.” Website Title, Publisher, Date of Publication, URL.

Example: Smith, John. “The Benefits of Exercise.” Healthline, Healthline Media, 1 March 2022, https://www.healthline.com/health/benefits-of-exercise.

News Article

Last Name, First Name. “Title of Article.” Name of Newspaper, Date of Publication, URL.

Example: Robinson, Sarah. “Biden Announces New Climate Change Policies.” The New York Times, 22 Jan. 2021, https://www.nytimes.com/2021/01/22/climate/biden-climate-change-policies.html.
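
The book and journal article templates above, which follow MLA conventions, can be expressed as simple string templates. The sketch below is illustrative only: the function names `mla_book` and `mla_journal_article` are hypothetical, single-author sources are assumed, and italics are not represented in plain strings.

```python
def mla_book(last, first, title, publisher, year):
    """Last Name, First Name. Title of Book. Publisher, Year of Publication."""
    return f"{last}, {first}. {title}. {publisher}, {year}."


def mla_journal_article(last, first, article, journal, volume, issue, year, pages):
    """Last Name, First Name. “Title.” Journal, vol. V, no. N, Year, pp. Pages."""
    return (
        f"{last}, {first}. “{article}.” {journal}, "
        f"vol. {volume}, no. {issue}, {year}, pp. {pages}."
    )


print(mla_book("Smith", "John", "The History of the World", "Penguin Press", 2010))
print(mla_journal_article(
    "Johnson", "Emma", "The Effects of Climate Change on Agriculture",
    "Environmental Science Journal", 10, 2, 2019, "45-59",
))
```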

APA Format

Book

Author, A. A. (Year of publication). Title of book. Publisher.

Example: Smith, J. (2010). The history of the world. Penguin Press.

Journal Article

Author, A. A., Author, B. B., & Author, C. C. (Year of publication). Title of article. Title of Journal, volume number(issue number), page range.

Example: Johnson, E., Smith, K., & Lee, M. (2019). The effects of climate change on agriculture. Environmental Science Journal, 10(2), 45-59.

Research Paper

Author, A. A. (Year of publication). Title of paper. In Editor First Initial. Last Name (Ed.), Title of Conference Proceedings (page numbers). Publisher.

Example: Garcia, M. (2018). The importance of early childhood education. In J. Smith (Ed.), Proceedings from the International Conference on Education (pp. 60-75). Springer.

Website

Author, A. A. (Year, Month Day of publication). Title of webpage. Website name. URL

Example: Smith, J. (2022, March 1). The benefits of exercise. Healthline. https://www.healthline.com/health/benefits-of-exercise

News Article

Author, A. A. (Year, Month Day of publication). Title of article. Newspaper name. URL

Example: Robinson, S. (2021, January 22). Biden announces new climate change policies. The New York Times. https://www.nytimes.com/2021/01/22/climate/biden-climate-change-policies.html
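
The author-list and journal article templates above, which follow APA conventions, can be sketched in Python. The function names `apa_authors` and `apa_journal_article` are hypothetical. The sketch assumes authors are already given as "Surname, I." strings and does not handle very long author lists, for which APA has additional rules.

```python
def apa_authors(authors):
    """Join "Surname, I." strings with commas and an ampersand before the last one."""
    if not authors:
        raise ValueError("at least one author is required")
    if len(authors) == 1:
        return authors[0]
    return ", ".join(authors[:-1]) + ", & " + authors[-1]


def apa_journal_article(authors, year, title, journal, volume, issue, pages):
    """Authors (Year). Title of article. Title of Journal, volume(issue), pages."""
    return f"{apa_authors(authors)} ({year}). {title}. {journal}, {volume}({issue}), {pages}."


print(apa_journal_article(
    ["Johnson, E.", "Smith, K.", "Lee, M."], 2019,
    "The effects of climate change on agriculture",
    "Environmental Science Journal", 10, 2, "45-59",
))
```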

Chicago/Turabian style

Please note that there are two main variations of the Chicago style: the author-date system and the notes and bibliography system. Formats and examples for both systems are given below.

BOOKS:

Author-Date system:

- In-text citation: (Author Last Name Year, Page Number)

- Reference list: Author Last Name, First Name. Year. Title of Book. Place of publication: Publisher.

- In-text citation: (Smith 2005, 28)

- Reference list: Smith, John. 2005. The History of America. New York: Penguin Press.

Notes and Bibliography system:

- Footnote/Endnote citation: Author First Name Last Name, Title of Book (Place of publication: Publisher, Year), Page Number.

- Bibliography citation: Author Last Name, First Name. Title of Book. Place of publication: Publisher, Year.

- Footnote/Endnote citation: John Smith, The History of America (New York: Penguin Press, 2005), 28.

- Bibliography citation: Smith, John. The History of America. New York: Penguin Press, 2005.
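
The difference between the two Chicago systems for a book is mostly a matter of where the year goes. The sketch below encodes the four book templates above as Python functions; all function names are hypothetical and a single-author book is assumed.

```python
def chicago_author_date_in_text(last, year, page):
    """(Author Last Name Year, Page Number)"""
    return f"({last} {year}, {page})"


def chicago_author_date_reference(last, first, year, title, place, publisher):
    """Author-date reference list entry: the year follows the author."""
    return f"{last}, {first}. {year}. {title}. {place}: {publisher}."


def chicago_footnote(first, last, title, place, publisher, year, page):
    """Notes and bibliography footnote: publication details in parentheses."""
    return f"{first} {last}, {title} ({place}: {publisher}, {year}), {page}."


def chicago_bibliography(last, first, title, place, publisher, year):
    """Notes and bibliography entry: the year comes last."""
    return f"{last}, {first}. {title}. {place}: {publisher}, {year}."


print(chicago_author_date_in_text("Smith", 2005, 28))
print(chicago_footnote("John", "Smith", "The History of America", "New York", "Penguin Press", 2005, 28))
```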

JOURNAL ARTICLES:

- Reference list: Author Last Name, First Name. Year. “Article Title.” Journal Title Volume Number (Issue Number): Page Range.

- In-text citation: (Johnson 2010, 45)

- Reference list: Johnson, Mary. 2010. “The Impact of Social Media on Society.” Journal of Communication 60(2): 39-56.

- Footnote/Endnote citation: Author First Name Last Name, “Article Title,” Journal Title Volume Number, Issue Number (Year): Page Range.

- Bibliography citation: Author Last Name, First Name. “Article Title.” Journal Title Volume Number, Issue Number (Year): Page Range.

- Footnote/Endnote citation: Mary Johnson, “The Impact of Social Media on Society,” Journal of Communication 60, no. 2 (2010): 39-56.

- Bibliography citation: Johnson, Mary. “The Impact of Social Media on Society.” Journal of Communication 60, no. 2 (2010): 39-56.

RESEARCH PAPERS:

- Reference list: Author Last Name, First Name. Year. “Title of Paper.” Conference Proceedings Title, Location, Date. Publisher, Page Range.

- In-text citation: (Jones 2015, 12)

- Reference list: Jones, David. 2015. “The Effects of Climate Change on Agriculture.” Proceedings of the International Conference on Climate Change, Paris, France, June 1-3, 2015. Springer, 10-20.

- Footnote/Endnote citation: Author First Name Last Name, “Title of Paper,” Conference Proceedings Title, Location, Date (Place of publication: Publisher, Year), Page Range.

- Bibliography citation: Author Last Name, First Name. “Title of Paper.” Conference Proceedings Title, Location, Date. Place of publication: Publisher, Year.

- Footnote/Endnote citation: David Jones, “The Effects of Climate Change on Agriculture,” Proceedings of the International Conference on Climate Change, Paris, France, June 1-3, 2015 (New York: Springer, 2015), 10-20.

- Bibliography citation: Jones, David. “The Effects of Climate Change on Agriculture.” Proceedings of the International Conference on Climate Change, Paris, France, June 1-3, 2015. New York: Springer, 2015.

WEBSITES:

- In-text citation: (Author Last Name Year)

- Reference list: Author Last Name, First Name. Year. “Title of Webpage.” Website Name. URL.

- In-text citation: (Smith 2018)

- Reference list: Smith, John. 2018. “The Importance of Recycling.” Environmental News Network. https://www.enn.com/articles/54374-the-importance-of-recycling.

- Footnote/Endnote citation: Author First Name Last Name, “Title of Webpage,” Website Name, URL (accessed Date).

- Bibliography citation: Author Last Name, First Name. “Title of Webpage.” Website Name. URL (accessed Date).

- Footnote/Endnote citation: John Smith, “The Importance of Recycling,” Environmental News Network, https://www.enn.com/articles/54374-the-importance-of-recycling (accessed April 8, 2023).

- Bibliography citation: Smith, John. “The Importance of Recycling.” Environmental News Network. https://www.enn.com/articles/54374-the-importance-of-recycling (accessed April 8, 2023).

NEWS ARTICLES:

- Reference list: Author Last Name, First Name. Year. “Title of Article.” Name of Newspaper, Month Day.

- In-text citation: (Johnson 2022)

- Reference list: Johnson, Mary. 2022. “New Study Finds Link Between Coffee and Longevity.” The New York Times, January 15.

- Footnote/Endnote citation: Author First Name Last Name, “Title of Article,” Name of Newspaper (City), Month Day, Year.

- Bibliography citation: Author Last Name, First Name. “Title of Article.” Name of Newspaper (City), Month Day, Year.

- Footnote/Endnote citation: Mary Johnson, “New Study Finds Link Between Coffee and Longevity,” The New York Times (New York), January 15, 2022.

- Bibliography citation: Johnson, Mary. “New Study Finds Link Between Coffee and Longevity.” The New York Times (New York), January 15, 2022.

Harvard referencing style

Book:

Format: Author’s Last name, First initial. (Year of publication). Title of book. Publisher.

Example: Smith, J. (2008). The Art of War. Random House.

Journal article:

Format: Author’s Last name, First initial. (Year of publication). Title of article. Title of journal, volume number(issue number), page range.

Example: Brown, M. (2012). The impact of social media on business communication. Harvard Business Review, 90(12), 85-92.

Research paper:

Format: Author’s Last name, First initial. (Year of publication). Title of paper. In Editor’s First initial. Last name (Ed.), Title of book (page range). Publisher.

Example: Johnson, R. (2015). The effects of climate change on agriculture. In S. Lee (Ed.), Climate Change and Sustainable Development (pp. 45-62). Springer.

Website:

Format: Author’s Last name, First initial. (Year, Month Day of publication). Title of page. Website name. URL.

Example: Smith, J. (2017, May 23). The history of the internet. Encyclopedia Britannica. https://www.britannica.com/topic/history-of-the-internet

News article:

Format: Author’s Last name, First initial. (Year, Month Day of publication). Title of article. Title of newspaper, page number (if applicable).

Example: Thompson, E. (2022, January 5). New study finds coffee may lower risk of dementia. The New York Times, A1.

IEEE Format

Author(s). (Year of Publication). Title of Book. Publisher.

Smith, J. K. (2015). The Power of Habit: Why We Do What We Do in Life and Business. Random House.

Journal Article:

Author(s). (Year of Publication). Title of Article. Title of Journal, Volume Number (Issue Number), page numbers.

Johnson, T. J., & Kaye, B. K. (2016). Interactivity and the Future of Journalism. Journalism Studies, 17(2), 228-246.

Author(s). (Year of Publication). Title of Paper. Paper presented at Conference Name, Location.

Jones, L. K., & Brown, M. A. (2018). The Role of Social Media in Political Campaigns. Paper presented at the 2018 International Conference on Social Media and Society, Copenhagen, Denmark.

- Website: Author(s) or Organization Name. (Year of Publication or Last Update). Title of Webpage. Website Name. URL.

Example: National Aeronautics and Space Administration. (2019, August 29). NASA’s Mission to Mars. NASA. https://www.nasa.gov/topics/journeytomars/index.html

- News Article: Author(s). (Year of Publication). Title of Article. Name of News Source. URL.

Example: Johnson, M. (2022, February 16). Climate Change: Is it Too Late to Save the Planet? CNN. https://www.cnn.com/2022/02/16/world/climate-change-planet-scn/index.html

Vancouver Style

In-text citation: Use superscript numbers to cite sources in the text, e.g., “The study conducted by Smith and Johnson^1 found that…”.

Reference list citation: Format: Author(s). Title of book. Edition if any. Place of publication: Publisher; Year of publication.

Example: Smith J, Johnson L. Introduction to Molecular Biology. 2nd ed. New York: Wiley-Blackwell; 2015.

In-text citation: Use superscript numbers to cite sources in the text, e.g., “Several studies have reported that^1,2,3…”.

Reference list citation: Format: Author(s). Title of article. Abbreviated name of journal. Year of publication; Volume number (Issue number): Page range.

Example: Jones S, Patel K, Smith J. The effects of exercise on cardiovascular health. J Cardiol. 2018; 25(2): 78-84.
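
The journal article template above can be written as a one-line string template. This Python sketch is illustrative only: the function name `vancouver_journal_article` is hypothetical, and authors are assumed to be pre-formatted as "Surname Initials" strings.

```python
def vancouver_journal_article(authors, title, journal_abbrev, year, volume, issue, pages):
    """Author(s). Title. Abbreviated journal. Year; Volume(Issue): Page range."""
    author_list = ", ".join(authors)
    return f"{author_list}. {title}. {journal_abbrev}. {year}; {volume}({issue}): {pages}."


print(vancouver_journal_article(
    ["Jones S", "Patel K", "Smith J"],
    "The effects of exercise on cardiovascular health",
    "J Cardiol", 2018, 25, 2, "78-84",
))
```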

In-text citation: Use superscript numbers to cite sources in the text, e.g., “Previous research has shown that^1,2,3…”.

Reference list citation: Format: Author(s). Title of paper. In: Editor(s). Title of the conference proceedings. Place of publication: Publisher; Year of publication. Page range.

Example: Johnson L, Smith J. The role of stem cells in tissue regeneration. In: Patel S, ed. Proceedings of the 5th International Conference on Regenerative Medicine. London: Academic Press; 2016. p. 68-73.

In-text citation: Use superscript numbers to cite sources in the text, e.g., “According to the World Health Organization^1…”.

Reference list citation: Format: Author(s). Title of webpage. Name of website. URL [Accessed Date].

Example: World Health Organization. Coronavirus disease (COVID-19) advice for the public. World Health Organization. https://www.who.int/emergencies/disease/novel-coronavirus-2019/advice-for-public [Accessed 3 March 2023].

In-text citation: Use superscript numbers to cite sources in the text, e.g., “According to the New York Times^1…”.

Reference list citation: Format: Author(s). Title of article. Name of newspaper. Year Month Day; Section (if any): Page number.

Example: Jones S. Study shows that sleep is essential for good health. The New York Times. 2022 Jan 12; Health: A8.

ACS Style

Books:

Author(s). Title of Book. Edition Number (if it is not the first edition). Publisher: Place of publication, Year of publication.

Example: Smith, J. Chemistry of Natural Products. 3rd ed.; CRC Press: Boca Raton, FL, 2015.

Journal articles:

Author(s). Article Title. Journal Name Year, Volume, Inclusive Pagination.

Example: Garcia, A. M.; Jones, B. A.; Smith, J. R. Selective Synthesis of Alkenes from Alkynes via Catalytic Hydrogenation. J. Am. Chem. Soc. 2019, 141, 10754-10759.

Research papers:

Author(s). Title of Paper. Journal Name Year, Volume, Inclusive Pagination.

Example: Brown, H. D.; Jackson, C. D.; Patel, S. D. A New Approach to Photovoltaic Solar Cells. J. Mater. Chem. 2018, 26, 134-142.

Author(s) (if available). Title of Webpage. Name of Website. URL (accessed Month Day, Year).

Example: National Institutes of Health. Heart Disease and Stroke. National Heart, Lung, and Blood Institute. https://www.nhlbi.nih.gov/health-topics/heart-disease-and-stroke (accessed April 7, 2023).

News articles:

Author(s). Title of Article. Name of News Publication. Date of Publication. URL (accessed Month Day, Year).

Example: Friedman, T. L. The World is Flat. New York Times. April 7, 2023. https://www.nytimes.com/2023/04/07/opinion/world-flat-globalization.html (accessed April 7, 2023).

In AMA Style Format, the citation for a book should include the following information, in this order:

- Author(s)

- Title of book (in italics)

- Edition (if applicable)

- Place of publication

- Publisher

- Year of publication

Lodish H, Berk A, Zipursky SL, et al. Molecular Cell Biology. 4th ed. New York, NY: W. H. Freeman; 2000.

In AMA Style Format, the citation for a journal article should include the following information, in this order:

- Author(s)

- Title of article

- Abbreviated title of journal (in italics)

- Year of publication; volume number(issue number):page numbers.

Chen H, Huang Y, Li Y, et al. Effects of mindfulness-based stress reduction on depression in adolescents and young adults: a systematic review and meta-analysis. JAMA Netw Open. 2020;3(6):e207081. doi:10.1001/jamanetworkopen.2020.7081
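As a rough illustration of how the journal-article elements fit together, here is a small sketch that joins them in the order used by the example entry above. The function name `format_ama_journal` and its parameters are hypothetical, and the author string is taken as given (the sketch does not decide when to shorten a list to "et al.").

```python
def format_ama_journal(authors, title, journal_abbrev, year, volume, issue, pages, doi=None):
    """Join the elements as: Authors. Title. Journal. Year;Volume(Issue):Pages. doi:..."""
    # An author string ending in "et al." already carries its final period.
    author_part = authors if authors.endswith(".") else authors + "."
    citation = f"{author_part} {title}. {journal_abbrev}. {year};{volume}({issue}):{pages}."
    # The DOI is optional; it is appended only when one is supplied.
    if doi:
        citation += f" doi:{doi}"
    return citation


print(format_ama_journal(
    "Chen H, Huang Y, Li Y, et al.",
    "Effects of mindfulness-based stress reduction on depression in adolescents "
    "and young adults: a systematic review and meta-analysis",
    "JAMA Netw Open",
    2020,
    3,
    6,
    "e207081",
    doi="10.1001/jamanetworkopen.2020.7081",
))
```

The output is plain text, so the italics that the list above calls for on the journal title would still need to be applied in the final document.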

In AMA Style Format, the citation for a research paper should include the following information, in this order:

- Author(s)

- Title of paper

- Name of journal or conference proceeding (in italics)

- Volume number(issue number):page numbers.

Bredenoord AL, Kroes HY, Cuppen E, Parker M, van Delden JJ. Disclosure of individual genetic data to research participants: the debate reconsidered. Trends Genet. 2011;27(2):41-47. doi:10.1016/j.tig.2010.11.004

In AMA Style Format, the citation for a website should include the following information, in this order:

- Author(s)

- Title of web page or article

- Name of website (in italics)

- Date of publication or last update (if available)

- URL (website address)

- Date of access (month day, year)

Centers for Disease Control and Prevention. How to protect yourself and others. CDC. Published February 11, 2022. Accessed February 14, 2022. https://www.cdc.gov/coronavirus/2019-ncov/prevent-getting-sick/prevention.html

In AMA Style Format, the citation for a news article should include the following information, in this order:

- Author(s)

- Title of article

- Name of newspaper or news website (in italics)

- Date of publication

- Date of access

- URL (website address)

Gorman J. Scientists use stem cells from frogs to build first living robots. The New York Times. January 13, 2020. Accessed January 14, 2020. https://www.nytimes.com/2020/01/13/science/living-robots-xenobots.html

Bluebook Format

Book

One author: Daniel J. Solove, The Future of Reputation: Gossip, Rumor, and Privacy on the Internet (Yale University Press 2007).

Two or more authors: Martha Nussbaum and Saul Levmore, eds., The Offensive Internet: Speech, Privacy, and Reputation (Harvard University Press 2010).

Journal article

One author: Daniel J. Solove, “A Taxonomy of Privacy,” University of Pennsylvania Law Review 154, no. 3 (January 2006): 477-560.

Two or more authors: Ethan Katsh and Andrea Schneider, “The Emergence of Online Dispute Resolution,” Journal of Dispute Resolution 2003, no. 1 (2003): 7-19.

Research paper

One author: Daniel J. Solove, “A Taxonomy of Privacy,” GWU Law School Public Law Research Paper No. 113, 2005.

Two or more authors: Ethan Katsh and Andrea Schneider, “The Emergence of Online Dispute Resolution,” Cyberlaw Research Paper Series Paper No. 00-5, 2000.

Website

Electronic Frontier Foundation, “Surveillance Self-Defense,” accessed April 8, 2023, https://ssd.eff.org/.

News article

One author: Mark Sherman, “Court Deals Major Blow to Net Neutrality Rules,” ABC News, January 14, 2014, https://abcnews.go.com/Politics/wireStory/court-deals-major-blow-net-neutrality-rules-21586820.

Two or more authors: Siobhan Hughes and Brent Kendall, “AT&T Wins Approval to Buy Time Warner,” Wall Street Journal, June 12, 2018, https://www.wsj.com/articles/at-t-wins-approval-to-buy-time-warner-1528847249.

In-Text Citation: (Author’s last name Year of Publication: Page Number)

Example: (Smith 2010: 35)

Reference List Citation: Author’s last name First Initial. Title of Book. Edition. Place of publication: Publisher; Year of publication.

Example: Smith J. Biology: A Textbook. 2nd ed. New York: Oxford University Press; 2010.
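The in-text pattern above is simple enough to express as a one-line template. This is only a sketch; the helper name `in_text_citation` is hypothetical, and the locator is passed through unchanged so that it can be a page number or a paragraph reference.

```python
def in_text_citation(author, year, locator):
    """Return an in-text citation of the form (Author Year: Locator)."""
    # The locator may be a page number (35) or a string such as "para. 2".
    return f"({author} {year}: {locator})"


print(in_text_citation("Smith", 2010, 35))
```

For the book example above, this prints `(Smith 2010: 35)`.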

Example: (Johnson 2014: 27)

Reference List Citation: Author’s last name First Initial. Title of Article. Abbreviated Title of Journal. Year of publication;Volume(Issue):Page Numbers.

Example: Johnson S. The role of dopamine in addiction. J Neurosci. 2014;34(8):2262-2272.

Example: (Brown 2018: 10)

Reference List Citation: Author’s last name First Initial. Title of Paper. Paper presented at: Name of Conference; Date of Conference; Place of Conference.

Example: Brown R. The impact of social media on mental health. Paper presented at: Annual Meeting of the American Psychological Association; August 2018; San Francisco, CA.

Example: (World Health Organization 2020: para. 2)

Reference List Citation: Author’s last name First Initial. Title of Webpage. Name of Website. URL. Published date. Accessed date.

Example: World Health Organization. Coronavirus disease (COVID-19) pandemic. WHO website. https://www.who.int/emergencies/disease-coronavirus-2019. Updated August 17, 2020. Accessed September 5, 2021.

Example: (Smith 2019: para. 5)

Reference List Citation: Author’s last name First Initial. Title of Article. Title of Newspaper or Magazine. Year of publication; Month Day:Page Numbers.

Example: Smith K. New study finds link between exercise and mental health. The New York Times. 2019;May 20: A6.

Purpose of Research Paper Citation

The purpose of citing sources in a research paper is to give credit to the original authors and acknowledge their contribution to your work. Citations also support the validity and reliability of your research by showing that you have consulted credible, authoritative sources, and they let readers locate those sources and verify the accuracy of your claims. Citing sources is also essential for avoiding plagiarism, which is the act of presenting someone else’s work as your own. Finally, proper citation shows that you have conducted a thorough literature review and have used the existing research to inform your own work.

Advantages of Research Paper Citation

There are several advantages of research paper citation, including:

- Giving credit: By citing the works of other researchers in your field, you are acknowledging their contribution and giving credit where it is due.

- Strengthening your argument: Citing relevant and reliable sources in your research paper can strengthen your argument and increase its credibility. It shows that you have done your due diligence and considered various perspectives before drawing your conclusions.

- Demonstrating familiarity with the literature: By citing various sources, you are demonstrating your familiarity with the existing literature in your field. This is important as it shows that you are well-informed about the topic and have done a thorough review of the available research.

- Providing a roadmap for further research: By citing relevant sources, you are providing a roadmap for further research on the topic. This can be helpful for future researchers who are interested in exploring the same or related issues.

- Building your own reputation: By citing the works of established researchers in your field, you can build your own reputation as a knowledgeable and informed scholar. This can be particularly helpful if you are early in your career and looking to establish yourself as an expert in your field.

About the author

Muhammad Hassan

Researcher, Academic Writer, Web developer


How to Cite a Research Paper

Last Updated: March 29, 2024

This article was reviewed by Gerald Posner and by wikiHow staff writer, Jennifer Mueller, JD. Gerald Posner is an Author & Journalist based in Miami, Florida. With over 35 years of experience, he specializes in investigative journalism, nonfiction books, and editorials. He holds a law degree from UC College of the Law, San Francisco, and a BA in Political Science from the University of California-Berkeley. He’s the author of thirteen books, including several New York Times bestsellers, the winner of the Florida Book Award for General Nonfiction, and has been a finalist for the Pulitzer Prize in History. He was also shortlisted for the Best Business Book of 2020 by the Society for Advancing Business Editing and Writing. There are 8 references cited in this article, which can be found at the bottom of the page. This article has been fact-checked, ensuring the accuracy of any cited facts and confirming the authority of its sources. This article has been viewed 417,300 times.

When writing a paper for a research project, you may need to cite a research paper you used as a reference. The basic information included in your citation will be the same across all styles. However, the format in which that information is presented is somewhat different depending on whether you're using American Psychological Association (APA), Modern Language Association (MLA), Chicago, or American Medical Association (AMA) style.

Referencing a Research Paper

- In APA style, cite the paper: Last Name, First Initial. (Year). Title. Publisher.

- In Chicago style, cite the paper: Last Name, First Name. “Title.” Publisher, Year.

- In MLA style, cite the paper: Last Name, First Name. “Title.” Publisher. Year.
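The three patterns above use the same pieces of information and differ only in order and punctuation. The sketch below makes that concrete; the `Paper` class, the function names, and the sample values ("Jane Doe", "Sample Title", "Example University") are all hypothetical placeholders, not a real source.

```python
from dataclasses import dataclass


@dataclass
class Paper:
    last_name: str
    first_name: str
    year: int
    title: str
    publisher: str


def cite_apa(p):
    # APA: Last Name, First Initial. (Year). Title. Publisher.
    return f"{p.last_name}, {p.first_name[0]}. ({p.year}). {p.title}. {p.publisher}."


def cite_chicago(p):
    # Chicago: Last Name, First Name. “Title.” Publisher, Year.
    return f"{p.last_name}, {p.first_name}. “{p.title}.” {p.publisher}, {p.year}."


def cite_mla(p):
    # MLA: Last Name, First Name. “Title.” Publisher. Year.
    return f"{p.last_name}, {p.first_name}. “{p.title}.” {p.publisher}. {p.year}."


# Hypothetical sample record, used only to show the three layouts side by side.
sample = Paper("Doe", "Jane", 2020, "Sample Title", "Example University")
for cite in (cite_apa, cite_chicago, cite_mla):
    print(cite(sample))
```

Keeping the source details in one record and formatting them per style means a change of required style does not require re-entering the data.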


- For example: "Kringle, K., & Frost, J."

- For example: "Kringle, K., & Frost, J. (2012)."

- If the date, or any other information, is not available, use the guide at https://blog.apastyle.org/apastyle/2012/05/missing-pieces.html.

- For example: "Kringle, K., & Frost, J. (2012). Red noses, warm hearts: The glowing phenomenon among North Pole reindeer."

- If you found the research paper in a database maintained by a university, corporation, or other organization, include any index number assigned to the paper in parentheses after the title. For example: "Kringle, K., & Frost, J. (2012). Red noses, warm hearts: The glowing phenomenon among North Pole reindeer. (Report No. 1234)."

- For example: "Kringle, K., & Frost, J. (2012). Red noses, warm hearts: The glowing phenomenon among North Pole reindeer. (Report No. 1234). Retrieved from Alaska University Library Archives, December 24, 2017."

- For example: "(Kringle & Frost, 2012)."