
Multiple Intelligences: What Does the Research Say?

Proposed by Howard Gardner in 1983, the theory of multiple intelligences has revolutionized how we understand intelligence. Learn more about the research behind his theory.

Many educators have had the experience of not being able to reach some students until presenting the information in a completely different way or providing new options for student expression. Perhaps it was a student who struggled with writing until the teacher provided the option to create a graphic story, which blossomed into a beautiful and complex narrative. Or maybe it was a student who just couldn't seem to grasp fractions, until he created them by separating oranges into slices.

Because of these kinds of experiences, the theory of multiple intelligences resonates with many educators. It supports what we all know to be true: A one-size-fits-all approach to education will invariably leave some students behind. However, the theory is also often misunderstood, which can lead to it being used interchangeably with learning styles or being applied in ways that can limit student potential. While the theory of multiple intelligences is a powerful way to think about learning, it’s also important to understand the research that supports it.

Howard Gardner's Eight Intelligences

The theory of multiple intelligences challenges the idea of a single IQ, where human beings have one central "computer" where intelligence is housed. Howard Gardner, the Harvard professor who originally proposed the theory, says that there are multiple types of human intelligence, each representing different ways of processing information:

- Verbal-linguistic intelligence refers to an individual's ability to analyze information and produce work that involves oral and written language, such as speeches, books, and emails.

- Logical-mathematical intelligence describes the ability to develop equations and proofs, make calculations, and solve abstract problems.

- Visual-spatial intelligence allows people to comprehend maps and other types of graphical information.

- Musical intelligence enables individuals to produce and make meaning of different types of sound.

- Naturalistic intelligence refers to the ability to identify and distinguish among different types of plants, animals, and weather formations found in the natural world.

- Bodily-kinesthetic intelligence entails using one's own body to create products or solve problems.

- Interpersonal intelligence reflects an ability to recognize and understand other people's moods, desires, motivations, and intentions.

- Intrapersonal intelligence refers to people's ability to recognize and assess those same characteristics within themselves.

The Difference Between Multiple Intelligences and Learning Styles

One common misconception about multiple intelligences is that it means the same thing as learning styles. Instead, multiple intelligences represent different intellectual abilities. Learning styles, according to Howard Gardner, are the ways in which an individual approaches a range of tasks. They have been categorized in a number of different ways -- visual, auditory, and kinesthetic; impulsive and reflective; right brain and left brain; etc. Gardner argues that the idea of learning styles does not contain clear criteria for how one would define a learning style, where the style comes from, and how it can be recognized and assessed. He phrases the idea of learning styles as "a hypothesis of how an individual approaches a range of materials."

Everyone has all eight of the intelligences listed above at varying levels of aptitude -- perhaps even more that are still undiscovered -- and not all learning experiences have to relate to a person's strongest area of intelligence. For example, if someone is skilled at learning new languages, it doesn’t necessarily mean that they prefer to learn through lectures. Someone with high visual-spatial intelligence, such as a skilled painter, may still benefit from using rhymes to remember information. Learning is fluid and complex, and it’s important to avoid labeling students as one type of learner. As Gardner states, "When one has a thorough understanding of a topic, one can typically think of it in several ways."

What Multiple Intelligences Theory Can Teach Us

While additional research is still needed to determine the best measures for assessing and supporting a range of intelligences in schools, the theory has provided opportunities to broaden definitions of intelligence. For educators, it is useful to think about the different ways that information can be presented. However, it is critical not to classify students either as specific types of learners or as having an innate or fixed type of intelligence.

Practices Supported by Research

Having an understanding of different teaching approaches from which we all can learn, as well as a toolbox with a variety of ways to present content to students, is valuable for increasing the accessibility of learning experiences for all students. To develop this toolbox, it is especially important to gather ongoing information about student strengths and challenges as well as their developing interests and activities they dislike. Providing different contexts for students and engaging a variety of their senses -- for example, learning about fractions through musical notes, flower petals, and poetic meter -- is supported by research. Specifically:

- Providing students with multiple ways to access content improves learning (Hattie, 2011).

- Providing students with multiple ways to demonstrate knowledge and skills increases engagement and learning, and provides teachers with more accurate understanding of students' knowledge and skills (Darling-Hammond, 2010).

- Instruction should be informed as much as possible by detailed knowledge about students' specific strengths, needs, and areas for growth (Tomlinson, 2014).

As our insatiable curiosity about the learning process persists and studies continue to evolve, scientific research may emerge that further elaborates on multiple intelligences, learning styles, or perhaps another theory. To learn more about the scientific research on student learning, visit our Brain-Based Learning topic page.

Darling-Hammond, L. (2010). Performance Counts: Assessment Systems that Support High-Quality Learning. Washington, DC: Council of Chief State School Officers.

Hattie, J. (2011). Visible Learning for Teachers: Maximizing Impact on Learning. New York, NY: Routledge.

Tomlinson, C. A. (2014). The Differentiated Classroom: Responding to the Needs of All Learners. Alexandria, VA: ASCD.

Resources From Edutopia

- Are Learning Styles Real - and Useful?, by Todd Finley (2015)

- Assistive Technology: Resource Roundup, by Edutopia Staff (2014)

- How Learning Profiles Can Strengthen Your Teaching, by John McCarthy (2014)

- An Interview with the Father of Multiple Intelligences, by Owen Edwards (2009)

Additional Resources on the Web

- Howard Gardner’s website

- Howard Gardner: ‘Multiple intelligences’ are not ‘learning styles’ (The Washington Post, 2013)

- Books published by Howard Gardner

- Multiple Intelligences Resources (ASCD)

- Project Zero (Harvard Graduate School of Education)

- Multiple Intelligences Research Study (MIRS)

- Multiple Intelligences Lesson Plan (Discovery Education)

- Multiple Intelligences Resources (New Horizons for Learning [NHFL], Johns Hopkins University)


Howard Gardner's Theory of Multiple Intelligences

Many of us are familiar with three broad categories in which people learn: visual learning, auditory learning, and kinesthetic learning. Beyond these three categories, many theories of and approaches toward human learning potential have been established. Among them is the theory of multiple intelligences developed by Howard Gardner, Ph.D., John H. and Elisabeth A. Hobbs Research Professor of Cognition and Education at the Harvard Graduate School of Education. Gardner’s early work in psychology and later in human cognition and human potential led him to develop the initial seven intelligences. Today the list includes nine intelligences, and others may eventually be added.

Gardner’s Multiple Intelligences Summarized

- Verbal-linguistic intelligence (well-developed verbal skills and sensitivity to the sounds, meanings and rhythms of words)

- Logical-mathematical intelligence (ability to think conceptually and abstractly, and capacity to discern logical and numerical patterns)

- Spatial-visual intelligence (capacity to think in images and pictures, to visualize accurately and abstractly)

- Bodily-kinesthetic intelligence (ability to control one’s body movements and to handle objects skillfully)

- Musical intelligence (ability to produce and appreciate rhythm, pitch and timbre)

- Interpersonal intelligence (capacity to detect and respond appropriately to the moods, motivations and desires of others)

- Intrapersonal intelligence (capacity to be self-aware and in tune with inner feelings, values, beliefs and thinking processes)

- Naturalist intelligence (ability to recognize and categorize plants, animals and other objects in nature)

- Existential intelligence (sensitivity and capacity to tackle deep questions about human existence such as “What is the meaning of life? Why do we die? How did we get here?”)

(“Tapping into Multiple Intelligences,” 2004)

Gardner (2013) asserts that regardless of which subject you teach—“the arts, the sciences, history, or math”—you should present learning materials in multiple ways. Gardner goes on to point out that anything you are deeply familiar with “you can describe and convey … in several ways. We teachers discover that sometimes our own mastery of a topic is tenuous, when a student asks us to convey the knowledge in another way and we are stumped.” Thus, conveying information in multiple ways not only helps students learn the material but also helps educators increase and reinforce our mastery of the content.


Gardner’s multiple intelligences theory can be used for curriculum development, planning instruction, selection of course activities, and related assessment strategies. Gardner points out that everyone has strengths and weaknesses in various intelligences, which is why educators should decide how best to present course material given the subject matter and the individual class of students. Indeed, instruction designed to help students learn material in multiple ways can build their confidence in developing areas in which they are not as strong. In the end, students’ learning is enhanced when instruction includes a range of meaningful and appropriate methods, activities, and assessments.

Multiple Intelligences are Not Learning Styles

While Gardner’s MI have been conflated with “learning styles,” Gardner himself denies that they are one and the same. The problem Gardner has expressed with the idea of “learning styles” is that the concept is ill defined and there “is not persuasive evidence that the learning style analysis produces more effective outcomes than a ‘one size fits all approach’” (as cited in Strauss, 2013). As Nancy Chick (n.d.), former Assistant Director of Vanderbilt University’s Center for Teaching, pointed out, “Despite the popularity of learning styles and inventories such as the VARK, it’s important to know that there is no evidence to support the idea that matching activities to one’s learning style improves learning.” One tip Gardner offers educators is to “pluralize your teaching,” in other words, to teach in multiple ways to help students learn, to “convey what it means to understand something well,” and to demonstrate your own understanding. He also recommends we “drop the term ‘styles.’ It will confuse others and it won’t help either you or your students” (as cited in Strauss, 2013).


Gardner himself asserts that educators should not follow one specific theory or educational innovation when designing instruction but should instead employ customized goals and values appropriate to teaching, subject matter, and student learning needs. Addressing the multiple intelligences can help instructors pluralize their instruction and methods of assessment and enrich student learning.

Chick, N. (n.d.). Learning styles. Retrieved from https://cft.vanderbilt.edu/guides-sub-pages/learning-styles-preferences/

Gardner, H. (2013). Frequently asked questions—Multiple intelligences and related educational topics. Retrieved from https://howardgardner01.files.wordpress.com/2012/06/faq_march2013.pdf

Strauss, V. (2013, Oct. 16). Howard Gardner: “Multiple intelligences” are not “learning styles.” The Washington Post. Retrieved from https://www.washingtonpost.com/news/answer-sheet/wp/2013/10/16/howard-gardner-multiple-intelligences-are-not-learning-styles/

Tapping into multiple intelligences. (2004). Retrieved from https://www.thirteen.org/edonline/concept2class/mi/index.html

Selected Resources

MI OASIS: The Official Authoritative Site of Multiple Intelligences. Access at https://www.multipleintelligencesoasis.org/

Suggested citation

Northern Illinois University Center for Innovative Teaching and Learning. (2020). Howard Gardner’s theory of multiple intelligences. In Instructional guide for university faculty and teaching assistants. Retrieved from https://www.niu.edu/citl/resources/guides/instructional-guide


BRIEF RESEARCH REPORT article

Discussion of teaching with multiple intelligences to corporate employees' learning achievement and learning motivation.

- 1 Fuzhou University of International Studies and Trade, Fuzhou, China

- 2 College of Business and Management, Xiamen Huaxia University, Fuzhou, China

- 3 School of Business, Fuzhou Institute of Technology, Fuzhou, China

- 4 Department of Business Administration, Social Enterprise Research Center, Fu Jen Catholic University, New Taipei City, Taiwan

- 5 Master Program in Entrepreneurial Management, National Yunlin University of Science and Technology, Yunlin, Taiwan

The development of multiple intelligences used to focus on kindergartens and elementary schools, because education experts and officials believed that students' multiple intelligences should be cultivated from childhood and then gradually extended to other levels. However, the framework of multiple intelligences need not be confined to kindergartens and elementary schools; it is also suitable for high schools, universities, and even graduate schools and in-service training. Taking employees in the Southern Taiwan Science Park as research subjects, a 16-week experimental teaching study (3 h per week, 48 h in total) was conducted with 314 employees in the high-tech industry. The results show that (1) teaching with multiple intelligences affects learning motivation, (2) teaching with multiple intelligences affects learning achievement, and (3) learning motivation has remarkably positive effects on learning achievement. The discussion based on these results is expected to help the high-tech industry make effective use of people's unique gifts when developing human resource potential.

Introduction

For a long time, the domestic education system focused on intellectual education. In the reflection that preceded education reform, it was found that over-emphasizing intellectual education caused many students to be sacrificed by the education system. Under the education reform of recent years, the situation has gradually improved. Because everyone possesses a distinct combination of intelligences and applies them in different ways, multiple methods should be used for evaluation. Such methods give exceptional children a model of growth through which to develop their potential, and teachers' teaching with multiple intelligences allows these students to develop that potential fully. Multiple intelligences theory particularly emphasizes applying intelligence in real-life situations, so integrating teaching with multiple intelligences can help teachers support students' learning through multiple forms of instruction and help students expand their abilities beyond the subjects emphasized in traditional education. This would help improve current teaching styles.

Everyone operates in a unique way, and with proper encouragement and guidance, each intelligence can reach a certain standard. For this reason, multiple intelligences allow each student to find their own niche and achieve adaptive development. The emergence of the knowledge-based economy in recent years reveals the importance of a nation's human capital. As the domestic workforce grows, putting the right person in the right job is an extremely important issue for individuals and enterprises. The development of multiple intelligences used to focus on kindergartens and elementary schools, because education experts and officials believed that students' multiple intelligences should be cultivated from childhood and then gradually extended to other levels. For high school and elementary school students, multiple intelligences can help teachers better understand students through their distribution of intelligences. For instance, multiple intelligences can be used to identify gifted students and provide them with suitable opportunities for growth. Multiple intelligences can also be used to support students with learning problems by adopting methods better suited to their learning. Regarding research on multiple intelligences, Ronald et al. (2001) studied kindergarten pupils, upper-grade elementary school students, and high school students in the fields of foreign language vocabulary memory, motivation to learn, mathematical problem solving, and reading comprehension in English and mathematics. Their findings showed that teaching activities applying multiple intelligences could significantly enhance students' learning achievement, promote motivation to learn, improve reading comprehension, and even strengthen cooperative learning with peers.
Broadly speaking, the framework of multiple intelligences should not be confined to kindergartens and elementary schools; it is also suitable for high schools, universities, and even graduate schools and in-service training. Many international MBA programs have added creative thinking courses to strengthen adaptability and creativity in the new era. For this reason, this study examines the effects of teaching with multiple intelligences on corporate employees' learning achievement and learning motivation, in the hope of helping the high-tech industry make effective use of people's unique gifts as it meets the challenge of developing human resource potential.

Literature Review

Simoncini et al. (2018) stated that teaching with multiple intelligences emphasized providing a democratic, respectful, and diverse learning environment in which each student could demonstrate their abilities, affirm their own performance, and develop strong learning interests, so that their learning outcomes could surpass their originally dominant intelligence. Inan and Erkus (2017) indicated that using multiple intelligences for curriculum design could provide a variety of intellectual learning activities and create an environment in which students felt comfortable. Learning was preparation for challenge; learners would develop by accepting challenges that exceeded their current abilities. Encouraging students to engage deeply and meaningfully with the topics being learned provided a solid and durable basis for learning new material. Applying multiple intelligences and creating diverse classrooms that develop students' strengths allowed students to maintain learning motivation through active participation, build self-confidence, and develop self-motivation. Minnier et al. (2019) mentioned that applying multiple intelligences to teaching differed from traditional teaching in that it adopted multiple instructional strategies and activities. Many studies have indicated that applying multiple intelligences to teaching enhances students' learning motivation and interests. The following hypothesis is therefore proposed in this study.

H1: Teaching with multiple intelligences would affect learning motivation.

Moncada and Mire (2017) indicated that teachers had to know each student's strengths and traits and appreciate individual advantages in order to give guidance and inspiration that strengthen learning confidence. Multiple intelligences reminded teachers to understand and apply diverse teaching methods, to transform existing curricula or units into multiple learning opportunities, and to consider carefully the concepts being taught and identify the most appropriate intelligence for communicating the content before planning curricula, in order to achieve proper teaching goals and promote students' learning achievement. Awang et al. (2017) proposed that teaching with multiple intelligences could enhance students' academic performance, leading to progress in English listening, speaking, reading, and writing. After multiple intelligences were applied to English teaching, students showed improved learning achievement, learning interests, and learning motivation. Several researchers have reported positive changes in students' learning achievement. Khong et al. (2017) found that upper-grade elementary school students taught science based on multiple intelligences outperformed those receiving traditional teaching. Accordingly, the following hypothesis is proposed in this study.

H2: Teaching with multiple intelligences would affect learning achievement.

Russell et al. (2017) considered that when students expected to acquire certain knowledge through e-learning, achieving meaningful and effective learning that firmly grasped the concepts relied on students' intrinsic motivation. Khow and Visvanathan (2017) saw the value of e-learning in that students could enhance their learning achievement by performing well and by showing intrinsic motivation to engage with broad professional knowledge and competence. Hunter and Hunter (2018) stated that students with high learning motivation had more definite goals and a stronger desire to learn the content well, and showed higher expectations and better self-efficacy. It was also found that students with high learning motivation performed better, and that students with intrinsic motivation outperformed those with extrinsic motivation. Consequently, the following hypothesis is proposed in this study.

H3: Learning motivation has significantly positive effects on learning achievement.
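Taken together, H1 to H3 describe a simple mediation-style path model: teaching with multiple intelligences (T) predicts learning motivation (M), and both predict learning achievement (Y). The study estimates these paths in Amos with latent variables; the Python sketch below is only a simplified illustration that uses observed composite scores and ordinary least squares on synthetic data with made-up coefficients. The helper names `ols` and `path_model` are hypothetical.

```python
import numpy as np


def ols(y, *predictors):
    """Least-squares fit of y on the predictors plus an intercept; returns slopes only."""
    X = np.column_stack([np.ones_like(y)] + list(predictors))
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef[1:]


def path_model(teaching, motivation, achievement):
    """Hypothetical helper: estimate the H1-H3 paths on observed composite scores."""
    (a,) = ols(motivation, teaching)                      # H1: teaching -> motivation
    c_prime, b = ols(achievement, teaching, motivation)   # H2 (direct path) and H3
    return {
        "a": a,                    # effect of teaching on motivation
        "b": b,                    # effect of motivation on achievement
        "c_prime": c_prime,        # direct effect of teaching on achievement
        "indirect": a * b,         # effect of teaching routed through motivation
        "total": c_prime + a * b,  # direct plus indirect effect
    }


# Synthetic data with made-up true paths a = 0.5, b = 0.4, c' = 0.3 (not the study's data).
rng = np.random.default_rng(0)
n = 5000
teaching = rng.normal(size=n)
motivation = 0.5 * teaching + rng.normal(size=n)
achievement = 0.3 * teaching + 0.4 * motivation + rng.normal(size=n)

paths = path_model(teaching, motivation, achievement)
print({name: round(float(value), 2) for name, value in paths.items()})
```

With OLS on a single sample, the total effect c' + ab equals the slope obtained by regressing achievement on teaching alone, which makes a quick consistency check. A full SEM would instead model each construct as a latent variable measured by several questionnaire items.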

Methodology

Measurement of Research Variables

(1) Teaching With Multiple Intelligences

Following Minnier et al. (2019), this study proposes the following dimensions for designing a curriculum that teaches with multiple intelligences according to student needs.

1. Intrapersonal intelligence: Intrapersonal intelligence is defined as the ability to use self-knowledge and self-perception to perceive one's own emotions, motivations, abilities, intentions, and desires keenly and accurately.

2. Interpersonal intelligence: Interpersonal intelligence is defined as the ability to effectively perceive and distinguish others' emotions, affections, intentions, feelings, motivations, and expectations, and to respond appropriately in interpersonal relationships so as to get along with people harmoniously.

3. Content-based curriculum: A content-based curriculum integrates knowledge with daily life, gives students opportunities to apply knowledge, makes good use of community resources, and draws on professionals in the community, so that students can learn through multiple intelligences and through more learning channels.

4. Situated learning: Learning situations are co-constructed and maintained by teachers and students. They are free, open, and cooperative, focus on overall conceptual knowledge, match teaching resources to students' sensory learning so that learning takes place in real-life situations, and respect differences in learners' learning outcomes.

(2) Learning Motivation

Based on the research of Cheng et al. (2018), this study divides students' learning motivation into an intrinsic learning motivation orientation and an extrinsic learning motivation orientation, as follows.

1. Intrinsic orientation: includes a preference for challenging courses, regarding learning as an interest and hobby, believing that learning broadens one's horizons, being able to learn new courses actively, and learning to develop one's potential and realize one's ideas.

2. Extrinsic orientation: includes learning to gain others' approval, achieve better performance, pass examinations or evaluations, show off to others, compete with classmates, obtain appreciation and attention from elders or the opposite sex, avoid punishment and scolding, avoid the shame of failure, and enter desired schools in the future.

(3) Learning Achievement

Following Zebari et al. (2018), this study proposes the following dimensions for learning achievement.

1. Learning effect: includes test performance, time taken to complete the schedule, and term performance.

2. Learning gain: includes learning satisfaction, achievement, and preference.

Method and Model

A structural equation model is used as the research method in this study, with Amos as the statistical tool. Structural equation modeling (SEM), also called covariance structure analysis, is used to analyze causal models and encompasses path analysis (PA), factor analysis, regression analysis, and analysis of variance. A structural equation model consists of two parts. The first part, the measurement model, builds latent variables from observed variables in order to understand the relationship between them; this model is estimated by Confirmatory Factor Analysis (CFA). The second part, the structural model, examines the causal relationships among latent variables. It resembles path analysis, except that path analysis uses observed variables, whereas the structural model uses latent variables.
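In the conventional LISREL-style notation (an assumption here; the study does not write out its equations), the two parts can be summarized as follows. ξ denotes the exogenous latent variable (teaching with multiple intelligences), η the endogenous latent variables (learning motivation and learning achievement), Λ the factor loadings, B and Γ the structural path coefficients, and δ, ε, ζ the error terms.

```latex
\begin{aligned}
\text{Measurement model:}\quad & \mathbf{x} = \Lambda_x \boldsymbol{\xi} + \boldsymbol{\delta},
  \qquad \mathbf{y} = \Lambda_y \boldsymbol{\eta} + \boldsymbol{\varepsilon} \\
\text{Structural model:}\quad & \boldsymbol{\eta} = B\,\boldsymbol{\eta} + \Gamma\,\boldsymbol{\xi} + \boldsymbol{\zeta}
\end{aligned}
```

Under this mapping, H1 corresponds to the Γ entry linking ξ to motivation, H2 to the Γ entry linking ξ to achievement, and H3 to the B entry linking motivation to achievement.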

Research Subject and Sampling Data

Taking employees in the Southern Taiwan Science Park as research subjects, 314 employees in the high-tech industry took part in a 30-week experimental study (2 h per week for total 30 h). The questionnaire survey was conducted after the end of the 30-week course, and statistical methods were applied to test the hypotheses. Of the 314 questionnaires distributed, 297 were valid, a valid retrieval rate of 95%.
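As a quick check of the reported retrieval rate, using only the questionnaire counts stated above:

```latex
\text{valid retrieval rate} = \frac{297}{314} \approx 0.946 \approx 95\%
```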

Reliability and Validity Test

Reliability and validity are important measurement standards: only data obtained from a questionnaire with demonstrated reliability and validity have research value. In this study, AMOS is used for Confirmatory Factor Analysis (CFA), and SPSS 21 is used to calculate reliability and validity, testing whether the questionnaire scales meet the reliability and validity standards.

Empirical Result

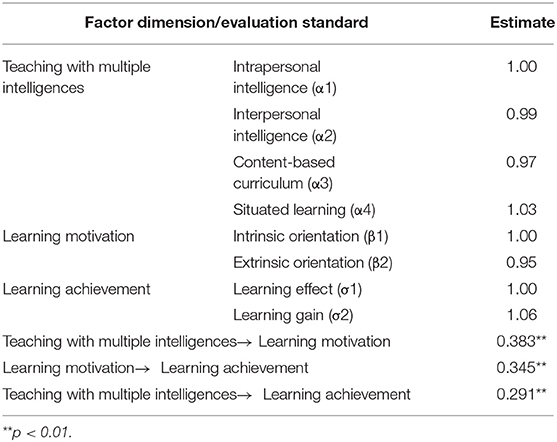

Factor analysis and validity analysis.

All items in this study undergo confirmatory factor analysis based on their factor loadings. Each factor loading should be higher than 0.7; an item that falls below this threshold is not considered representative of its construct and is removed. The Confirmatory Factor Analysis results show that all factor loadings for teaching with multiple intelligences, learning motivation, and learning achievement meet the standard (>0.7), indicating high validity of the questionnaire scale.

Cronbach's α is used in this study to evaluate reliability; a Cronbach's α higher than 0.7 meets the reliability standard, and the ideal value is higher than 0.9. The Cronbach's α values for teaching with multiple intelligences, learning motivation, and learning achievement in this study all exceed the suggested threshold, with the lowest value being 0.8, indicating high reliability of the questionnaire scale.
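For reference, Cronbach's α for a scale of k items is conventionally computed as α = k/(k - 1) · (1 - Σσᵢ²/σ²ₜ), where σᵢ² are the item variances and σ²ₜ is the variance of the summed scale score. The study reports using SPSS 21 for this step; the Python sketch below is an independent illustration of the standard formula, not the authors' procedure, and `cronbach_alpha` is a hypothetical helper name.

```python
import numpy as np


def cronbach_alpha(items):
    """Cronbach's alpha for one scale.

    items: 2-D array-like, rows = respondents, columns = items of the scale.
    """
    scores = np.asarray(items, dtype=float)
    k = scores.shape[1]
    if k < 2:
        raise ValueError("alpha needs at least two items")
    item_variances = scores.var(axis=0, ddof=1)      # sample variance of each item
    total_variance = scores.sum(axis=1).var(ddof=1)  # variance of the summed scale score
    return k / (k - 1) * (1.0 - item_variances.sum() / total_variance)


# Toy two-item scale answered by four respondents (illustrative values only).
responses = [[1, 2], [2, 1], [3, 4], [4, 3]]
alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.2f}")  # prints "alpha = 0.75"; compare with the 0.7 minimum and 0.9 ideal
```

Because α grows as items covary more strongly relative to their individual variances, two perfectly correlated items give α = 1, while weakly related items pull it toward 0.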

Test of Model Fit

“Maximum Likelihood” (ML) estimation is used in this study, and the Amos analysis converges. The indicators of the model's external quality (overall fit) show that (1) the χ² ratio χ²/df = 1.627, smaller than 3; (2) the goodness-of-fit index GFI = 0.97, higher than 0.9, and the adjusted goodness-of-fit index AGFI = 0.82, higher than 0.8; (3) the root mean square residual RMR = 0.029, smaller than 0.05; and (4) the incremental fit index IFI = 0.94, higher than 0.9. Overall, the actual sample of 297 exceeds the basic sample-size requirement, and all overall model fit indicators pass the test, reflecting good external quality of the structural equation model.
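The cut-offs cited in this paragraph can be collected into a small checklist. The Python sketch below simply encodes the stated thresholds (χ² ratio, i.e. χ²/df, below 3; GFI above 0.9; AGFI above 0.8; RMR below 0.05; IFI above 0.9) and applies them to the reported values. The names `FIT_CUTOFFS` and `check_fit` are hypothetical, and the sketch does not compute the indices themselves, which come from the SEM software.

```python
import operator

# Cut-offs as stated in the text: (comparison to apply, threshold).
FIT_CUTOFFS = {
    "chi2_df": (operator.lt, 3.0),   # chi-square divided by degrees of freedom
    "GFI": (operator.gt, 0.90),
    "AGFI": (operator.gt, 0.80),
    "RMR": (operator.lt, 0.05),
    "IFI": (operator.gt, 0.90),
}


def check_fit(indices):
    """Return {index name: True if the value passes its cut-off}."""
    return {name: compare(indices[name], cutoff)
            for name, (compare, cutoff) in FIT_CUTOFFS.items()}


reported = {"chi2_df": 1.627, "GFI": 0.97, "AGFI": 0.82, "RMR": 0.029, "IFI": 0.94}
results = check_fit(reported)
print(results)
print("overall fit acceptable:", all(results.values()))
```

Storing the comparison operator next to each threshold keeps "lower is better" indices (χ²/df, RMR) and "higher is better" indices (GFI, AGFI, IFI) in one table.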

Regarding the internal quality of the structure, the squared multiple correlation (SMC) of each manifest variable is higher than 0.5, indicating good measurement indicators for the latent variables. Furthermore, the latent variables of teaching with multiple intelligences, learning motivation, and learning achievement show composite reliability higher than 0.6, and the average variance extracted for each dimension is higher than 0.5, meeting the requirements for the internal quality of the model.
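The loading screen and internal-quality criteria can be illustrated with standardized factor loadings λ for indicators that each load on a single factor. Using common formulas (assumed here; the article does not state them), an indicator's SMC is λ², composite reliability (CR) is (Σλ)² / ((Σλ)² + Σ(1 - λ²)), and average variance extracted (AVE) is the mean of λ². The Python sketch below uses hypothetical helper names and made-up loadings, not the study's estimates.

```python
import numpy as np


def screen_items(loadings, threshold=0.7):
    """Keep only items whose standardized loading exceeds the threshold."""
    return {item: lam for item, lam in loadings.items() if lam > threshold}


def smc(loadings):
    """Squared multiple correlation of each indicator (lambda squared)."""
    return np.asarray(loadings, dtype=float) ** 2


def composite_reliability(loadings):
    lam = np.asarray(loadings, dtype=float)
    error_variance = 1.0 - lam**2        # unexplained variance of each indicator
    squared_sum = lam.sum() ** 2
    return squared_sum / (squared_sum + error_variance.sum())


def average_variance_extracted(loadings):
    return float(np.mean(smc(loadings)))  # mean of the indicators' SMCs


# Made-up loadings for one construct; q4 falls below 0.7 and is dropped.
raw = {"q1": 0.82, "q2": 0.78, "q3": 0.88, "q4": 0.61}
kept = list(screen_items(raw).values())
print("kept items:", list(screen_items(raw)))
print("all SMC > 0.5:", bool(np.all(smc(kept) > 0.5)))
print("CR  = %.3f (criterion > 0.6)" % composite_reliability(kept))
print("AVE = %.3f (criterion > 0.5)" % average_variance_extracted(kept))
```

Because AVE is the average SMC, a construct whose indicators all have SMC above 0.5 automatically satisfies the AVE criterion as well.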

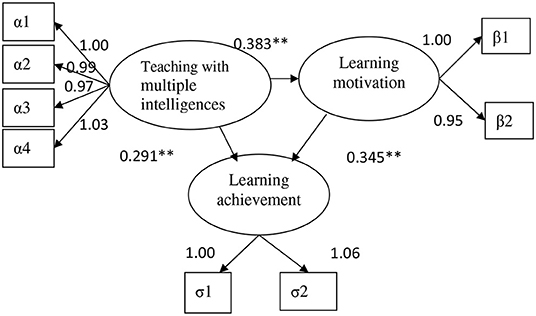

Test of Path Relationship

Intrapersonal intelligence, intrinsic orientation, and learning effect serve as reference indicators, with their loadings fixed at 1. As the causal paths in Table 1 and Figure 1 show, the estimates for all other dimensions and variables are significant. The estimate for interpersonal intelligence (0.99) indicates slightly less explanatory power than intrapersonal intelligence, whereas the estimate for learning gain (1.06) indicates greater explanatory power than learning effect.

Table 1 . Overall linear structure model analysis result.

Figure 1 . Path relationship.

Teaching with multiple intelligences can effectively enhance the learning motivation of employees in the high-tech industry, which in turn promotes and sustains their learning achievement. These results are consistent with most previous research (Ikiz and Cakar, 2010; Mahasneh, 2013). As Akkuzu and Akçay (2011) revealed, teaching with multiple intelligences was more effective than traditional teaching styles, and its activities were interesting enough to encourage students to participate in course activities. In this case, teaching with multiple intelligences allows employees in the high-tech industry to carry out learning activities with their strongest intelligences and to face learning challenges more confidently, rather than being forced to learn through weaker intelligences and getting half the results for twice the effort; this, in turn, helps improve organizational learning performance. Computer use is unavoidable in modern life, and PowerPoint slides, films, or music videos can effectively attract the attention of employees in the high-tech industry. Well begun is half done; moreover, computer-assisted teaching can greatly help employees with activities designed for the more difficult intelligences, such as spatial, naturalist, and musical intelligence. Teachers should therefore apply such resources flexibly. Teachers should also take a long-term view rather than focusing on immediate results. Cultivating employees' active learning and high learning motivation would multiply the effectiveness of learning and make it last.

Consistent with most past research, the results reveal that teaching with multiple intelligences can indeed effectively promote learning achievement and motivation to learn (Gardner and Hatch, 1989; Barrington, 2004; Akkuzu and Akçay, 2011). Employees in the high-tech industry showed marked gains in learning achievement and learning motivation after being taught with multiple intelligences. Accordingly, organizations could hold relevant academic competitions with appropriate rewards, putting employees' learning to effective use and increasing their learning motivation. Unlike traditional teaching, teaching with multiple intelligences involves more hands-on practice and participation. This allows employees in the high-tech industry to take ownership of their learning rather than simply receiving knowledge. As a result, employees would gain self-efficacy. For instance, through group learning, observation, and brainstorming, employees in the high-tech industry would improve their reports and learning comprehension, and would deepen and broaden their learning motivation. During instruction, teachers should praise and encourage employees' progress, create a low-pressure, relaxed, and comfortable learning environment, and build employees' confidence to strengthen their learning motivation.

Data Availability Statement

The original contributions presented in the study are included in the article/supplementary materials; further inquiries can be directed to the corresponding authors.

Ethics Statement

The present study was conducted in accordance with the recommendations of the Ethics Committee of the Fuzhou Institute of Technology, with written informed consent obtained from all the participants. All the participants were asked to read and approve the ethical consent form before participating in the present study. The participants were also asked to follow the guidelines in the form during the research. The research protocol was approved by the Ethical Committee of the Fuzhou Institute of Technology.

Author Contributions

D-YL performed the initial analyses and wrote the manuscript. J-HC, C-MC, K-PH, and CJ assisted in the data collection and data analysis. All authors revised and approved the submitted version of the manuscript.

Conflict of Interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Publisher's Note

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

Acknowledgments

The authors thank the reviewers for their valuable comments.

Akkuzu, N., and Akçay, H. (2011). The design of a learning environment based on the theory of multiple intelligence and the study its effectiveness on the achievements, attitudes and retention of students. Procedia Comput. Sci. 3, 1003–1008. doi: 10.1016/j.procs.2010.12.165


Awang, H., Samad, N. A., Mohd Faiz, N. Z., Roddin, R., and Kankia, J. D. (2017). Relationship between learning styles preferences and academic achievement. IOP Conf. Ser. Mater. Sci. Eng. 226:012193. doi: 10.1088/1757-899X/226/1/012193

Barrington, E. (2004). Teaching to student diversity in higher education: how multiple intelligences theory can help. Teach. High. Educ. 9, 421–434. doi: 10.1080/1356251042000252363

Cheng, P., Tan, L., Ning, P., Li, L., Gao, Y., Wu, Y., et al. (2018). Comparative effectiveness of published interventions for elderly fall prevention: a systematic review and network meta-analysis. Int. J. Environ. Res. Public Health 15:498. doi: 10.3390/ijerph15030498


Gardner, H., and Hatch, T. (1989). Multiple intelligences go to school: educational implications of the theory of multiple intelligences. Educ. Res. 18:4. doi: 10.2307/1176460

Hunter, J. L., and Hall, C. M. (2018). A survey of K-12 teachers' utilization of social networks as a professional resource. Educ. Inform. Technol. 23, 633–658. doi: 10.1007/s10639-017-9627-9

Ikiz, F., and Cakar, F. (2010). The relationship between multiple intelligences and academic achievements of second grade students. GüZ J. 2, 83–92.


Inan, C., and Erkus, S. (2017). The effect of mathematical worksheets based on multiple intelligences theory on the academic achievement of the students in the 4th grade primary school. Universal J. Educ. Res. 5, 1372–1377. doi: 10.13189/ujer.2017.050810

Khong, L., Berlach, R. G., Hill, K. D., and Hill, A. M. (2017). Can peer education improve beliefs, knowledge, motivation and intention to engage in falls prevention amongst community-dwelling older adults? Eur. J. Ageing 14, 243–255. doi: 10.1007/s10433-016-0408-x

Khow, K. S. F., and Visvanathan, R. (2017). Falls in the aging population. Clin. Geriatr. Med. 33, 357–368. doi: 10.1016/j.cger.2017.03.002

Mahasneh, A. M. (2013). The relationship between multiple intelligence and self-efficacy among sample of Hashemite university students. Int. J. Educ. Res. 1, 1–12.

Minnier, W., Leggett, M., Persaud, I., and Breda, K. (2019). Four smart steps: fall prevention for community-dwelling older adults. Creat. Nurs. 25, 169–175. doi: 10.1891/1078-4535.25.2.169

Moncada, L. V. V., and Mire, L. G. (2017). Preventing falls in older persons. Am. Fam. Phys. 96, 240–247.

Ronald, E., Riggio, S., Elaine, M., and Francis, J. P. (2001). Multiple intelligences and leadership: an overview. Mult. Intelligences Leadersh. 15–20. doi: 10.4324/9781410606495-6

Russell, K., Taing, D., and Roy, J. (2017). Measurement of fall prevention awareness and behaviours among older adults at home. Can. J. Aging 36, 522–535. doi: 10.1017/S0714980817000332

Simoncini, K., Elliott, S., Carr, V., Manson, E., Simeon, L., and Sawi, J. (2018). Children's right to play in Papua New Guinea: insights from children in years 3–8. Int. J. Play 7, 146–160. doi: 10.1080/21594937.2018.1495993

Zebari, S., Allo, H., and Mohammedzadeh, B. (2018). Multiple intelligences-based planning of EFL classes. Adv. Lang. Lit. Stud. 9, 98–103. doi: 10.7575/aiac.alls.v.9n.2p.98

Keywords: multiple intelligences, learning achievement, learning motivation, content-based curriculum, situated learning

Citation: Lei D-Y, Cheng J-H, Chen C-M, Huang K-P and James Chou C (2021) Discussion of Teaching With Multiple Intelligences to Corporate Employees' Learning Achievement and Learning Motivation. Front. Psychol. 12:770473. doi: 10.3389/fpsyg.2021.770473

Received: 03 September 2021; Accepted: 20 September 2021; Published: 18 October 2021.


Copyright © 2021 Lei, Cheng, Chen, Huang and James Chou. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Jui-Hsi Cheng, ray1806@gmail.com



27 - The Theory of Multiple Intelligences

from Part VI - Kinds of Intelligence

Published online by Cambridge University Press: 13 December 2019

The theory of multiple intelligences (MI) was set forth in 1983 by Howard Gardner. The theory holds that all individuals have several, relatively autonomous intelligences that they deploy in varying combinations to solve problems or create products that are valued in one or more cultures. Together, the intelligences underlie the range of adult roles found across cultures. MI thus diverges from theories entailing general intelligence, or g, which hold that a single mental capacity is central to all human problem-solving and that this capacity can be ascertained through psychometric assessment. This chapter presents the evidence and criteria used to develop MI, clarifies misconceptions about the theory, and examines critiques of the theory. It considers Einstein’s typology of scientific theories through which it is possible to understand MI as a “constructive theory.” It then examines issues of assessment entailing MI and educational applications of the theory.


- The Theory of Multiple Intelligences

- By Mindy L. Kornhaber

- Edited by Robert J. Sternberg , Cornell University, New York

- Book: The Cambridge Handbook of Intelligence

- Online publication: 13 December 2019

- Chapter DOI: https://doi.org/10.1017/9781108770422.028


Gardner’s multiple intelligences in science learning: A literature review


Fibriyana Safitri , Dadi Rusdiana , Wawan Setiawan; Gardner’s multiple intelligences in science learning: A literature review. AIP Conf. Proc. 28 April 2023; 2619 (1): 100014. https://doi.org/10.1063/5.0122560


The purpose of this article is to review articles related to multiple intelligences in learning, to identify multiple intelligences approaches in learning activities, and to determine which intelligences play an important role in science learning. This paper is the result of a review of 40 articles, published from 2011 to 2021, on learning activities that use multiple intelligence-based approaches, media, and learning models. The multiple intelligences theory was put forward by Howard Gardner, an expert in education and psychology. According to Gardner’s theory, there are nine types of intelligence, each with different characteristics: verbal-linguistic, visual-spatial, musical, logical-mathematical, interpersonal, intrapersonal, bodily-kinesthetic, naturalist, and existential intelligence. The method used in this study is a systematic literature review with the following stages: determining research questions; determining criteria; generating a framework for articles; searching, filtering, and selecting; analyzing and interpreting the content of each reviewed article; and writing and publishing the article. This study discusses Gardner’s multiple intelligences theory, the multiple intelligences approach in learning activities, and the most influential intelligences in science learning. The results show that several intelligences play an important role in science learning.


- Online ISSN 1551-7616

- Print ISSN 0094-243X



- Front Psychol

“Neuromyths” and Multiple Intelligences (MI) Theory: A Comment on Gardner, 2020

Introduction

Neuromyths are misconceptions about the brain and learning. The most pervasive neuromyths contain a “kernel of truth” (Grospietsch and Mayer, 2018). For instance, consider the following popular neuromyth: People are either “left-brained” or “right-brained,” which helps to explain individual differences in learning. On the one hand, classical neuroscience findings did provide solid basic evidence that the human brain displays a certain degree of functional hemispheric lateralization (Gazzaniga et al., 1962, 1963). On the other hand, the idea of a “dominant” cerebral hemisphere is not supported by neuroscience (Nielsen et al., 2013). Because they arise as mutations of such kernels of truth, neuromyths are typically defined as distortions, oversimplifications, or abusive extrapolations of well-established neuroscientific facts (OECD, 2002; Pasquinelli, 2012; Howard-Jones, 2014).

In the past decade, numerous surveys have been conducted in more than 20 countries around the world to measure the prevalence of neuromyth beliefs among educators (Torrijos-Muelas et al., 2021). A large-scale survey conducted in Quebec, Canada, by Blanchette Sarrasin et al. (2019) revealed that 68% of teachers somewhat or strongly agreed (rating of 4 or 5 on a 5-point scale) with the following neuromyth statement:

Students have a predominant intelligence profile, for example logico-mathematical, musical, or interpersonal, which must be considered in teaching .

This is not an idiosyncratic case in the field (see Table 1). In another survey conducted in Spain, Ferrero et al. (2020) reported that teachers gave an average rating of 4.47 [on a 5-point scale, from 1 (definitely false) to 5 (definitely true)] to a closely similar neuromyth statement:

Adapting teaching methods to the “multiple intelligences” of students leads to better learning.

Table 1. Prevalence of beliefs, among educators, about the false claim that tailoring instruction to pupils' MI intelligence profiles promotes learning, in different countries around the world.

| Study | Country | Survey statement | Participants | Results |
|---|---|---|---|---|
| Blanchette Sarrasin et al. (2019) | Quebec, Canada | | In-service teachers (n = 972) | 68% of teachers somewhat or strongly agreed (rating of 4 or 5 on a 5-point scale) with the statement. |
| Craig et al. | Canada/USA | (inverted item) | In-service teachers (n = 253) | 24.9% correct answers. |
| Ferrero et al. (2020) | Spain | | In-service teachers (n = 45) | M = 4.47 on a 5-point scale, from 1 (definitely false) to 5 (definitely true). |
| Rogers and Cheung | Hong Kong | | Pre-service teachers (n = 65) | M = 4.57 on a 6-point scale. |
| Ruhaak and Cook | USA | | Special education pre-service teachers (n = 129) | 90% of prospective teachers indicated they would implement the instructional practice (rating of 3 or 4 on a 4-point scale). |

The opening survey statement from Blanchette Sarrasin et al. (2019) caught Howard Gardner's attention because it clearly draws on his Multiple Intelligences (henceforth MI) theory (Gardner, 1983). In a recent paper, Gardner (2020) says he was disturbed by this so-called “neuromyth,” both because it says nothing about the brain and because it is not an idea that he has put forth or defended. On that basis, Gardner (2020) argues that MI theory does not qualify as a neuromyth. According to the author of Frames of Mind, there may have been merit in exposing neuromyths some years ago, but the practice has gone too far and has now become problematic rather than helpful.

In this opinion paper, I first challenge Gardner's (2020) view that MI theory contains no “neuro.” Then, I highlight the fact that Gardner and his research team spent an entire decade, through the Spectrum Project, contemplating the hypothesis (embedded in the opening survey statement) that matching modes of instruction to MI intelligence profiles promotes learning. When taken for granted, such an unproven research hypothesis becomes a false belief: a neuromyth derived from MI theory. Next, I argue that research aimed at testing the MI–instruction “matching” hypothesis is still hampered by a lack of satisfactory measures of MI intelligence profiles. Finally, I show how Gardner's (2020) position may, paradoxically, entertain the “problematic” neuromyth. To foster a more constructive dialog between scientists and educators, I follow Gardner's (2020) advice to properly qualify (i.e., to debunk) the survey statement, in terms of both robustness and caveats.

Biological Basis for Specialized Intelligences

Gardner (2020) states that “there is no mention of the brain” in his original work, insisting that “MI is a psychological theory, pure and simple” (p. 3). Because MI theory contains no “neuro,” he claims, there is no reason why it should be associated with the “provocative and contentious neuromyth” term. However, Gardner has typically called MI “a psychobiological theory: psychological because it is a theory of the mind, biological because it privileges information about the brain, the nervous system, and ultimately, [he] believe[s], the human genome” (Gardner, 2011b, p. 7). In the opening chapters of Frames of Mind, after disposing of traditional IQ theories of intelligence, Gardner (1983) draws on the brain science of the day to posit the basic premise of MI theory: that intelligences are distinct computational capacities that have emerged, over the course of evolution and across cultures, from the human cerebral cortex:

We find, from recent work in neurology, increasingly persuasive evidence for functional units in the nervous systems. There are units subserving microscopic abilities in the individual columns of the sensory or frontal areas; and there are much larger units, visible to inspection, which serve more complex and molar human functions, like linguistic or spatial processing. These suggest a biological basis for specialized intelligences (p. 57).

Such neurological evidence led Gardner (1983) to include potential isolation by brain damage as one of eight criteria for defining an intelligence, and indeed as “the single most instructive line of evidence” (p. 63). Critical insights for MI theory also came from Gardner's earlier neuropsychological research, conducted in the 1970s, on brain-damaged patients suffering from aphasia (Gardner, 2011b, 2016). Consistent with intelligences as biopsychological potentials to process information, Davis et al. (2011) noted that it would be “desirable to secure an atlas of the neural correlates of each of the intelligences” (p. 495), and current neuroscientific investigations of MI theory are proceeding in that direction. For instance, a brain lesion restricted to the left parietal lobe would selectively impair the capacity to discriminate living from non-living entities, i.e., naturalistic intelligence (Shearer and Karanian, 2017).

But even with no “neuro” at all, MI theory would still qualify as a potential source of neuromyths, as any scientific theory could, be it psychological, neurological, or a mix of both. Myths may have nothing to do with the brain but are, nonetheless, myths. Over time, the term “neuromyth” has become a common umbrella for a wide range of unsubstantiated claims, especially in the education field. Some of those claims clearly evoke the brain (e.g., We only use 10% of our brain), while others do not (e.g., Listening to Mozart's music makes children smarter). Would it be more appropriate to drop the “neuro” prefix and collectively call them “edumyths”? Actually, it does not matter. They are myths.

Above all, the primary aim of MI theory was to expand the traditional, narrow IQ concept of intelligence to the whole spectrum of brain computational powers, not to provide brain-based educational recommendations. The basic idea of MI theory is that Homo sapiens is biologically endowed with a set of relatively autonomous mental tools (termed “intelligences”) that can be activated to solve problems or to fashion products that are of cultural value. MI theory posits that every individual has, at their disposal, a full intellectual profile of eight intelligences. From one individual to another, some intelligences exhibit low, some exhibit average, and some others exhibit strong biopsychological potentials, but the whole MI intelligence profile—a spectrum of brain computational powers working in synergy—is mobilized to adapt Homo sapiens to newly encountered, culture-bound situations.

The Elusive Quest for Optimal Matching

Contrary to Gardner's (2020) allegation, the claim in the opening survey statement is not that MI theory is a neuromyth. There has been considerable progress in brain science over the past four decades, and the neurological underpinnings of the original rendition of MI theory (Gardner, 1983) might need an update (Gardner, 2016), but MI theory is still a plausible, legitimate scientific theory of intelligence. The false claim in the opening survey statement is that tailoring instruction to pupils' MI intelligence profiles promotes learning. Gardner (2020) states that he has “gone to great pains to emphasize that even if the theory is plausible, no educational recommendations follow directly from it” (p. 3). However, since the inception of MI theory some 40 years ago, Gardner has oscillated between two views regarding applications of MI theory in education: the “Rorschach” view and the “matching” view.

According to the “Rorschach” view, defended by Gardner (2020), no direct educational implications derive from research findings. Cultural values always mediate the leap from science to practice. In this view, MI theory is a catalyst for reflection on a pluralistic, rather than a unitary, view of intelligence (Gardner, 1995a). To use Gardner's (2006) analogy, from the teachers' standpoint, MI theory is an educational Rorschach test, a backdrop “to support almost any pet educational idea that they had” (Gardner, 2011b, p. 5). MI theory implies only two non-prescriptive teaching practices: “individualizing” and “pluralizing.” By using multiple “entry points” (presenting the teaching materials in more than one way), teachers might activate all intelligences and foster optimal learning, “since some individuals learn better through stories, others through work of art, or hands-on activities” (Gardner, 2011b, p. 7).

As for the alternative “matching” view, clearly embedded in the opening neuromyth statement, Gardner (2020) states that it is “not an idea that [he] has put forth or defended” (p. 2). However, in the closing chapter of Frames of Mind, from a purely speculative and prospective standpoint, Gardner (1983) is quite sympathetic to the idea of matching teaching materials and modes of instruction to MI intelligence profiles:

Educational scholars nonetheless cling to the vision of the optimal match between student and material. In my own view, this tenacity is legitimate: after all, the science of educational psychology is still young; and in the wake of superior conceptualizations and finer measures [emphasis mine], the practice of matching the individual learner's profile to the materials and modes of instruction may still be validated. Moreover, if one adopts M.I. theory, the options for such matches increase: as I have already noted, it is possible that the intelligences can function both as subject matters in themselves and as the preferred means for inculcating diverse subject matter (p. 390).

Albeit speculative, and much to Gardner's surprise, these few lines have attracted tremendous interest in the education field. But testing the matching hypothesis required, in the first place, “finer measures” of MI intelligence profiles. As an alternative to IQ-like paper-and-pencil (standardized) intelligence tests, Gardner (1992) proposed natural observations of Homo sapiens freely evolving in ecologically valid, culturally meaningful contexts. For instance, to measure spatial intelligence, “one should allow an individual to explore a terrain for a while and see whether she can find her way around it reliably” (Gardner, 1995b, p. 202). Gardner and his research team spent an entire decade after the publication of Frames of Mind exploring the plausibility of an MI theory-based, “child-centered” learning program. Their most ambitious initiative was the Spectrum Project, aimed at creating a rich, museum-like environment for children to deploy their biopsychological potentials (intelligences). A set of 15 learning activities covering seven knowledge domains was created to provide a contextually valid assessment battery of MI intelligence profiles. For instance, to assess interpersonal intelligence, children manipulated figures in a scaled-down 3D replica of their classroom (Chen and Gardner, 2012). The distribution of strengths and weaknesses across the range of intelligences was called the Spectrum profile. The ultimate goal was to develop individualized educational interventions adapted to MI intelligence profiles.

However, MI theory not only posits the existence of eight neurologically plausible intelligences; it also posits that each individual actually combines several intelligences to tackle any given task. This makes it unlikely that a test could capture purely specific intelligence strengths and weaknesses (e.g., a test that would isolate bodily-kinesthetic from musical, spatial, and interpersonal intelligences while observing an individual dancing the tango). Although the 15 assessment tasks from the Spectrum battery have been “shown to demonstrate reliability” (Davis et al., 2011, p. 496), valid measures of single or multiple deployment of the eight intelligences are still unsettled:

Direct experimental tests of the [MI] theory are difficult to implement and so the status of the theory within academic psychology remains indeterminate. The biological basis of the theory—its neural and genetic correlates—should be clarified in the coming years. But in the absence of consensually agreed upon measures of the intelligences, either individually or in conjunction with one another, the psychological validity of the theory will continue to be elusive (Davis et al., 2011, p. 498).

Reflecting back on assessment tools for the multiple intelligences, Gardner (2016) admitted that he has “not devoted significant effort to creating such tests” (p. 169). In light of the enormous investment of time and money required, he did not want to be “in the assessment business” (Gardner, 2011a, p. xiii). Above all, measuring multiple intelligences is inconsistent with Gardner's critique of the traditional IQ theories of intelligence and, for that reason, he shows “reluctance to create a new kind a strait jacket (Johnny is musically smart but spatially dumb)” (Gardner, 2011b, p. 5).

Accordingly, the opening survey statement is considered a neuromyth because of a lack of compelling evidence (mainly due to unsatisfactory measures of MI intelligence profiles) that matching modes of instruction to MI intelligence profiles promotes learning. This intuitively appealing hypothesis, contemplated by Gardner's research team at some point (the Spectrum Project) but still open to scientific inquiry, has somehow been taken for granted by laypersons and, over time, embedded in popular culture. In other words, it became a neuromyth.

Entertaining the “Problematic” Neuromyth

Gardner (2020) blames survey designers for putting up statements “conflating science and practice” and for creating rather than exposing neuromyths. He warns that by “waving the provocative neuromyth flag” with the opening survey statement, the baby (MI theory) might be thrown out with the bathwater (unsubstantiated educational claims derived from it).

First, neither Blanchette Sarrasin et al. (2019) nor other researchers in the field deliberately put up neuromyth statements in their respective surveys. Neuromyths are creatures of their own, to be chased, not created. Twenty-five years ago, Gardner (1995b) debunked seven common myths that had grown up around MI theory. Myth #3 (“Multiple intelligences are learning styles”) was so persistent that Gardner (2013) found it necessary to debunk it once again in the new millennium. Survey designers simply exposed yet another, very prevalent myth: Tailoring instruction to pupils' MI intelligence profiles promotes learning.

Second, any scientific theory is a potential source of neuromyths. As noted by Geake (2008), the most pervasive neuromyths are ingrained in valid science. Is Roger Sperry's Nobel Prize at stake just because abusive extrapolations of his findings on functional hemispheric lateralization have given rise to one of the most pervasive neuromyths (“left-brained” vs. “right-brained” people)? By exposing such a popular neuromyth, might the baby (Sperry's contributions to neuroscience) be thrown out with the bathwater? The scientific integrity of MI theory cannot be harmed by the “problematic” neuromyth. Legitimate scientific theories and discoveries are challenged by empirical scrutiny, not by false beliefs loosely inspired by them.

Gardner (2020) argues that the way claims are conveyed in neuromyth survey statements (in an all-or-none, true/false fashion) is deceptive. To foster a more constructive dialog between scientists and educators, he advocates that research findings with potential educational implications be properly qualified, in terms of both robustness and caveats. Surprisingly, rather than qualifying the message (the false claim in the opening survey statement), Gardner (2020) shoots the messengers (the survey designers). A “more constructive” approach would be (1) to underline the scientific robustness of MI theory, namely its neurological plausibility (Posner, 2004), and (2) to disclose caveats pertaining to the direct application of MI theory in educational settings, most notably that research aimed at testing the MI–instruction “matching” hypothesis is still hampered by the lack of consensually agreed-upon measures of MI intelligence profiles (Davis et al., 2011). By shooting the messengers rather than qualifying the message (that is, debunking yet another common myth that has grown up around MI theory), Gardner (2020) refrains from pulling the bathtub plug and entertains unsubstantiated educational implications of a legitimate scientific theory of intelligence.

Author Contributions

The author confirms being the sole contributor of this work and has approved it for publication.

Conflict of Interest

The author declares that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Publisher's Note

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

Acknowledgments

I am thankful to Liliane Lalonde for her help with the English language.

Funding. This work was supported by the Canada Foundation for Innovation John R. Evans Leaders Fund grant 18356.

- Blanchette Sarrasin J., Riopel M., Masson S. (2019). Neuromyths and their origin among teachers in Quebec. Mind Brain Educ. 13, 100–109. 10.1111/mbe.12193

- Chen J.-Q., Gardner H. (2012). Assessment of intellectual profile: a perspective from multiple-intelligences theory, in Contemporary Intellectual Assessment: Theories, Tests, and Issues (3rd ed.), eds Flanagan D. P., Harrison P. L. (New York, NY: Guilford Press), 145–155.

- Craig H. L., Wilcox G., Makarenko E. M., MacMaster F. P. (2021). Continued educational neuromyth belief in pre- and in-service teachers: a call for de-implementation action for school psychologists. Can. J. Sch. Psychol. 36, 127–141. 10.1177/0829573520979605

- Davis K., Christodoulou J., Seider S., Gardner H. (2011). The theory of multiple intelligences, in The Cambridge Handbook of Intelligence, eds Sternberg R. J., Kaufman S. B. (New York, NY: Cambridge University Press), 485–503.

- Ferrero M., Hardwicke T. E., Konstantinidis E., Vadillo M. A. (2020). The effectiveness of refutation texts to correct misconceptions among educators. J. Exp. Psychol. Appl. 26, 411–421. 10.1037/xap0000258

- Gardner H. (1983). Frames of Mind: A Theory of Multiple Intelligences. New York, NY: Basic Books.

- Gardner H. (1992). Assessment in context: the alternative to standardized testing, in Changing Assessments: Alternative Views of Aptitude, Achievement, and Instruction, eds Gifford B. R., O'Connor M. C. (Boston, MA: Kluwer), 77–119.

- Gardner H. (1995a). “Multiple intelligences” as a catalyst. Engl. J. 84, 16–18. 10.2307/821182

- Gardner H. (1995b). Reflections on multiple intelligences: myths and message. Phi Delta Kappan 77, 200–209.

- Gardner H. (2006). Multiple Intelligences: New Horizons in Theory and Practice. New York, NY: Basic Books.

- Gardner H. (2011a). Frames of Mind: A Theory of Multiple Intelligences (30th anniversary ed.). New York, NY: Basic Books.

- Gardner H. (2011b). The theory of multiple intelligences: as psychology, as education, as social science [Paper presentation]. José Cela University, Madrid, Spain. Available online at: https://howardgardner01.files.wordpress.com/2012/06/473-madrid-oct-22-2011.pdf (accessed October 22, 2011).

- Gardner H. (2013). “Multiple intelligences” are not “learning styles.” The Washington Post. Available online at: https://www.washingtonpost.com/news/answer-sheet/wp/2013/10/16/howard-gardner-multiple-intelligences-are-not-learning-styles/ (accessed October 16, 2013).

- Gardner H. (2016). Multiple intelligences: prelude, theory, and aftermath, in Scientists Making a Difference: One Hundred Eminent Behavioral and Brain Scientists Talk About Their Most Important Contributions, eds Sternberg R. J., Fiske S. T., Foss D. J. (New York, NY: Cambridge University Press), 167–170.

- Gardner H. (2020). “Neuromyths”: a critical consideration. Mind Brain Educ. 14, 2–4. 10.1111/mbe.12229

- Gazzaniga M. S., Bogen J. E., Sperry R. W. (1962). Some functional effects of sectioning the cerebral commissures in man. Proc. Natl. Acad. Sci. U.S.A. 48, 1765–1769. 10.1073/pnas.48.10.1765

- Gazzaniga M. S., Bogen J. E., Sperry R. W. (1963). Laterality effects in somesthesis following cerebral commissurotomy in man. Neuropsychologia 1, 209–215. 10.1016/0028-3932(63)90016-2

- Geake J. (2008). Neuromythologies in education. Educ. Res. 50, 123–133. 10.1080/00131880802082518

- Grospietsch F., Mayer J. (2018). Professionalizing pre-service biology teachers' misconceptions about learning and the brain through conceptual change. Educ. Sci. 8:120. 10.3390/educsci8030120

- Howard-Jones P. A. (2014). Neuroscience and education: myths and messages. Nat. Rev. Neurosci. 15, 817–824. 10.1038/nrn3817

- Nielsen J. A., Zielinski B. A., Ferguson M. A., Lainhart J. E., Anderson J. S. (2013). An evaluation of the left-brain vs. right-brain hypothesis with resting state functional connectivity magnetic resonance imaging. PLoS ONE 8:e71275. 10.1371/journal.pone.0071275

- Organisation for Economic Co-operation and Development (OECD) (2002). Understanding the Brain: Towards a New Learning Science. Paris: OECD Publishing.

- Pasquinelli E. (2012). Neuromyths: why do they exist and persist? Mind Brain Educ. 6, 89–96. 10.1111/j.1751-228X.2012.01141.x

- Posner M. I. (2004). Neural systems and individual differences. Teach. Coll. Rec. 106, 24–30. 10.1111/j.1467-9620.2004.00314.x

- Rogers J., Cheung A. (2020). Pre-service teacher education may perpetuate myths about teaching and learning. J. Educ. Teach. 46, 417–420. 10.1080/02607476.2020.1766835

- Ruhaak A. E., Cook B. G. (2018). The prevalence of educational neuromyths among pre-service special education teachers. Mind Brain Educ. 12, 155–161. 10.1111/mbe.12181

- Shearer C. B., Karanian J. M. (2017). The neuroscience of intelligence: empirical support for the theory of multiple intelligences? Trends Neurosci. Educ. 6, 211–223. 10.1016/j.tine.2017.02.002

- Torrijos-Muelas M., González-Víllora S., Bodoque-Osma A. R. (2021). The persistence of neuromyths in the educational settings: a systematic review. Front. Psychol. 11:591923. 10.3389/fpsyg.2020.591923

The Lasting Impact of Multiple Intelligences

In 1983, in one of the most influential books in a peerlessly influential career, Howard Gardner upended popularly accepted notions of how children think and learn. He proposed, in Frames of Mind, that there was not just a single intelligence that could be measured by one IQ test, but multiple intelligences: many ways of learning and knowing.

The notion of multiple intelligences — and Gardner’s follow-up ideas about teaching individual students in the ways they can best learn, and teaching important concepts in multiple ways, for many access points — shifted the paradigm, ushering in an era of personalized learning whose promise is still being explored.

Gardner never rested at multiple intelligences. In an award-winning career, which has included MacArthur and Guggenheim fellowships, the University of Louisville’s Grawemeyer Award in Education, and innumerable honorary degrees, he has focused on ethical development, citizenship (including digital citizenship), professionalism, and the value of college and the liberal arts. He may have retired from teaching in 2019, but his work continues.

The Theory of Multiple Intelligences

- January 2011

- In book: Cambridge Handbook of Intelligence (pp.485-503)

- Chapter: 24

- Publisher: Cambridge University Press

- Editors: R.J. Sternberg, S.B. Kaufman

- University of Washington Seattle

- MGH Institute for Health Professions

- Boston College

multiple intelligences

multiple intelligences, theory of human intelligence first proposed by the psychologist Howard Gardner in his book Frames of Mind (1983). At its core, it is the proposition that individuals have the potential to develop a combination of eight separate intelligences, or spheres of intelligence; that proposition is grounded in Gardner’s assertion that an individual’s cognitive capacity cannot be represented adequately by a single measurement, such as an IQ score. Rather, because each person manifests varying levels of the separate intelligences, a unique cognitive profile would better represent individual strengths and weaknesses, according to this theory. It is important to note that, within this theory, every person possesses all intelligences to some degree.