Primary vs Secondary Research: Differences, Methods, Sources, and More

Primary vs Secondary Research – What’s the Difference?

In the search for knowledge and data to inform decisions, researchers and analysts rely on a blend of research sources. These sources are broadly categorized into primary and secondary research, each serving unique purposes and offering different insights into the subject matter at hand. But what exactly sets them apart?

Primary research is the process of gathering fresh data directly from its source. This approach offers real-time insights and information tailored to the objectives set by stakeholders. Examples include surveys, interviews, and observational studies.

Secondary research, on the other hand, involves the analysis of existing data, most often collected and presented by others. This type of research is invaluable for understanding broader trends, providing context, or validating hypotheses. Common sources include scholarly articles, industry reports, and data compilations.

The crux of the difference lies in the origin of the information: primary research yields firsthand data which can be tailored to a specific business question, whilst secondary research synthesizes what's already out there. In essence, primary research listens directly to the voice of the subject, whereas secondary research hears it secondhand.

When to Use Primary and Secondary Research

Selecting the appropriate research method is pivotal and should be aligned with your research objectives. The choice between primary and secondary research is not merely procedural but strategic, influencing the depth and breadth of insights you can uncover.

Primary research shines when you need up-to-date, specific information directly relevant to your study. It's the go-to for fresh insights, understanding consumer behavior, or testing new theories. Its bespoke nature makes it indispensable for tailoring questions to get the exact answers you need.

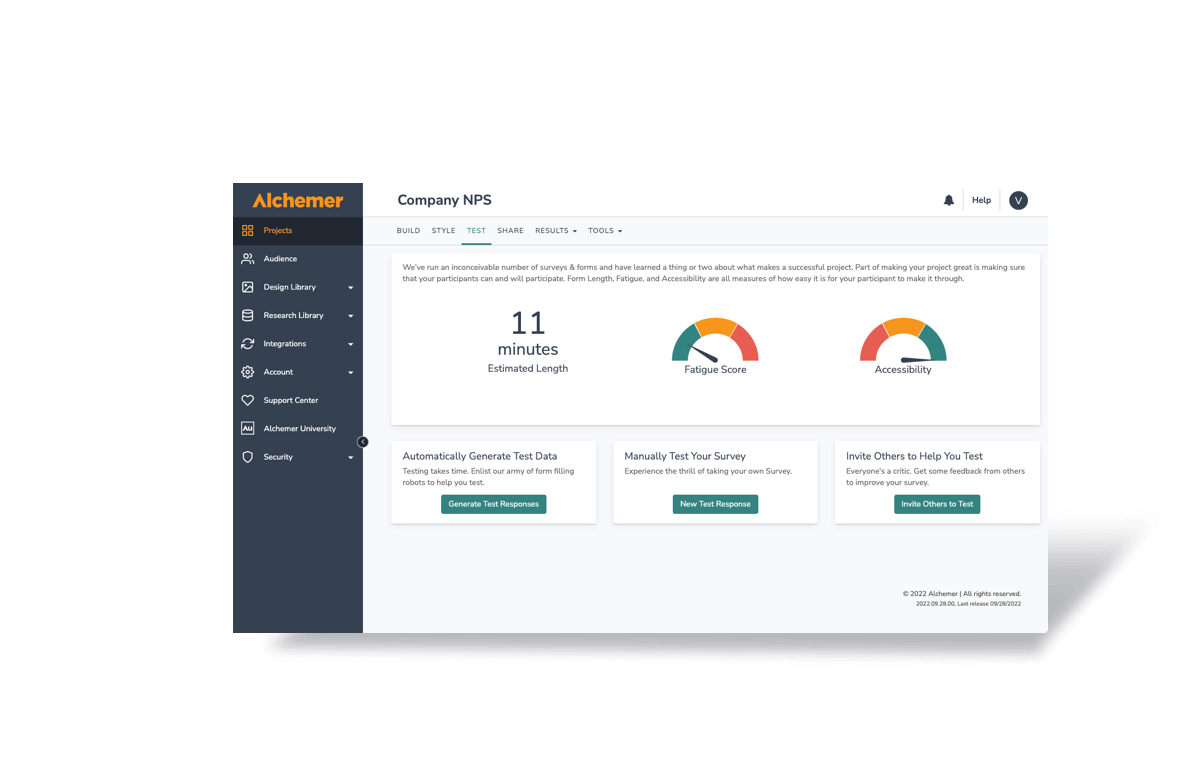

Secondary research is your first step into the research world. It helps set the stage by offering a broad understanding of the topic. Before diving into costly primary research, secondary research can validate the need for further investigation or provide a solid background to build upon. It's especially useful for identifying trends, benchmarking, and situating your research within the existing body of knowledge.

Combining both methods can significantly enhance your research. Starting with secondary research lays the groundwork and narrows the focus, whilst subsequent primary research delves deep into specific areas of interest, providing a well-rounded, comprehensive understanding of the topic.

Primary vs Secondary Research Methods

In the landscape of market research, the methodologies employed can significantly influence the insights and conclusions drawn. Let's delve deeper into the various methods underpinning both primary and secondary research, shedding light on their unique applications and the distinct insights they offer.

Primary Research Methods:

- Surveys: Surveys are a cornerstone of primary research, offering a quantitative approach to gathering data directly from the target audience. By employing structured questionnaires, researchers can collect a vast array of data ranging from customer preferences to behavioral patterns. This method is particularly valuable for collecting data from samples large enough to support statistically reliable conclusions that can inform decision-making and strategy development. Statistical techniques such as key drivers analysis, MaxDiff, or conjoint analysis can extract further insight from the collected data.

- One-on-One Interviews: Interviews provide a qualitative depth to primary research, allowing for a nuanced exploration of participants' attitudes, experiences, and motivations. Conducted either face-to-face or remotely, interviews enable researchers to delve into the complexities of human behavior, offering rich insights that surveys alone may not uncover. This method is instrumental in exploring new areas of research or obtaining detailed information on specific topics.

- Focus Groups: Focus groups bring together a small, diverse group of participants to discuss and provide feedback on a particular subject, product, or idea. This interactive setting fosters a dynamic exchange of ideas, revealing consumers' perceptions, experiences, and preferences. Focus groups are invaluable for testing concepts, exploring market trends, and understanding the factors that influence consumer decisions.

- Ethnographic Studies: Ethnographic studies involve the systematic watching, recording, and analysis of behaviors and events in their natural setting. This method offers an unobtrusive way to gather authentic data on how people interact with products, services, or environments, providing insights that can lead to more user-centered design and marketing strategies.

Secondary Research Methods:

- Literature Reviews: Literature reviews involve the comprehensive examination of existing research and publications on a given topic. This method enables researchers to synthesize findings from a range of sources, providing a broad understanding of what is already known about a subject and identifying gaps in current knowledge.

- Meta-Analysis: Meta-analysis is a statistical technique that combines the results of multiple studies to arrive at a comprehensive conclusion. This method is particularly useful in secondary research for aggregating findings across different studies, offering a more robust understanding of the evidence on a particular topic.

- Content Analysis: Content analysis is a method for systematically analyzing texts, media, or other content to quantify patterns, themes, or biases. This approach allows researchers to assess the presence of certain words, concepts, or sentiments within a body of work, providing insights into trends, representations, and societal norms. This can be performed across a range of sources including social media, customer forums, or review sites.

- Historical Research: Historical research involves the study of past events, trends, and behaviors through the examination of relevant documents and records. This method can provide context and understanding of current trends and inform future predictions, offering a unique perspective that enriches secondary research.

Each of these methods, whether primary or secondary, plays a crucial role in the mosaic of market research, offering distinct pathways to uncovering the insights necessary to drive informed decisions and strategies.
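To make the meta-analysis idea above more concrete, here is a minimal sketch of one common pooling approach, a fixed-effect inverse-variance average. The function name `pooled_effect` and the three study results are hypothetical and chosen only for illustration; a real meta-analysis would also check heterogeneity between studies and might call for a random-effects model instead.

```python
import math


def pooled_effect(effects, std_errors):
    """Fixed-effect (inverse-variance) pooled estimate and its standard error."""
    if len(effects) != len(std_errors) or not effects:
        raise ValueError("need one standard error per effect, and at least one study")
    # Each study is weighted by the inverse of its variance, so more
    # precise studies contribute more to the pooled estimate.
    weights = [1.0 / se ** 2 for se in std_errors]
    total = sum(weights)
    estimate = sum(w * e for w, e in zip(weights, effects)) / total
    return estimate, math.sqrt(1.0 / total)


# Hypothetical effect sizes and standard errors from three published studies.
effects = [0.30, 0.10, 0.20]
std_errors = [0.10, 0.20, 0.10]

estimate, se = pooled_effect(effects, std_errors)
print(f"pooled effect = {estimate:.3f}, 95% CI half-width = {1.96 * se:.3f}")
```

The pooled standard error is smaller than that of any single study, which is the sense in which combining studies gives a more robust picture of the evidence.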

Primary vs Secondary Sources in Research

Primary and secondary sources together form the backbone of the insight generation process. Used in tandem, they provide a solid stepping stone toward real insights. Let’s explore how each category serves its unique purpose in the research ecosystem.

Primary Research Data Sources

Primary research data sources are the lifeblood of firsthand research, providing raw, unfiltered insights directly from the source. These include:

- Customer Satisfaction Survey Results: Direct feedback from customers about their satisfaction with a product or service. This data is invaluable for identifying strengths to build on and areas for improvement and typically renews each month or quarter so that metrics can be tracked over time.

- NPS Rating Scores from Customers: Net Promoter Score (NPS) provides a straightforward metric to gauge customer loyalty and satisfaction. This quantitative data can reveal much about customer sentiment and the likelihood of referrals.

- Ad-hoc Surveys: Ad-hoc surveys can cover any topic that requires investigation. They are typically one-off surveys that zero in on one particular business objective. Ad-hoc projects are useful for situations such as investigating issues identified in other tracking surveys, new product development, ad testing, brand messaging, and many other kinds of projects.

- A Field Researcher’s Notes: Detailed observations from fieldwork can offer nuanced insights into user behaviors, interactions, and environmental factors that influence those interactions. These notes are a goldmine for understanding the context and complexities of user experiences.

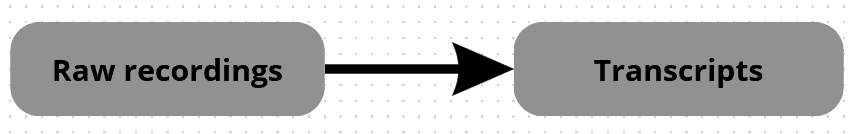

- Recordings Made During Focus Groups: Audio or video recordings of focus group discussions capture the dynamics of conversation, including reactions, emotions, and the interplay of ideas. Analyzing these recordings can uncover nuanced consumer attitudes and perceptions that might not be evident in survey data alone.

These primary data sources are characterized by their immediacy and specificity, offering a direct line to the subject of study. They enable researchers to gather data that is specifically tailored to their research objectives, providing a solid foundation for insightful analysis and strategic decision-making.
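As an illustration of how one of these sources turns into a metric, the sketch below computes a Net Promoter Score from raw ratings. It assumes the standard NPS convention: respondents rate their likelihood to recommend on a 0 to 10 scale, 9 and 10 count as promoters, 0 through 6 as detractors, and the score is the percentage of promoters minus the percentage of detractors. The function name and the sample ratings are hypothetical.

```python
def net_promoter_score(ratings):
    """Return NPS (from -100 to 100) for 0-10 'likelihood to recommend' ratings."""
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")
    promoters = sum(1 for r in ratings if r >= 9)    # ratings of 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)   # ratings of 0 through 6
    # Passives (7 or 8) count toward the total but toward neither group.
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical responses from ten customers.
ratings = [10, 9, 8, 7, 6, 3, 10, 9, 5, 8]
print(net_promoter_score(ratings))  # 4 promoters, 3 detractors -> 10.0
```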

Secondary Research Data Sources

In contrast, secondary research data sources offer a broader perspective, compiling and synthesizing information from various origins. These sources include:

- Books, Magazines, Scholarly Journals: Published works provide comprehensive overviews, detailed analyses, and theoretical frameworks that can inform research topics, offering depth and context that enriches primary data.

- Market Research Reports: These reports aggregate data and analyses on industry trends, consumer behavior, and market dynamics, providing a macro-level view that can guide primary research directions and validate findings.

- Government Reports: Official statistics and reports from government agencies offer authoritative data on a wide range of topics, from economic indicators to demographic trends, providing a reliable basis for secondary analysis.

- White Papers, Private Company Data: White papers and reports from businesses and consultancies offer insights into industry-specific research, best practices, and market analyses. These sources can be invaluable for understanding the competitive landscape and identifying emerging trends.

Secondary data sources serve as a compass, guiding researchers through the vast landscape of information to identify relevant trends, benchmark against existing data, and build upon the foundation of existing knowledge. They can significantly expedite the research process by leveraging the collective wisdom and research efforts of others.

By adeptly navigating both primary and secondary sources, researchers can construct a well-rounded research project that combines the depth of firsthand data with the breadth of existing knowledge. This holistic approach ensures a comprehensive understanding of the research topic, fostering informed decisions and strategic insights.

Examples of Primary and Secondary Research in Marketing

In the realm of marketing, both primary and secondary research methods play critical roles in understanding market dynamics, consumer behavior, and competitive landscapes. By comparing examples across both methodologies, we can appreciate their unique contributions to strategic decision-making.

Example 1: New Product Development

Primary Research: Direct Consumer Feedback through Surveys and Focus Groups

- Objective: To gauge consumer interest in a new product concept and identify preferred features.

- Process: Surveys distributed to a target demographic to collect quantitative data on consumer preferences, and focus groups conducted to dive deeper into consumer attitudes and desires.

- Insights: Direct insights into consumer needs, preferences for specific features, and willingness to pay. These insights help in refining product design and developing a targeted marketing strategy.

Secondary Research: Market Analysis Reports

- Objective: To understand the existing market landscape, including competitor products and market trends.

- Process: Analyzing published market analysis reports and industry studies to gather data on market size, growth trends, and competitive offerings.

- Insights: Provides a broader understanding of the market, helping to position the new product strategically against competitors and align it with current trends.

Example 2: Brand Positioning

Primary Research: Brand Perception Analysis through Surveys

- Objective: To understand how the brand is perceived by consumers and identify potential areas for repositioning.

- Process: Conducting surveys that ask consumers to describe the brand in their own words, rate it against various attributes, and compare it to competitors.

- Insights: Direct feedback on brand strengths and weaknesses from the consumer's perspective, offering actionable data for adjusting brand messaging and positioning.

Secondary Research: Social Media Sentiment Analysis

- Objective: To analyze public sentiment towards the brand and its competitors.

- Process: Utilizing software tools to analyze mentions, hashtags, and discussions related to the brand and its competitors across social media platforms.

- Insights: Offers an overview of public perception and emerging trends in consumer sentiment, which can validate findings from primary research or highlight areas needing further investigation.

Example 3: Market Expansion Strategy

Primary Research: Consumer Demand Studies in New Markets

- Objective: To assess demand and consumer preferences in a new geographic market.

- Process: Conducting surveys and interviews with potential consumers in the target market to understand their needs, preferences, and cultural nuances.

- Insights: Provides specific insights into the new market’s consumer behavior, preferences, and potential barriers to entry, guiding market entry strategies.

Secondary Research: Economic and Demographic Analysis

- Objective: To evaluate the economic viability and demographic appeal of the new market.

- Process: Reviewing existing economic reports, demographic data, and industry trends relevant to the target market.

- Insights: Offers a macro view of the market's potential, including economic conditions, demographic trends, and consumer spending patterns, which can complement insights gained from primary research.

By leveraging both primary and secondary research, marketers can form a comprehensive understanding of their market, consumers, and competitors, facilitating informed decision-making and strategic planning. Each method brings its strengths to the table, with primary research offering direct consumer insights and secondary research providing a broader context within which to interpret those insights.

What Are the Pros and Cons of Primary and Secondary Research?

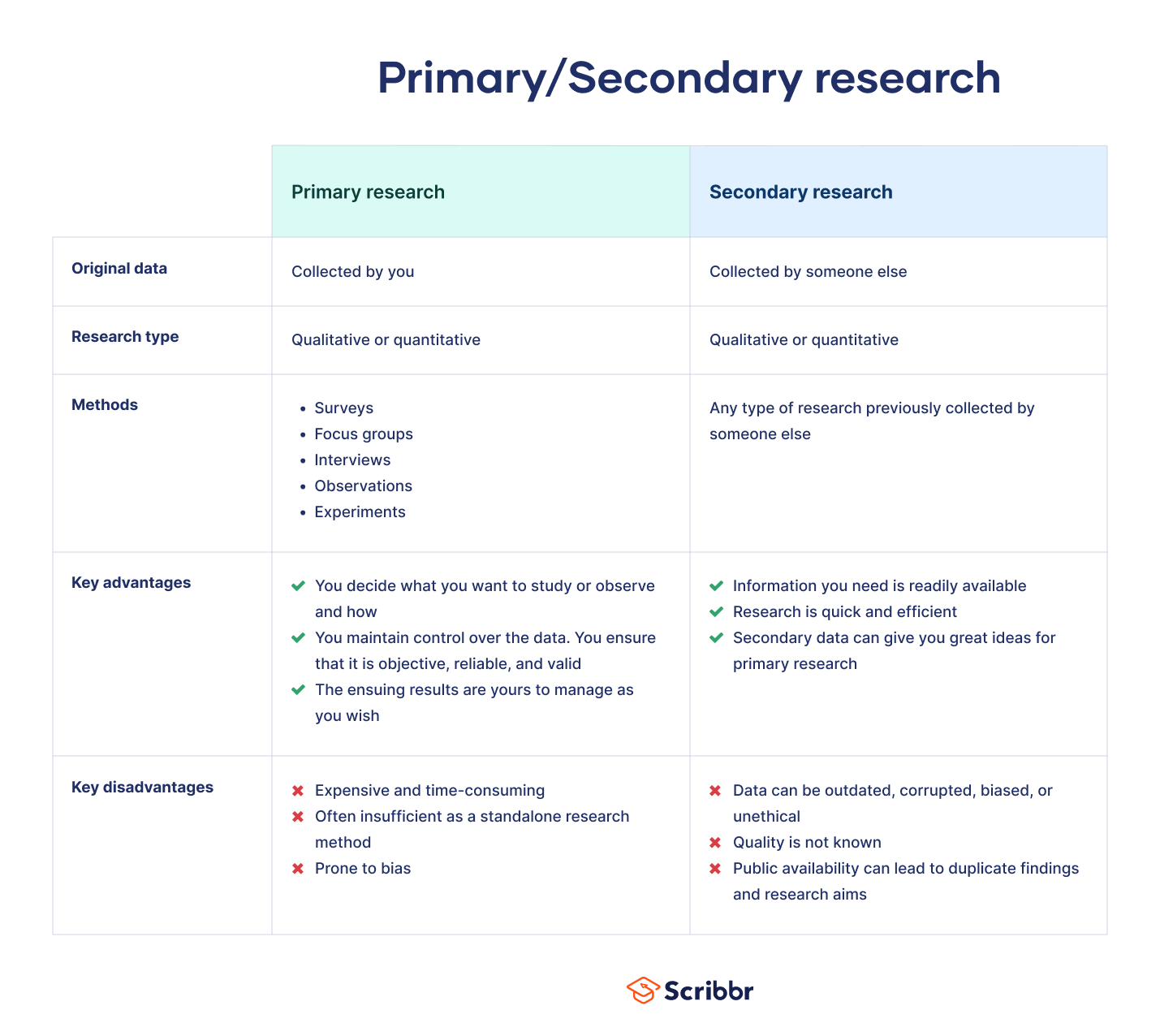

When it comes to market research, both primary and secondary research offer unique advantages and face certain limitations. Understanding these can help researchers and businesses make informed decisions on which approach to utilize for their specific needs. Below is a comparative table highlighting the pros and cons of each research type.

| | Primary Research | Secondary Research |
| --- | --- | --- |
| Pros | Tailored to specific research needs | Cost-effective as it utilizes existing data |
| | Offers recent and relevant data | Provides a broad overview, ideal for initial understanding |
| | Allows for direct engagement with respondents, offering deeper insights | Quick access to data, saving time on collection |
| | Greater control over data quality and methodology | Can cover a wide range of topics and historical data |
| Cons | Time-consuming and often more expensive due to data collection and analysis | May not be entirely relevant or specific to current research needs |
| | Requires significant resources for design, implementation, and analysis | Quality and accuracy of data can vary, depending on the source |
| | Risk of biased data if not properly designed and executed | Limited control over data quality and collection methodology |
| | May be challenging to reach a representative sample for niche markets | Existing data may not be as current, impacting its applicability |

Navigating the Pros and Cons

- Balance Your Research Needs: Consider starting with secondary research to gain a broad understanding of the subject matter, then delve into primary research for specific, targeted insights that are tailored to your precise needs.

- Resource Allocation: Evaluate your budget, time, and resource availability. Primary research can offer more specific and actionable data but requires more resources. Secondary research is more accessible but may lack the specificity or recency you need.

- Quality and Relevance: Assess the quality and relevance of available secondary sources before deciding if primary research is necessary. Sometimes, the existing data might suffice, especially for preliminary market understanding or trend analysis.

- Combining Both for Comprehensive Insights: Often, the most effective research strategy involves a combination of both primary and secondary research. This approach allows for a more comprehensive understanding of the market, leveraging the broad perspective provided by secondary sources and the depth and specificity of primary data.

Sawtooth Software

What is Secondary Research? | Definition, Types, & Examples

Published on January 20, 2023 by Tegan George. Revised on January 12, 2024.

Secondary research is a research method that uses data that was collected by someone else. In other words, whenever you conduct research using data that already exists, you are conducting secondary research. On the other hand, any type of research that you undertake yourself is called primary research.

Secondary research can be qualitative or quantitative in nature. It often uses data gathered from published peer-reviewed papers, meta-analyses, or government or private sector databases and datasets.

Secondary research is a very common research method, used in lieu of collecting your own primary data. It is often used in research designs or as a way to start your research process if you plan to conduct primary research later on.

Since it is often inexpensive or free to access, secondary research is a low-stakes way to determine if further primary research is needed, as gaps in secondary research are a strong indication that primary research is necessary. For this reason, while secondary research can theoretically be exploratory or explanatory in nature, it is usually explanatory: aiming to explain the causes and consequences of a well-defined problem.

Secondary research can take many forms, but the most common types are:

- Statistical analysis

- Literature reviews

- Case studies

- Content analysis

There is ample data available online from a variety of sources, often in the form of datasets. These datasets are often open-source or downloadable at a low cost, and are ideal for conducting statistical analyses such as hypothesis testing or regression analysis.

Credible sources for existing data include:

- The government

- Government agencies

- Non-governmental organizations

- Educational institutions

- Businesses or consultancies

- Libraries or archives

- Newspapers, academic journals, or magazines
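As a rough sketch of the kind of statistical analysis mentioned above, the example below fits a simple least-squares regression line to an existing dataset. The CSV content, its column names (`median_income`, `avg_basket`), and the helper `fit_line` are all hypothetical stand-ins for a dataset you might download from one of these sources; the numbers are invented for illustration.

```python
import csv
import io

# Hypothetical extract from a published dataset; the column names are assumptions.
CSV_TEXT = """region,median_income,avg_basket
north,42,31.0
south,38,28.5
east,51,36.0
west,47,34.5
"""


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Parse the existing data rather than collecting anything new.
rows = list(csv.DictReader(io.StringIO(CSV_TEXT)))
incomes = [float(r["median_income"]) for r in rows]
baskets = [float(r["avg_basket"]) for r in rows]

slope, intercept = fit_line(incomes, baskets)
print(f"avg_basket ~ {intercept:.2f} + {slope:.3f} * median_income")
```

With a real dataset you would also want to inspect the residuals and sample size before reading much into the fitted slope.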

A literature review is a survey of preexisting scholarly sources on your topic. It provides an overview of current knowledge, allowing you to identify relevant themes, debates, and gaps in the research you analyze. You can later apply these to your own work, or use them as a jumping-off point to conduct primary research of your own.

Structured much like a regular academic paper (with a clear introduction, body, and conclusion), a literature review is a great way to evaluate the current state of research and demonstrate your knowledge of the scholarly debates around your topic.

A case study is a detailed study of a specific subject. It is usually qualitative in nature and can focus on a person, group, place, event, organization, or phenomenon. A case study is a great way to utilize existing research to gain concrete, contextual, and in-depth knowledge about your real-world subject.

You can choose to focus on just one complex case, exploring a single subject in great detail, or examine multiple cases if you’d prefer to compare different aspects of your topic. Preexisting interviews, observational studies, or other sources of primary data make for great case studies.

Content analysis is a research method that studies patterns in recorded communication by utilizing existing texts. It can be either quantitative or qualitative in nature, depending on whether you choose to analyze countable or measurable patterns, or more interpretive ones. Content analysis is popular in communication studies, but it is also widely used in historical analysis, anthropology, and psychology to make more semantic qualitative inferences.
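For the quantitative flavor of content analysis described above, a minimal sketch might simply count how often terms of interest occur in a set of existing texts. The function `term_frequencies`, the review snippets, and the chosen terms are hypothetical; real projects usually add a coding scheme, stemming, or manual review on top of raw counts.

```python
import re
from collections import Counter


def term_frequencies(documents, terms):
    """Count how often each term of interest appears across the documents."""
    wanted = {t.lower() for t in terms}
    counts = Counter()
    for doc in documents:
        # Lowercase and split into simple word tokens before matching.
        for token in re.findall(r"[a-z']+", doc.lower()):
            if token in wanted:
                counts[token] += 1
    return counts


# Hypothetical customer reviews collected by someone else.
reviews = [
    "Delivery was slow, but support was friendly.",
    "Slow checkout and slow delivery.",
    "Friendly staff, fast delivery!",
]

counts = term_frequencies(reviews, ["slow", "fast", "friendly"])
for term, n in counts.most_common():
    print(term, n)
```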

Secondary research is a broad research approach that can be pursued in many different ways, depending on your research topic.

Secondary research is a very common research approach, but has distinct advantages and disadvantages.

Advantages of secondary research

Advantages include:

- Secondary data is very easy to source and readily available.

- It is also often free or accessible through your educational institution’s library or network, making it much cheaper to conduct than primary research.

- As you are relying on research that already exists, conducting secondary research is much less time consuming than primary research. Since your timeline is so much shorter, your research can be ready to publish sooner.

- Using data from others allows you to show reproducibility and replicability, bolstering prior research and situating your own work within your field.

Disadvantages of secondary research

Disadvantages include:

- Ease of access does not signify credibility. It’s important to be aware that secondary research is not always reliable, and can often be out of date. It’s critical to analyze any data you’re thinking of using prior to getting started, using a method like the CRAAP test.

- Secondary research often relies on primary research already conducted. If this original research is biased in any way, those research biases could creep into the secondary results.

- Many researchers using the same secondary research to form similar conclusions can also take away from the uniqueness and reliability of your research. Many datasets become “kitchen-sink” models, where too many variables are added in an attempt to draw increasingly niche conclusions from overused data. Data cleansing may be necessary to test the quality of the research.

A systematic review is secondary research because it uses existing research. You don’t collect new data yourself.

The research methods you use depend on the type of data you need to answer your research question.

- If you want to measure something or test a hypothesis, use quantitative methods. If you want to explore ideas, thoughts and meanings, use qualitative methods.

- If you want to analyze a large amount of readily-available data, use secondary data. If you want data specific to your purposes with control over how it is generated, collect primary data.

- If you want to establish cause-and-effect relationships between variables, use experimental methods. If you want to understand the characteristics of a research subject, use descriptive methods.

Quantitative research deals with numbers and statistics, while qualitative research deals with words and meanings.

Quantitative methods allow you to systematically measure variables and test hypotheses. Qualitative methods allow you to explore concepts and experiences in more detail.

George, T. (2024, January 12). What is Secondary Research? | Definition, Types, & Examples. Scribbr. Retrieved June 24, 2024, from https://www.scribbr.com/methodology/secondary-research/

Primary Research vs Secondary Research: A Comparative Analysis

Understand the differences between primary research vs secondary research. Learn how they can be used to generate valuable insights.

Primary research and secondary research are two fundamental approaches used in research studies to gather information and explore topics of interest. Both primary and secondary research offer unique advantages and have their own set of considerations, making them valuable tools for researchers in different contexts.

Understanding the distinctions between primary and secondary research is crucial for researchers to make informed decisions about the most suitable approach for their study objectives and available resources.

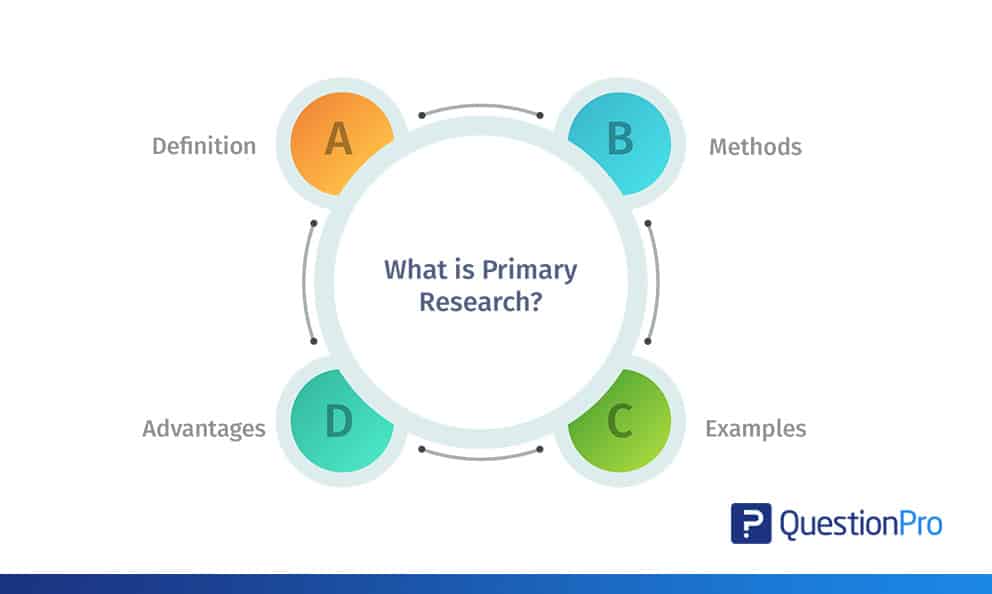

What is Primary Research?

Primary research refers to the collection and analysis of data directly from original sources. It involves gathering information firsthand to address specific research objectives and generate new insights. This research method uses surveys, interviews, observations, experiments, or focus groups to obtain data that is relevant to the research question at hand. By engaging directly with subjects or sources, primary research provides firsthand and up-to-date information, allowing researchers to have control over the data collection process and adjust it to their specific needs.

Types of Primary Research

There are several types of primary research methods commonly used in various fields:

Surveys

Surveys are the systematic collection of data through questionnaires or interviews, aiming to gather information from a large number of participants. Surveys can be conducted in person, over the phone, through mail, or online.

Interviews

Interviews entail direct one-on-one or group interactions with individuals or key informants to obtain detailed information about their experiences, opinions, or expertise. Interviews can be structured (using predetermined questions) or unstructured (allowing for open-ended discussions).

Observations

Observational research carefully observes and documents behaviors, interactions, or phenomena in real-life settings. It can be done in a participant or non-participant manner, depending on the level of involvement of the researcher.

Data analysis

Examining and interpreting collected data, data analysis uncovers patterns, trends, and insights, providing a deeper understanding of the research topic. It enables drawing meaningful conclusions for decision-making and guides further research.

Focus groups

Focus groups are facilitated group discussions with a small number of participants who share their opinions, attitudes, and experiences on a specific topic. This method allows for interactive and in-depth exploration of a subject.

Benefits of Primary Research

Original and specific data: Primary research provides first hand data directly relevant to the research objectives, ensuring its freshness and specificity to the research context.

Control over data collection: Researchers have control over the design, implementation, and data collection process, allowing them to adapt the research methods and instruments to suit their needs.

Depth of understanding: Primary research methods, such as interviews and focus groups, enable researchers to gain a deep understanding of participants’ perspectives, experiences, and motivations.

Validity and reliability: By collecting data directly from original sources, primary research can enhance the validity and reliability of the findings, reducing potential biases associated with using secondary or existing data.

Challenges of Primary Research

Time and Resource-intensive: Primary research requires careful planning, data collection, analysis, and interpretation. It may require recruiting participants, conducting interviews or surveys, and analyzing data, all of which require time and resources.

Sampling limitations: Primary research often relies on sampling techniques to select participants. Ensuring a representative sample that accurately reflects the target population can be challenging, and sampling biases may affect the generalizability of the findings.

Subjectivity: The involvement of researchers in primary research methods, such as interviews or observations, introduces the potential for subjective interpretations or biases that can influence the data collection and analysis process.

Limited generalizability: Findings from primary research may have limited generalizability due to the specific characteristics of the sample or context. It is essential to acknowledge the scope and limitations of the findings and avoid making broad generalizations beyond the studied sample or context.
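One simple way to probe the sampling concern above is to compare the make-up of a sample with known population shares. The sketch below is only illustrative: the age groups, population shares, respondent counts, and the function name `representation_gaps` are all hypothetical, and a large gap is a prompt to consider quotas or weighting, not a formal test of bias.

```python
def representation_gaps(population_shares, sample_counts):
    """Sample share minus population share per group, in percentage points."""
    total = sum(sample_counts.values())
    if total == 0:
        raise ValueError("sample must contain at least one respondent")
    return {
        group: 100.0 * sample_counts.get(group, 0) / total - 100.0 * share
        for group, share in population_shares.items()
    }


# Hypothetical population shares (for example, from census data) and survey counts.
population = {"18-34": 0.30, "35-54": 0.40, "55+": 0.30}
sample = {"18-34": 50, "35-54": 40, "55+": 10}

for group, gap in representation_gaps(population, sample).items():
    print(f"{group}: {gap:+.1f} percentage points")
```

A positive gap means the group is over-represented in the sample, and a negative gap means it is under-represented relative to the population.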

What is Secondary Research?

Secondary research is a method of research that relies on data that is already available, rather than gathering new data through primary research methods. It involves reviewing and analyzing sources such as published studies, reports, articles, books, government databases, and online resources to extract relevant information for a specific research objective.

Sources of Secondary Research

Published studies and academic journals

Researchers can review published studies and academic journals to gather information, data, and findings related to their research topic. These sources often provide comprehensive and in-depth analyses of specific subjects.

Reports and white papers

Reports and white papers produced by research organizations, government agencies, and industry associations provide valuable data and insights on specific topics or sectors. These documents often contain statistical data, market research, trends, and expert opinions.

Books and reference materials

Books and reference materials written by experts in a particular field can offer comprehensive overviews, theories, and historical perspectives that contribute to secondary research.

Online databases

Online databases, such as academic libraries, research repositories, and specialized platforms, provide access to a vast array of published research articles, theses, dissertations, and conference proceedings.

Benefits of Secondary Research

Time and Cost-effectiveness: Secondary research saves time and resources since the data and information already exist and are readily accessible. Researchers can utilize existing resources instead of conducting time-consuming primary research.

Wide range of data: Secondary research provides access to a wide range of data sources, including large-scale surveys, census data, and comprehensive reports. This allows researchers to explore diverse perspectives and make comparisons across different studies.

Comparative analyses: Researchers can compare findings from different studies or datasets, allowing for cross-referencing and verification of results. This enhances the robustness and validity of research outcomes.

Ethical considerations: Secondary research does not involve direct interaction with participants, which reduces ethical concerns related to privacy, informed consent, and confidentiality.

Challenges of Secondary Research

Data availability and quality: The availability and quality of secondary data can vary. Researchers must critically evaluate the credibility, reliability, and relevance of the sources to ensure the accuracy of the information used in their research.

Limited control over data: Researchers have limited control over the design, collection methods, and variables included in the secondary data. The data may not perfectly align with the research objectives, requiring careful selection and analysis.

Potential bias and outdated information: Secondary data may contain inherent biases or limitations introduced by the original researchers. Additionally, the data may become outdated, and newer information or developments may not be captured.

Lack of customization: Since secondary data is collected for various purposes, it may not perfectly align with the specific research needs. Researchers may encounter limitations in terms of variables, definitions, or granularity of data.

Comparing Primary and Secondary Research

Primary research vs secondary research.

| Primary research | Secondary research |
| --- | --- |
| Data collection directly from original sources. | Utilizes existing data and information. |
| Involves gathering new and firsthand data. | Relies on pre-existing data collected by others. |
| Time-consuming and resource-intensive. | Time-efficient and cost-effective. |
| Provides unique insights specific to the research objective. | Offers broader context and generalizable findings. |
| Can be tailored to specific research questions. | Covers a wide range of topics and research areas. |
| Enables direct interaction with participants or subjects. | Does not have direct contact with participants. |
| Offers flexibility in study design and methodology. | Limited control over data quality and collection methods. |
| Higher control over data reliability and validity. | Relies on the quality and credibility of the selected sources. |
| Allows for in-depth exploration of research questions. | Supports hypothesis testing and comparative analysis. |
| Requires ethical considerations for participant involvement. | Ethics demand proper citation and adherence to copyright laws. |

Examples of Primary and Secondary Research

Examples of Primary Research

- Conducting a survey to collect data on customer satisfaction and preferences for a new product directly from the target audience.

- Designing and conducting an experiment to test the effectiveness of a new teaching method by comparing the learning outcomes of students in different groups.

- Observing and documenting the behavior of a specific animal species in its natural habitat to gather data for ecological research.

- Organizing a focus group with potential consumers to gather insights and feedback on a new advertising campaign.

- Conducting interviews with healthcare professionals to understand their experiences and perspectives on a specific medical treatment.

Examples of Secondary Research

- Accessing a market research report to gather information on consumer trends, market size, and competitor analysis in the smartphone industry.

- Using existing government data on unemployment rates to analyze the impact of economic policies on employment patterns.

- Examining historical records and letters to understand the political climate and social conditions during a particular historical event.

- Conducting a meta-analysis of published studies on the effectiveness of a specific medication to assess its overall efficacy and safety.

How to Use Primary and Secondary Research Together

Having explored the distinction between primary and secondary research, the next consideration is how to integrate the two. Combining them produces a comprehensive and well-informed research methodology. Secondary research gives researchers a broader understanding of the subject, allowing them to identify gaps in knowledge and refine their research questions accordingly.

Primary research methods, such as surveys or interviews, can then be employed to collect new data that directly address these research questions. The findings from primary research can be compared and validated against the existing knowledge obtained through secondary research. By combining the insights from both types of research, researchers can fill knowledge gaps, strengthen the reliability of their findings through triangulation, and draw meaningful conclusions that contribute to the overall understanding of the subject matter.

Ethical Considerations for Primary and Secondary Research

In primary research, researchers must obtain informed consent from participants, ensuring they are fully aware of the study’s purpose, procedures, and any potential risks or benefits involved. Confidentiality and anonymity should be maintained to safeguard participants’ privacy. Researchers should also ensure that the data collection methods and research design are conducted in an ethical manner, adhering to ethical guidelines and standards set by relevant institutional review boards or ethics committees.

In secondary research, ethical considerations primarily revolve around the proper and responsible use of existing data sources. Researchers should respect copyright laws and intellectual property rights when accessing and using secondary data. They should also critically evaluate the credibility and reliability of the sources to ensure the validity of the data used in their research. Proper citation and acknowledgment of the original sources are essential to maintain academic integrity and avoid plagiarism.



Afr J Emerg Med, v.10(Suppl 2); 2020

Acquiring data in medical research: A research primer for low- and middle-income countries

Vicken Totten

a Kaweah Delta Health Care District (KDHCD), KDHCD Department of Emergency Medicine, Visalia, CA, USA

Erin L. Simon

b Cleveland Clinic Akron General, Department of Emergency Medicine, Akron, OH, USA

Mohammad Jalili

c Department of Emergency Medicine, Tehran University of Medical Sciences, Tehran, Iran

Hendry R. Sawe

d Muhimbili University of Health and Allied Sciences, Dar es Salaam, Tanzania

Without data, there is no new knowledge generated. There may be interesting speculation, new paradigms or theories, but without data gathered from the universe, as representative of the truth in the universe as possible, there will be no new knowledge. Therefore, it is important to become excellent at collecting, collating and correctly interpreting data. Pre-existing and new data sources are discussed; variables are discussed, and sampling methods are covered. The importance of a detailed protocol and research manual is emphasized. Data collectors and data collection forms, both electronic and paper-based, are discussed. Subject privacy must be balanced against appropriate data retention.

African relevance

- To get good quality information you first need good quality data.

- Data collection systematically and reproducibly gathers and measures variables to answer research questions.

- Good data is a result of a well thought out study protocol.

The International Federation for Emergency Medicine global health research primer

This paper forms part 9 of a series of ‘how to’ papers, commissioned by the International Federation for Emergency Medicine. It describes data sources, variables, sampling methods, data collection and the value of a clear data protocol. We have also included additional tips and pitfalls that are relevant to emergency medicine researchers.

Data collection is the process of systematically and reproducibly gathering and measuring variables in order to answer research questions, test hypotheses, or evaluate outcomes.

Data is not information. To get good quality information you first need good quality data, then you must curate, analyse and interpret it. Data is comprised of variables. Data collection begins with determining which variables are required, followed by the selection of a sample from a certain population. After that, a data collection tool is used to collect the variables from the selected sample, which is then converted into a data spreadsheet or database. The analysis is done on the database.

Sometimes you gather data yourself. Sometimes you analyse data others collected for different purposes. Ideally, you collect a universal sample, that is, 100%. In real life, you get a limited sample. Preferably, it will be a truly random sample with enough power to answer your question. Unfortunately, you may have to settle for consecutive or convenience sampling. Ideally, your data collectors would be blinded to the outcome of interest, to prevent bias. However, real life is full of biases. Imperfect data may be better than no data; you can often get useful information from imperfect data. Remember the enemy of good is perfect.

Why is good data important?

Acquiring data is the most important step in a research study. The best design with bad data is useless. Bad design produces bad data. The most sophisticated analysis cannot be performed without data; analysing bad data produces erroneous results. Analysis can never be better than the quality of the data on which it was run. Good data has integrity. Data integrity is paramount to learning “Truth in the Universe”. Good data is as complete and as clean as you can reasonably make it. Clean data ‘has integrity’ when the variables capture as much relevant information as possible, and in the same way for each subject.

Some information is very hard to get. You may have to use proxy variables for what you really want to know. A proxy variable is a variable that is not in itself directly relevant, but that serves in place of an unobservable or immeasurable variable. In order for a variable to be a good proxy, it must have a close correlation, not necessarily linear, with the variable of interest. For example, a medication list might serve as a proxy for the presence of a specific illness.

Consequences of bad data include an inability to answer the research question; inability to replicate or validate the study; distorted findings and wasted resources; compromised knowledge and even harm to subjects.

Ensure data quality

Good data is a result of a well-thought-out study protocol, which is the written plan for the study. Good planning is the most cost-effective way to ensure data integrity. Good planning is documented by a thorough and detailed protocol, with a comprehensive procedures manual. Poorly written manuals risk incomplete or inconsistent collection of data, in other words, ‘bad data’. The manual should include rigorous, step-by-step instructions on how to administer tests or collect the data. It should cover the ‘who’ (the subject and the researcher); the ‘when’ (the timing), the ‘how’ (methods), and the ‘what’ (a complete listing of variables to be collected). There should also be an identified mechanism to document any changes in procedures that may evolve over the course of the investigation. The study design should be reproducible: so that the protocol can be followed by any other researcher. All data needs to be gathered in the same way. Test (trial-run) your manual before you start your study. If data is collected by several people, make sure there is a sufficient degree of inter-rater reliability.
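One common way to put a number on inter-rater reliability for a categorical variable is Cohen's kappa, which corrects the raw agreement between two data collectors for the agreement expected by chance. The sketch below is illustrative only: the choice of kappa, the function name `cohens_kappa` and the input layout (one code per subject, in the same order for both raters) are assumptions, not something the protocol guidance above prescribes.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who coded the same subjects in the same order."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same, non-empty set of subjects")
    n = len(rater_a)
    # Observed agreement: share of subjects coded identically by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions, summed over categories.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one identical category for every subject
    return (observed - expected) / (1 - expected)
```

A value of 1 means perfect agreement and 0 means agreement no better than chance. What counts as a "sufficient degree" depends on the variable and the field, so any threshold should be set in the protocol before data collection starts.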

To get good data, your sample needs to be representative of the population. For others to apply your results, you need to characterize your population, so others can decide if your conclusions are relevant to their population (see Sampling section, below).

Data integrity demands you supervise your study, making sure it is complete and accurate. You may wish to do interim analyses. Keep copies! Keep both the raw data and the data sheets, for the length of time required by law or by Good Research Practice in your country. This will protect you from accusations of falsification of data.

In real life, you may have to deal with any number of sampling and data collection biases. Some of these biases can be measured statistically. Regardless, all the limitations you can think of should be written in your limitations section. The best design you can practically use gives you the best data you can reasonably get. Remember, “you cannot fix with statistics what you fouled up by design.”

Before you acquire your first datum, consider: Do you have a developed protocol and a research manual? Have you sought Ethics Board approval? Do you have an informed consent? Do you have a plan to protect the subject's confidentiality? Do you have a plan for data analysis? Where will you safely store and protect the data? If you have collaborators, have you established, in writing, who owns the data, and who has the right to analyse and publish it?

Types of data: qualitative vs. quantitative data

Numerical data is generally called quantitative; if in words or sentences, it is qualitative. Medical research historically has focused on quantitative methods. Generally, quantitative research is cheaper, easier to gather and easier to analyse. For purposes of this chapter, we will focus on quantitative research.

Qualitative research is about words, sentences, sounds, feeling, emotions, colours and other elements that are non-quantifiable. It requires human intellect to extract themes from the sentences, evaluate the fit of the data to the themes, and to draw the implications of the themes. Primary sources for qualitative data include open ended surveys, interviews, and public meetings. Qualitative research is more common in politics and the social sciences, and will not be further discussed here, except to refer you to other sources.

Quantitative research can include questionnaires with closed-ended questions (open ended questions belong in qualitative research). The data is transformed into numbers and will be analysed with parametric and non-parametric statistical tests. In general, you will derive a mean, mode and median; you will calculate probabilities, make correlation and regressions in order to draw conclusions.
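As a small illustration of the descriptive step mentioned above, the mean, median and mode can be derived with Python's standard `statistics` module. This is a minimal sketch; the helper name `describe` is hypothetical, and a real analysis would normally use a dedicated statistics package.

```python
import statistics

def describe(values):
    """Return the mean, median and mode of a list of numeric observations."""
    if not values:
        raise ValueError("need at least one observation")
    return {
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        # Most frequent value; on a tie, Python 3.8+ returns the first one encountered.
        "mode": statistics.mode(values),
    }
```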

Sources of data: primary vs secondary data

To answer a research question, there are many potential sources of data. Two main categories are primary data and secondary data. Primary data is newly collected data; it can be gathered directly from people's responses (surveys), or from their biometrics (blood pressure, weight, blood tests, etc.). It is still considered primary data if you gather data that was collected for other (medical) purposes by extracting the data from medical records. Medical records can be a rich source of data, but data extraction by hand takes a lot of time.

Secondary data already exists; it has already been published or compiled. There are extant local, regional, national and international databases such as Trauma Registries, Disease-specific Registries, Public Health Data, government statistics, and World Health Organization data. Locally, your hospital or clinic may already keep statistics on any number of topics. Combining information from disparate databases may sometimes yield interesting results. For example, in the US, the Centers for Disease Control and Prevention keeps databases of reportable diseases, accidents, causes of death and much more. The US Geological Survey reports the average elevation of American cities. Combining the two databases revealed that, even when gun ownership, drug and alcohol use were statistically controlled for, there was a linear correlation between altitude and suicide rates [ 2 ]. Reno et al. reviewed the existing medical literature (also secondary data), confirmed the correlation, and concluded that the mechanisms have yet to be elucidated [ 3 ].

Collecting good data is often the hardest part of research. Ideally, you would want to collect 100% of the data (universal sampling to reflect target population). One example would be ‘all elderly persons with gout’. In real life, you have access to only a subset of the target population (the accessible population). Further, in your study you will be limited to a subset of the accessible population (the study population). Again, in the ideal world, that limited sample would be truly random, and have enough power to answer your question. You can find free random number generators online. In real life, you may have to settle for consecutive or convenience sampling. Of the two, consecutive sampling has less bias. Sometimes it is important to balance your groups. You may have 2 or 3 treatments (or interventions) and want to have an equal number of each kind. So, you create blocks whose size is a small multiple of the number of treatments, and you randomize within each block. Each time a block is filled, you are assured that you have the right balance of subjects. Blocks are often in groups of six, eight or 12. This is called balanced allocation.
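The balanced allocation described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the function name `block_randomize`, the `block_multiple` parameter and the optional `seed` are hypothetical, and a real trial would generate and conceal the allocation list according to its own protocol.

```python
import random

def block_randomize(n_subjects, treatments, block_multiple=2, seed=None):
    """Assign subjects to treatments in shuffled blocks that are balanced by design."""
    rng = random.Random(seed)
    # One block holds each treatment `block_multiple` times,
    # e.g. 3 treatments x 2 gives a block of six.
    block = list(treatments) * block_multiple
    allocation = []
    while len(allocation) < n_subjects:
        shuffled = block[:]
        rng.shuffle(shuffled)  # random order within the block
        allocation.extend(shuffled)
    # The last block may be cut short if n_subjects is not a multiple of the block size.
    return allocation[:n_subjects]
```

Every completed block contains each treatment the same number of times, so the groups stay balanced even if enrolment stops early. Passing a `seed` makes the list reproducible for audit.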

If you must get only a convenience sample – for example because you only have a single data gatherer and can get data only when that person is available – you should, at a minimum, try to get some simple demographics from times when the data gatherer is not available, to see if subjects at that other time are systematically different. For example, if you are looking at injuries, people who are injured when drinking on a Friday night might be systematically different from people who are injured on their way to work on a Monday morning. If you can only collect injury data in the morning, your results will be biased.

Variables are the bits of data you collect. They change from subject to subject and describe the subject numerically. Age (or year of birth); gender; ethnic group or tribe; and geographic location are commonly called simple demographic variables and should be collected and reported for most populations.

Continuous variables are quantified on a continuous scale, such as body weight. Discrete variables use a scale whose units are limited to integers (such as the number of cigarettes smoked per day). Discrete variables with a large number of possible values can resemble continuous variables in statistical analysis and be treated as equivalent for the purpose of designing measurements. A good general rule is to prefer continuous variables, because the additional information they contain improves statistical efficiency (more study power and smaller sample size).

Categorical variables are those not suitable for quantification. They are measured by classifying them into categories. If there are two possible values (dead or alive), they are dichotomous. If there are more than two categories, they are polytomous and can be further classified according to the type of information they provide.

Research variables are either predictor (independent) or outcome (dependent) variables. The predictor variables might include such things as “Diabetes, Yes/No”, “Age over 65 — Yes/No”, and “diagnosis of hypertension” (again, Yes/No). The respective outcome might be “lower limb amputation” or “death within 10 years”. Your question might have been, “How much additional risk of amputation does a diagnosis of hypertension add in a person with diabetes?”
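One simple way to relate a yes/no predictor to a yes/no outcome is the risk ratio: the proportion with the outcome among subjects who have the predictor, divided by the proportion among those who do not. The sketch below is an illustration only. The function names are hypothetical, the risk ratio is just one of several possible measures, and it does not adjust for other predictors.

```python
def risk(events, total):
    """Proportion of subjects in a group who had the outcome."""
    if total <= 0:
        raise ValueError("group must contain at least one subject")
    return events / total

def risk_ratio(exposed_events, exposed_total, unexposed_events, unexposed_total):
    """Risk in the group with the predictor divided by risk in the group without it."""
    baseline = risk(unexposed_events, unexposed_total)
    if baseline == 0:
        raise ValueError("risk ratio is undefined when the unexposed group has no events")
    return risk(exposed_events, exposed_total) / baseline
```

A ratio above 1 suggests the predictor is associated with higher risk of the outcome, and a ratio of 1 suggests no association in this sample.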

Before analysis, variables are coded into numbers and entered into a database. Your Research Manual should describe how to code all the data. The easiest variables for computers to analyse are binary: Yes/No; True/False; Male/Female; Alive/Dead; 65 or over / under 65, etc. Coding them as “0” and “1” makes analysing the data much easier (“1” versus “2” makes it harder). The next easiest are ordinal integers: 1, 2, 3, etc. You might create ordinal numbers from categories (0–9; 10–19; 20–29 years of age, etc.), but in order to be ordinal, they require an obvious sequence. Categorical variables do not have an intrinsic order. “Green”, “Brown” and “Orange” are non-ordinal, categorical variables. It is possible to transform categorical variables into binary variables, by making columns where only one of the answers is marked with a “1” (if that variable is present) and all the others are marked “0”. The form of the variables and their distribution will determine the type of statistical analysis possible. Data which must be transformed or cleaned is more prone to error in the cleaning or transformation process.
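The three coding schemes described above (binary 0/1, ordinal bands, and one indicator column per category) can be sketched as follows. The function names are hypothetical and the category list is taken from the example in the text; your own Research Manual would define the actual codes.

```python
def code_binary(value, one_value):
    """Code a binary variable as 1 when it equals `one_value`, otherwise 0."""
    return 1 if value == one_value else 0

def age_band(age, width=10):
    """Ordinal code for age bands: 0 for 0-9 years, 1 for 10-19, 2 for 20-29, and so on."""
    if age < 0:
        raise ValueError("age cannot be negative")
    return age // width

def one_hot(value, categories):
    """Turn one categorical value into 0/1 indicator columns, one per category."""
    if value not in categories:
        raise ValueError(f"unexpected category: {value!r}")
    return {category: int(category == value) for category in categories}
```

For instance, `one_hot("Brown", ["Green", "Brown", "Orange"])` marks only the `Brown` column with 1. Rejecting unexpected categories, rather than silently coding them as all zeros, surfaces data entry errors early.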

There are alternative ways to get similar information. For example, if you wanted to know the HIV status of each of your subjects, you could either test each one, or you could ask them. The tests cost more; however, they are less likely to give biased results. How you gather each variable will depend on your resources and will inform the limitations of your study.

Precision of a variable is the degree to which it is reproducible with nearly the same value each time it is measured. Precision has a very important influence on the power of a study. The more precise a measurement, the greater the statistical power of a given sample size to estimate mean values and test your hypotheses. In order to minimize random error in your data, and increase the precision of measurements, you should standardize your measurement methods; train your observers; refine any instruments you may use (such as calibrating instruments); automate instruments when possible (automated blood pressure cuff instead of manual); and repeat your measurements.

Accuracy of the variable is the degree to which it actually represents what it is intended to (Truth in the Universe). This influences the validity of the study. Accuracy is impacted by systematic error (bias). The greater the error, the less accurate the variable. Three common biases are: observer bias (how the measurement is reported); instrument bias (faulty function of an instrument); and subject bias (bad reporting or recall of the measurement by the study subject).

Validity is the degree to which a measurement represents the phenomenon of interest. When validating an abstract concept, search the literature or consult with experts so you can find an already validated data collection instrument (such as a questionnaire). This allows your results to be comparable to prior studies in the same area and strengthens your study methods.

Research manual

Simple research with limited resources does not need a research manual, just a protocol. Nor is there much need if the primary investigator is the only data gatherer and analyser. However, if several persons gather data, it is important that the data be gathered the same way each time.

Prevention is the most cost-effective activity that will ensure the integrity of data collection. A detailed and comprehensive research manual will standardize data collection. Poorly written manuals are vague and ambiguous.

The research manual is based on your protocol. The manual should spell out every step of the data collection process. It should include the name of each variable and specific details about how each variable should be collected. Contingencies should be written down. For example: “If the patient does not have a left arm, the blood pressure may be taken on the right arm. If the patient has no arms, leg blood pressures may be recorded, but put an ‘*’ beside the reading.” The manual should also include every step of the coding process. The coding manual should describe the name of each variable, and how it should be coded. Both the coder and the statistician will want to refer to that section. The coding section should describe how each variable will be entered into the database. Test the manual to make sure everyone understands it the same way.

Think about various ways a plan can go wrong. Write them down, with preferred solutions. There will always be unexpected changes. They should be added into the manual on a continuing basis. An on-going section where questions, problems and their solutions are all recorded will increase the integrity of your research.

Data collection methods

Before you start data collection, you need to ask yourself what data you are going to collect and how you are going to collect them. Which data, and the amount of data to be collected needs to be defined clearly. Different people (including several data collectors) should have a similar understanding of each variable and how it is measured. Otherwise, the data cannot be relied on. Furthermore, the decision to collect a piece of data needs to be justified. The amount of data collected for the study should be sufficient. A common mistake is to collect too much data without actually knowing what will be done with it. Researchers should identify essential data elements and eliminate those that may seem interesting but are not central to the study hypothesis. Collection of the latter type of data places an unnecessary burden on both the study participants and data collectors.

Different data collection approaches which are commonly used in the conduct of clinical research include questionnaire surveys, patient self-reported data, proxy/informant information, hospital and ambulatory medical records, as well as the collection and analysis of biologic samples. Each of these methods has its own advantages and disadvantages.

Surveys are conducted through administration of standardized or home-grown questionnaires, where participants are asked to respond to a set of questions as yes/no, or perhaps on a Likert type scale. Sometimes open-ended responses are elicited.

Medical records can be important sources of high-quality data and may be used either as the only source of data, or as a complement to information collected through other instruments. Unfortunately, due to the non-standardized nature of data collection, information contained in the medical records may be conflicting or of questionable accuracy. Moreover, the extent of documentation by different providers can vary significantly. These issues can make the construction or use of key study variables very difficult.

Biological materials collected from study participants, as well as various imaging modalities, are increasingly being used in clinical research. Collection needs to be performed under standardized conditions, and ethical implications should be considered.

Data collection tool

You may need to collect information on paper. If you do, it is useful to have the actual code which should be entered into the computerized database written on the forms themselves (as well as in the manual). If you have access to an electronic database such as REDCap (a web-based application developed by Vanderbilt University to capture data for clinical research and create databases and projects [ 4 ]), you can enter the data directly as you get them (male; female) and the database will automatically convert the data into code. This reduces transcribing errors. Another common electronic tool is Excel, which can also be used to manipulate the data. In spite of the advantages of recording data electronically, such as directly into REDCap or Excel, there are advantages to collecting and keeping the original data on paper. Paper data collection forms can be saved for audit or quality control. Furthermore, paper records cannot be remotely hacked. Moreover, if the anonymous electronic database is compromised or corrupted, you can re-create your database.

Data collectors

Good data collectors are worth gold. If they are thorough and ethical, you will get great data. If not, your data may be unusable. Make sure they understand research ethics, the need for protection of human subjects, and the privacy of data. Ideally, your data collectors would be blinded to the outcome of interest, to prevent bias. It is ok to blind data collectors to the research question, but they need to understand that collecting every variable the same way for each subject is essential to data integrity.

Data gatherers should be trained in advance of collecting any data. They need to understand informed consent and have the time to explain a study to the satisfaction of the subjects. The importance of conducting a dry run in an attempt to anticipate and address issues that can arise during data collection cannot be over-stated. It would even be worthwhile to pilot the research manual, to learn if everyone understands it the same way.

Data storage

Data collection, done right, protects the confidentiality of the subject as well as the data. Data must also be stored safely and securely. It is reasonable to back up your data in a different, secure, location. You do not want to go to all the trouble of creating a protocol and collecting your data, only to lose it, or have no way to analyse it!

There are many reasons to keep your data safe and secure. Obviously, you do not want to lose your data. You may wish to use the data again. For example, you may wish to combine it with other data for a different study. An additional reason is that you do not want your subjects to risk a ‘loss of privacy’. Still another reason is that institutions and governments may require you to store data for a specified number of years. Know how long you must keep your data. Keep it in a locked cabinet in a secure room, or behind an institutional firewall.

Furthermore, if you keep a cipher , that is, a connector between a subject and their study number, keep that cipher separate from the research data. That way, even if someone learns that subject 302 has an embarrassing condition, they will not know who subject 302 really is.
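The separation described above can be sketched as follows. The field names, the example subjects, and the four-digit study numbers are invented for illustration; a real study would follow its own protocol for assigning study numbers and for storing the key.

```python
import secrets


def split_identifiers(records, id_fields=("name",)):
    """Split records into a key (study number plus identifiers) and de-identified data."""
    key_rows, data_rows = [], []
    used = set()
    for record in records:
        # Draw a random four-digit study number that has not been used yet.
        study_id = secrets.randbelow(9000) + 1000
        while study_id in used:
            study_id = secrets.randbelow(9000) + 1000
        used.add(study_id)
        key_rows.append({"study_id": study_id, **{f: record[f] for f in id_fields}})
        data_rows.append(
            {"study_id": study_id,
             **{k: v for k, v in record.items() if k not in id_fields}}
        )
    return key_rows, data_rows


# Fictitious subjects, for illustration only.
records = [
    {"name": "Subject A", "age": 34, "diagnosis": "asthma"},
    {"name": "Subject B", "age": 51, "diagnosis": "fracture"},
]
key_rows, data_rows = split_identifiers(records)

# key_rows would be stored separately (for example, in a locked cabinet);
# data_rows is what goes into the research database.
print(data_rows)
```

Anyone who sees only the research data learns a study number and clinical details, but cannot connect them to a person without the separately stored key.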

These days, almost everyone has access to computers and programs, locally or ‘in the cloud’. For statistical analysis, you will need to have your data in electronic form. If you started with paper, consider double entry (two data extractors for each record, then compare the two) for greater accuracy.
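A minimal sketch of the comparison step in double entry is shown below. It assumes each extractor's work is available as a dictionary keyed by study number; the field names and values are made up for the example.

```python
def compare_entries(first, second):
    """Return (study_id, field, value_1, value_2) for every disagreement."""
    discrepancies = []
    for study_id in sorted(set(first) | set(second)):
        a = first.get(study_id)
        b = second.get(study_id)
        if a is None or b is None:
            # The record was entered by only one of the two extractors.
            discrepancies.append((study_id, "<record>", a, b))
            continue
        for field in sorted(set(a) | set(b)):
            if a.get(field) != b.get(field):
                discrepancies.append((study_id, field, a.get(field), b.get(field)))
    return discrepancies


# Two independent entries of the same paper forms (illustrative values).
entry_1 = {301: {"sex": 1, "age": 34}, 302: {"sex": 2, "age": 51}}
entry_2 = {301: {"sex": 1, "age": 34}, 302: {"sex": 2, "age": 15}}

print(compare_entries(entry_1, entry_2))  # [(302, 'age', 51, 15)]
```

Each discrepancy is then resolved by going back to the original paper form, rather than by trusting either entry.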

Tips on this topic and pitfalls to avoid

Hazard: no research manual.

- No identified mechanism to document changes in procedures that may evolve over the course of the investigation.

- Vague description of data collection instruments to be used in lieu of rigorous step-by-step instructions on administering tests.

- Only a partial listing of variables to be collected.

- Forgetting to put instructions on the data collection sheet about how to code the data when transferring to an electronic medium.

Hazard: no assistant training

- Failure to adequately train data collectors.

- Failure to do a dry run or to try enrolling a mock subject.

- Uncertainty about when, how, and by whom gathered data should be reviewed.

Hazard: failure to understand data management

- Data should be easy to understand, and the protocol good enough that another researcher can repeat the study.

- Data audit: keep raw data and collected data.

- Failure to keep backups.

Annotated bibliography

- 1. RCR Data Acquisition and Management. This online book is pretty comprehensive. http://ccnmtl.columbia.edu/projects/rcr/rcr_data/foundation/ (Accessed 2019 June 23)

- 2. Qualitative research – Wikipedia: en.wikipedia.org/wiki/Qualitative_research (Accessed 2019 June 23) – this is a good overview with references so you can delve deeper if you wish.

- 3. Qualitative Research: Definition, Types, Methods and Examples: https://www.questionpro.com/blog/qualitative-research-methods/ (Accessed 2019 June 23) – this is a good overview with references so you can delve deeper if you wish.

- 4. Qualitative Research Methods: A Data Collector's Field Guide: https://course.ccs.neu.edu/is4800sp12/resources/qualmethods.pdf (Accessed 2019 June 23) – another on-line resource about data collection.

Additional reading about statistical variables

- 1. Types of Variables in Statistics and Research: A List of Common and Uncommon Types of Variables. https://www.statisticshowto.datasciencecentral.com/probability-and-statistics/types-of-variables/

- 2. Research Variables: Dependent, Independent, Control, Extraneous & Moderator. https://study.com/academy/lesson/research-variables-dependent-independent-control-extraneous-moderator.html

- 3. Knatterud GL, Rockhold FW, George SL, Barton FB, Davis CE, Fairweather WR, Honohan T, Mowery R, O'Neill R. (1998). Guidelines for quality assurance in multicenter trials: a position paper. Controlled Clinical Trials, 19:477–493.

- 4. Whitney CW, Lind BK, Wahl PW. (1998). Quality assurance and quality control in longitudinal studies. Epidemiologic Reviews, 20(1):71–80.

Additional relevant information to consider

Consider who owns the data before and after collection (this brings up questions of consent, privacy, sponsorship and data-sharing, most of which are beyond the scope of this paper).

Authors' contribution

Authors contributed as follows to the conception or design of the work; the acquisition, analysis, or interpretation of data for the work; and drafting the work or revising it critically for important intellectual content: ES contributed 70%; VT, MJ and HS contributed 10% each. All authors approved the version to be published and agreed to be accountable for all aspects of the work.

Declaration of competing interest

The authors declared no conflicts of interest.

Primary vs Secondary Data: 15 Key Differences & Similarities

Now that data is easily accessible to researchers all over the world, using secondary data for research is becoming more practical and more common, although its authenticity is more open to question than that of primary data.

Either type of data can be a double-edged sword: the choice can make a research project just as easily as it can mar it.

In a nutshell, primary data and secondary data both have their advantages and disadvantages. Therefore, when carrying out research, it is left for the researcher to weigh these factors and choose the better one.

It is therefore important for one to study the similarities and differences between these data types so as to make proper decisions when choosing a better data type for research work.

What is Primary Data?

Primary data is data collected directly from the data source without going through any existing sources. It is mostly collected specifically for a research project and may later be shared publicly for use in other research.

Primary data is often reliable, authentic, and objective inasmuch as it was collected to address a particular research problem. It is noteworthy that primary data is not commonly collected because of the high cost of doing so.

A common example of primary data is the data collected by organizations during market research, product research, and competitive analysis. This data is collected directly from its original source, which in most cases is the organization's existing and potential customers.

Most of the people who collect primary data are government-authorized agencies, investigators, research-based private institutions, etc.


Advantages of Primary Data

- Primary data is specific to the needs of the researcher at the moment of data collection. The researcher is able to control the kind of data that is being collected.

- It is generally more accurate than secondary data. Because the data has not passed through another party's interpretation, its authenticity is easier to trust.

- The researcher owns the data collected through primary research . He or she may choose to make it available publicly, patent it, or even sell it.

- Primary data is usually up to date because it is collected in real time rather than drawn from old sources.

- The researcher has full control over the data collected through primary research . He or she can decide which design, method, and data analysis techniques to use.

Disadvantages of Primary Data

- Primary data is very expensive compared to secondary data, which can make it difficult to collect.

- It is time-consuming.

- It may not be feasible to collect primary data in some cases due to its complexity and the commitment required.

What is Secondary Data?

Secondary data is data that was collected in the past by someone else and made available for others to use. It was usually once primary data, and becomes secondary when used by a third party.

Secondary data is usually easily accessible to researchers and individuals because it is mostly shared publicly. This, however, means that the data is usually general and not tailored to the researcher's needs in the way primary data is.

For example, when writing a research thesis, researchers consult past work in the field and add its findings to the literature review. Other material, such as definitions and theorems, is also secondary data that is added to the thesis and must be properly referenced and cited.

Some common sources of secondary data include trade publications, government statistics, journals, etc. The authenticity of these sources varies, so it should be verified before the data is relied upon.


Advantages of Secondary Data

- Secondary data is easily accessible compared to primary data. It is available on different platforms that the researcher can access.

- Secondary data is very affordable. It costs little or nothing to acquire because it is sometimes given out for free.

- The time spent on collecting secondary data is usually very little compared to that of primary data.

- Secondary data makes it possible to carry out longitudinal studies without having to wait for a long time to draw conclusions.

- It helps to generate new insights into existing primary data.

Disadvantages of Secondary Data

- Secondary data may not be authentic and reliable. A researcher may need to further verify the data collected from the available sources.

- Researchers may have to deal with irrelevant data before finally finding the required data.

- Some of the data may be exaggerated due to the personal bias of the data source.

- Secondary data sources are sometimes outdated, with no new data to replace the old.

Here are 15 Differences between Primary and Secondary Data

Primary data is the type of data that is collected by researchers directly from main sources while secondary data is the data that has already been collected through primary sources and made readily available for researchers to use for their own research.

The main difference between these 2 definitions is the fact that primary data is collected from the main source of data, while secondary data is not.

The secondary data made available to researchers from existing sources was formerly primary data collected for research in the past. The availability of secondary data is highly dependent on the primary researcher’s decision to share their data publicly or not.

An example of primary data is the national census data collected by the government while an example of secondary data is the data collected from online sources. The secondary data collected from an online source could be the primary data collected by another researcher.

For example, the government, after successfully conducting the national census, shares the results in newspapers, online magazines, press releases, etc. Another government agency that is trying to allocate the state budget for healthcare, education, etc. may need to access the census results.

With access to this information, the number of children who need education can be analyzed and used to determine the amount that should be allocated to the education sector. Similarly, knowing the number of old people will help in allocating funds for them in the health sector.

Primary data is collected in real time, while secondary data is historical and may be stale. Researchers have access to the most recent data when conducting primary research , which may not be the case for secondary data.

Secondary research has to depend on primary data that was collected in the past. In some cases, the researcher may be lucky and the data was collected close to the time that he or she is conducting research, which reduces the gap between the secondary data being used and current data.

Researchers are usually very involved in the primary data collection process, while secondary data is quick and easy to collect. This is because primary research is often longitudinal.

Researchers therefore have to spend a long time performing research, recording information, and analyzing the data. By contrast, data for secondary research can be collected and analyzed within a few hours.

For example, an organization may spend a long time analyzing the market size for transport companies looking to move into the ride-hailing sector. A potential investor can then take this data and use it to inform the decision on whether to invest in the sector.

- Availability

Primary data is available in crude form while secondary data is available in a refined form. That is, secondary data is usually made available to the public in a simple form that a layman can understand, while primary data is usually raw and has to be simplified by the researcher.

Secondary data is refined because it has already been broken down by the researchers who originally collected it as primary data. A good example is the Thomson Reuters annual market reports that are made available to the public.

When Thomson Reuters first collects this data, it is usually raw and may be difficult to understand. The company simplifies the results by visualizing them with graphs, charts, and explanations in words.

- Data Collection Tools

Primary data can be collected using surveys and questionnaires, while secondary data is collected using the library, bots, etc. The differences between these data collection tools are glaring, and they cannot be used interchangeably.

When collecting primary data, researchers look out for a tool that is easy to use and can collect reliable data. One of the best primary data collection tools that satisfies this condition is Formplus.

Formplus is a web-based primary data collection tool that helps researchers collect reliable data while simultaneously increasing the response rate from respondents.

Primary data sources include surveys, observations, experiments, questionnaires, focus groups, interviews, etc., while secondary data sources include books, journals, articles, web pages, blogs, etc. These sources are clearly distinct, and there is no intersection between the primary and secondary data sources.

Primary data sources are sources that require a deep commitment from researchers and require interaction with the subject of study. Secondary data, on the other hand, do not require interaction with the subject of study before it can be collected.